WebAPI Manager: limit website access to Web APIs

WebAPI Manager is an open source extension for the Firefox and Google Chrome web browsers that you may use to limit website access to Web APIs.

Support for new features and technologies exploded in recent years. Browser makers like Mozilla or Google integrate APIs into their web browsers that websites may use.

While there is no doubt that many of the features are beneficial, as they give sites new capabilities, some features may also get abused or are not really used by a lot of sites out there.

Some examples: Canvas may be used for fingerprinting, WebRTC may leak the device's local IP address even when a VPN is used, and sites could use the Battery Status API to fingerprint clients as well.

The author of WebAPI Manager identified two core issues when it comes to the integration of new functionality in web browsers: that some features are rarely if ever used, and that features are used for non-user-serving purposes such as fingerprinting users or attacking them outright.

WebAPI Manager

WebAPI Manager is a browser extension for Google Chrome and Mozilla Firefox that gives you control over WebAPI use in the browser. While I have not tried the extension in browsers like Opera or Vivaldi, it is likely that it will work in those browsers as well.

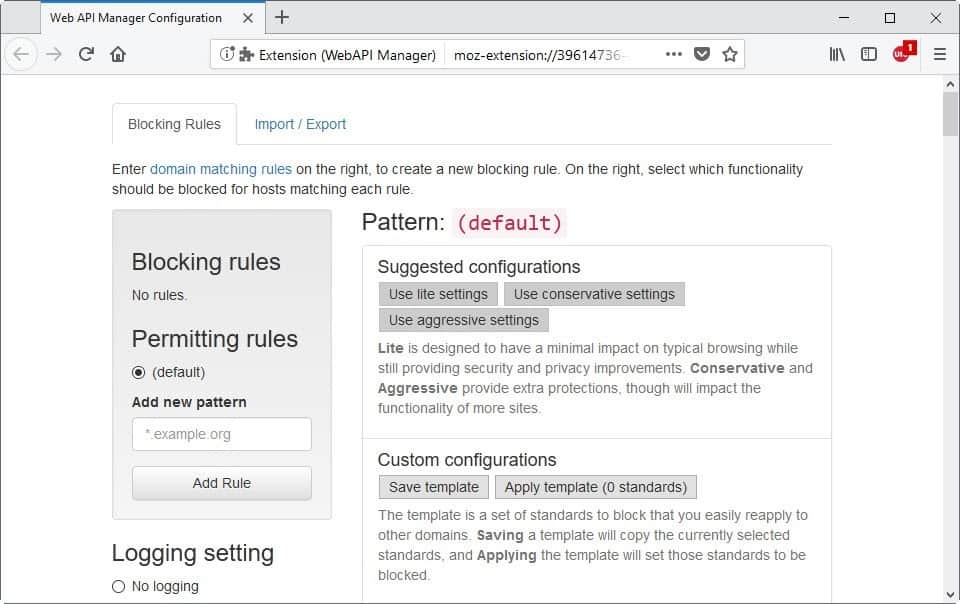

The extension won't change support for any APIs by default. It is up to you to limit access to APIs, and you have two main options to do that.

You may enable a suggested configuration. WebAPI Manager includes three which differ regarding aggressiveness. The lite configuration should have minimal impact on the functionality of sites while conservative and aggressive settings may impact functionality more but improve security and privacy more as well.

The extension marks all features of the selected configuration so that you know what gets blocked when you apply it.

You don't need to use suggested configurations. You may create a custom configuration and have it applied automatically to sites you visit. This requires a more in-depth knowledge of APIs and technologies, however.

The extension lists general information on the configuration page and links to specifications so that you may read up on a certain feature before deciding whether to block it or not.

The list of APIs and features that you may block is extensive. To name a few: Service Workers, WebGL 2.0, Canvas Element, Scalable Vector Graphics, Battery Status API, Ambient Light Sensor, Vibration API, Encrypted Media Extensions, WebVR, Web Audio API, Payment Request API, Beacon, Push API, or WebRTC 1.0.

WebAPI Manager may block functionality on matching domains using host-matching regular expressions, or across all domains using the default blocking rule.
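To illustrate how host-matching rules with a default fallback can be resolved, here is a minimal Python sketch. The rule layout, the `blocked_apis_for_host` helper, the example hosts, and the choice of "first matching rule wins" are all assumptions made for illustration; they are not taken from the extension's code or its export format.

```python
import re

# Hypothetical rule set: each entry pairs a host-matching regular expression
# with the set of APIs blocked on matching hosts. Not the extension's format.
HOST_RULES = [
    (re.compile(r"^(.*\.)?example\.com$"), {"WebRTC 1.0", "Beacon"}),
    (re.compile(r"^mail\.example\.org$"), set()),  # nothing blocked here
]

# Hypothetical default rule, used when no host pattern matches.
DEFAULT_BLOCKED = {"Canvas Element", "Battery Status API"}


def blocked_apis_for_host(host):
    """Return the blocked APIs for a host: first matching rule, else the default."""
    for pattern, blocked in HOST_RULES:
        if pattern.match(host):
            return blocked
    return DEFAULT_BLOCKED
```

In this sketch a host such as `www.example.com` picks up the first rule, while any host without a matching pattern falls back to the default rule.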

The extension includes two features right now that reveal the APIs and functions a website uses to you. It adds an icon to the browser's toolbar on installation that displays the number of sites and whether APIs are blocked. This works similarly to how content blockers such as NoScript or uBlock Origin highlight activity.

A click on the icon lists each host and the number of APIs blocked. The interface has an "allow all" button to whitelist a domain and an option to configure blocking rules for the host in question.

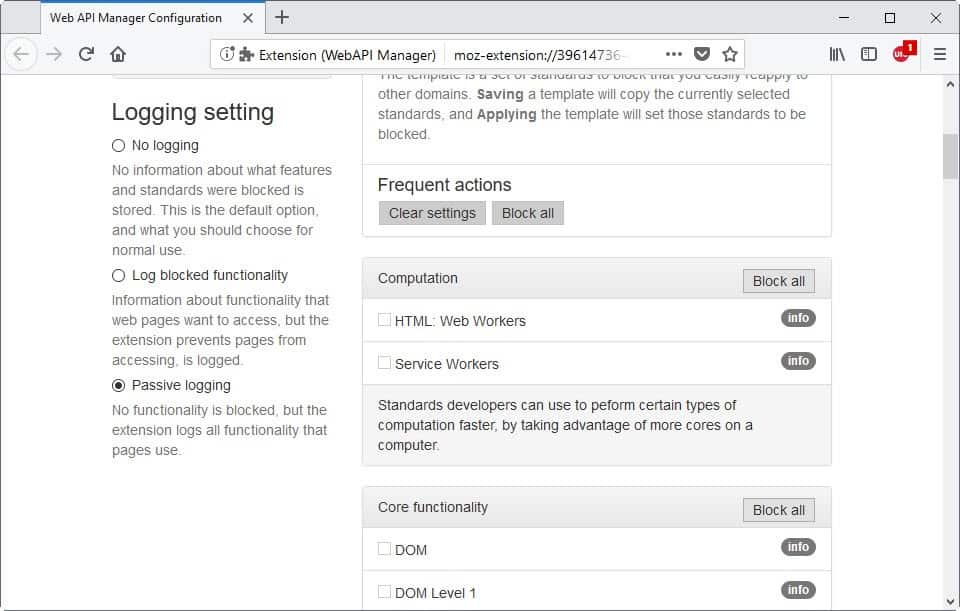

The second option that you have to find out which features sites use is to enable passive logging. This logs all functionality so that you may access it and see which APIs sites use. You may use the information to customize rules for specific sites and export all logged information for all tabs at once.

WebAPI Manager supports rule importing and exporting, which is useful if you want to use the extension on multiple devices or across different browsers.

The future

Of all the planned features that may land at one point or another, it is support for rule sets that I'm most excited about. The system would work similarly to how content blockers load rule lists right now. This would make it easier for users who want to improve their privacy and security without investing a lot of time into researching Web APIs and customizing access for sites based on trial and error.

Closing Words

WebAPI Manager is an excellent companion extension for content blockers. While some content blockers may block some features as well or may be configured to do so, the bulk is not touched if scripts run on the root domain.

You can use it to block features that many sites abuse (Canvas and Beacon come to mind), or use an aggressive configuration and customize it only if sites you visit regularly require certain functionality to run properly.

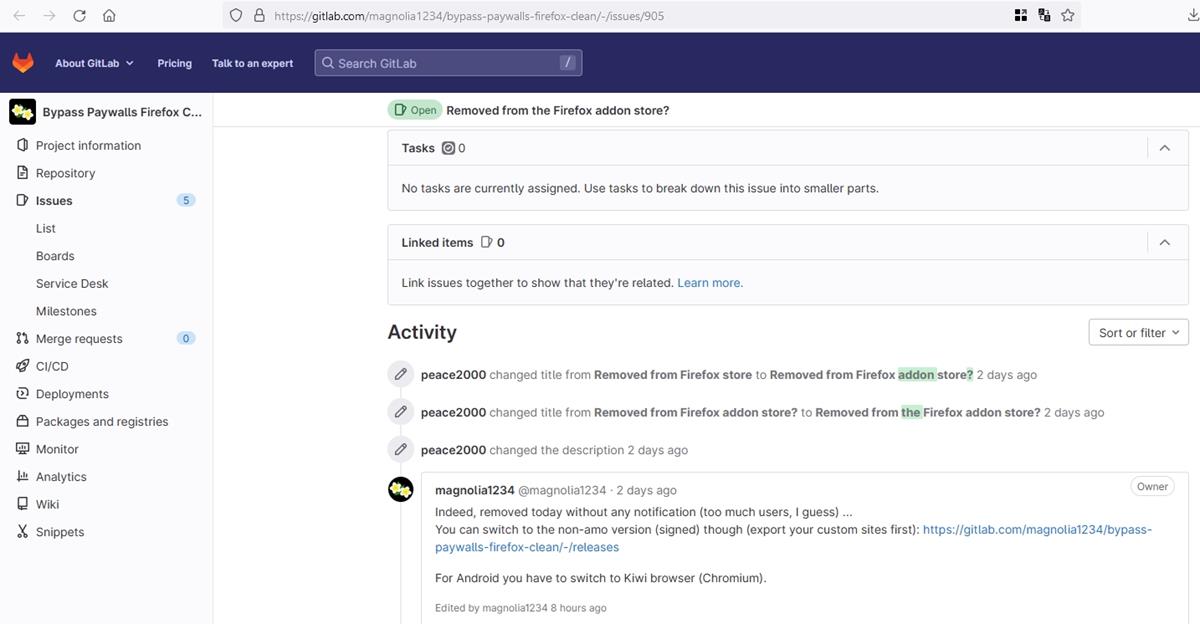

I just came across this article and found that both the Mozilla Add-on page and the author’s GitHub repo say that the add-on is unmaintained. Last update: Feb 14, 2018 (v. 0.9.27).

This was (and perhaps still is for a bit longer, I’ll need to install and test) one of the most promising, powerful and exciting extensions I’ve read about. To be able to manage this in a user-friendly UI on a per site basis is both impressive and empowering (for the end-user).

I hope the status changes and/or the main author (Peter Snyder) can find the time to keep the main core compatible and bug free – going forward.

On Chromium-based browsers, resisting fingerprinting is hopeless. It’s best to reduce exposure to the maximum with things like uMatrix.

Resisting fingerprinting implies making the least amount of tweaks possible in order to be as close to the main browser crowd as possible. Any tweak made is a compromise between getting further from the main crowd and closer to a smaller but actually more protected crowd guaranteeing better privacy. As more tweaks are made though, we move towards crowds that become too small for the extra protections they have to be worth it.

To compensate for this drawback we were forced until now to reduce exposure. These days, Mozilla’s Tor Uplift project and privacy.resistFingerprinting are coming to the rescue regarding this tweaking dilemma, because we have far fewer deliberations to make and we scatter ourselves much, much less into crowds that become smaller and smaller. When RFP is finished, it will appear in Firefox’s UI or be enabled by default in Private Browsing and many more users will come to use it. We hopefully won’t have to flip any other pref to protect ourselves from fingerprinters, just like on Tor Browser. Well, maybe a couple. It would be nice if there was a way for RFP to better handle the window size issue though, but it’s a huge fingerprinting avenue so the solution is not evident.

TL;DR I don’t recommend using that kind of add-on if resisting fingerprinting is your goal. If you want to protect yourself from it, I recommend using Firefox with RFP enabled or Tor Browser, or possibly a Waterfox install with RFP IF you are confident you can set it to reliably spoof Firefox. Beware though, RFP is as powerful as it is intrusive so keep in mind that you enabled it. If something is odd on a website, think about disabling RFP and reloading the website.

If the aforementioned browsers are not an option for you, forget resisting fingerprinting: you can install this add-on or not, it really won’t change much except browsing comfort. (The information-only part of the add-on is pretty interesting though.) Instead focus your efforts on reducing exposure, by which I mean reducing the number of servers from which your browser is requesting resources, and reducing leaks of information that go along with these network requests.

Using this could be complementary, but would require (for now) re-thinking your overall strategy. It requires cookies to be enabled (I have not used it, but have followed it for months and had a few chats with Peter on GitHub – so not sure if it requires 3rd party cookies in order to handle 3rd party content). For now, each site needs cookie permissions in order for the extension to “inject itself?” into the content. But there may be hope for some API changes within Mozilla that eliminate this reliance.

My strategy is to default deny cookies (which controls other persistent storage) but allow a small handful of site exceptions (about 8 in total). I also block cookies by default in uMatrix – but uMatrix actually allows cookies to be received, it just blocks them from getting back out.

So in theory, you could allow all cookies in FF settings and control them via uM. This would then allow WebAPI Manager to go to work.

Additionally, FPI also controls 3rd party leakage (if 3rd party cookies are required). FPI has some issues with logins that utilize a supercookie flow – but then there are “internal” issues with Origin Attributes that are slowly being fixed (so much stuff is OA keyed, and here we see issues with extensions yet again)

Time will sort it all out.

Same goes for Luminous (which I have not looked at in any detail), which is an events driven blocker on a granular/domain level.

uBo/uM etc = domain level control of content types (in general)

WebAPI Manager – domain level control of APIs

Luminous – domain level control of types of events (in JS) (not looked at it except in passing for now)

^^ just a general overview. The question is will they all play nicely together, and under what overall strategy with FF settings? Do they play nice with FPI (can’t see RFP causing issues)?

It doesn’t leak stateful information, yeah.

It doesn’t prevent network requests to third parties though, so it still leaks fingerprint and IP; any tracking method that doesn’t depend on the browser storing state is not defeated by First Party Isolation. FPI thoroughly ties everything to the domain displayed in the URL bar (up to even low level stuff like DNS cache, HSTS, AltSvc, … Firefox’s implementation of FPI is actually more thorough than Tor Browser’s.), but it doesn’t block network requests to third parties. I think Firefox’s combo of Tracking Protection + FPI + RFP is pretty awesome on its own. If you add the Tor network (Mozilla’s “Fusion” project), you almost have Tor Browser’s default config available to all Firefox users on Private Browsing. We would still need a unified hard mode though, because currently we still have to rely on add-ons with little market share to block JavaScript or other types of content. As long as we do…

I mean, as long as IP can be used, only a small fingerprint entropy is necessary to uniquely identify and thus track people. That’s why fingerprinting resistance is completely useless on Chromium-based browsers, and Firefox has only recently been reaching a state where it can claim to actually give a chance against fingerprinters. I don’t think it’s quite there yet, at least I’m not ready to conclude that it is, so I still rely on drastically reducing exposure to third parties. It did allow me to enable a number of web standards (Web APIs in the article) that I previously disabled, and as a result my browsing comfort increased.
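A small sketch of why the IP address matters so much here: the number of bits needed to single out one user is the base-2 logarithm of the number of candidates. The `bits_needed` helper is a name made up for this illustration, and the candidate counts are made-up example figures, not measurements.

```python
import math


def bits_needed(candidates):
    """Bits of identifying information needed to single out one of `candidates` users."""
    return math.log2(candidates)


# Illustrative figures only (assumptions, not measured data):
# a large anonymous crowd versus a handful of users sharing one IP address.
whole_crowd = bits_needed(2 ** 31)   # about two billion browsers
behind_one_ip = bits_needed(8)       # eight users behind the same IP
```

Under these assumed numbers, roughly 31 bits are needed to pick one browser out of the whole crowd, but only 3 bits once the IP address has narrowed the candidates down to eight.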

I’m looking forward to Firefox 60, because that’s where Tor Browser will start making direct use of most of the Tor Uplift project. By the time the Fusion project is done (in a year ?), privacy on the web will be vastly improved because all Firefox users on Private Browsing mode will have few different fingerprints.

Yup TBB based on ESR60 can finally leverage it (not that they don’t already mitigate against it all anyway, resource URI’s excepted, which could only land/happen when legacy extensions were dropped). The RFP doesn’t actually cover THAT much (yet) and from my experience most of it has been HW/OS detection mitigations (yup, followed every single ticket, also on tor trac, and more, as you well know from the github sticky). If you look at the list in the RFP section in the user.js, it’s not very big – swallowed most of the hardware section though (section 2500 is almost empty now) – and the solutions are often better (eg fuzzing/spoofing vs disabling)

But it’s not so much the more elegant solutions or the mitigations implemented (for some of them there were no existing mechanisms at all) – it’s the fact that the same effects can be enforced for larger numbers of users – wait till it gets turned on in PB mode!!

One day, in the distant future (because priorities/resources/time), I expect half the prefs in the user.js to become redundant when RFP is true. I have 30 more items lined up for Arthur, Ethan and Tom (I’ve already had a lot, and I mean a lot, of input via various mechanisms – I’d explain more but do not want to give too much away)

However, the uplift will save them time at their end, and some of the implementations are better approaches – given input directly from Mozilla engineers as well, not to mention the superior numbers of end users testing it out. They’re hoping for a FF60 MVP (it says 59, but I say it’s 60 cuz … no sources, you’ll have to trust me xD edit: https://wiki.mozilla.org/Security/Fingerprinting – fuk it, have a source then) – so effectively they’re trying to get it ready for prime time and are just trying to clean up left-over current FP-breakage (and battling to get fonts via kinto done in time as well).

As for IP, it’s an assumption that users should know to mask this

Yes, I meant that I look forward to Tor Browser using Firefox 60 not because Tor Browser’s protections will get that much improved, but because we’ll get to see the work done widely exposed to real world scenarios, and I’ll be able to perceive more easily the remaining difference in protection between Firefox and Tor Browser :)

It may also help configuring Firefox, I’m slowly getting to the point where none of my pref changes will be related to things that can be detected by websites. (Instead the protection would be given by either uMatrix, uBO, RFP or FPI. uMatrix being the most fingerprintable of the lot.) Not there yet though.

don’t know why I said MVP, I meant “Ship”

I’m glad that there are people like you to back this work up so that the Tor Uplift project is not only importing work previously done by the Tor team in that area, but also an opportunity to do more.

The biggest problem for adoption right now is window size changes. I think the 1000×1000 default with 200×100 variations is way too uncomfortable and will just cause a high percentage of people to disable RFP.
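For readers unfamiliar with the scheme, here is a minimal Python sketch of the rounding described above. It assumes the window is rounded down to multiples of 200×100 and capped at 1000×1000; the `rounded_window_size` helper and its parameters are hypothetical and do not reproduce Firefox’s actual code.

```python
def rounded_window_size(avail_w, avail_h,
                        step_w=200, step_h=100,
                        max_w=1000, max_h=1000):
    """Round an available area down to step multiples, capped at max_w x max_h.

    Assumption: floor rounding with a fixed cap, as described in the comment.
    """
    width = min(avail_w, max_w) // step_w * step_w
    height = min(avail_h, max_h) // step_h * step_h
    return width, height
```

With these assumptions, an available area of 1366×768 ends up as 1000×700, which shows why wide screens get a window that is much closer to square than the screen itself.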

According to Mozilla’s study on privacy features, 17% of people from the RFP group reported a screen problem (slide 31), and no other group reported such issues. Among those who reported a screen problem, a whopping 94% left the study (slide 32).
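As a quick back-of-the-envelope check, the two percentages can be combined. This sketch assumes the 94% applies exactly to the 17% who reported a screen problem; the `share_who_left` helper is a name made up for this illustration.

```python
def share_who_left(reported_problem, left_given_problem):
    """Fraction of the whole group that reported a problem and then left."""
    return reported_problem * left_given_problem


# Figures quoted from the study slides mentioned above.
rfp_share = share_who_left(0.17, 0.94)
```

Under that assumption, about 16% of the RFP group both reported a screen problem and left the study.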

While I didn’t check the raw data to verify, this suggests that the window resize could be the culprit. Yet it is extremely important as window size is one of the biggest fingerprinting vectors there is, to the point that fingerprinting resistance is going to be impossible in many cases if RFP stops handling it.

The Tor developers are planning a feature that eases it out a bit, allowing users to dynamically resize the window in increments of 200×100 with visual feedback and all, but I’m not sure that will be enough. Mozilla UX people should chime in, and it’s IMO definitely worth a standalone study testing various options.

Still, as a quick improvement I would start ditching the 1000×1000 default to make it something more proportionate to screen sizes that people use. Firefox’s user base is dominated by 1366×768 screens and IIRC from a calculation I made some months ago screens that are mostly 4:3 in proportion are a minority. In that case, it makes sense that the default window proportion be something like 16:10 instead of 1:1. At least for me, that’s way more comfortable.

If at some point you have the occasion to raise this with Ethan (I think he is the one well versed in the screen resize area), he may be interested in the two slides from the study if he’s not aware of them already.

Forgive me if I’ve misunderstood… are you saying that it’s necessary for Firefox to actually change the window size to accomplish this? Why can’t it just lie to the web server about the window size?

Lying about window size can be detected. I’m not sure if there’s anything that can be done to let a user pick arbitrary window dimensions while letting content think window dimensions are, say, 1366×768 :/

It’s the same for fonts: You can report a font list that isn’t the truth, but content can find out that you’re lying. That’s why Firefox with RFP is going to ship with a bundled list of fonts (like Tor Browser), unless that list is downloaded the first time a user enables RFP (the decision depends on the file size of the fonts package). That way all RFP users have the exact same list of fonts and don’t lie, meaning that fonts, a huge fingerprinting vector, are thwarted.

@Pants & Zuck

That’s too bad. :( I would find the window changing size on its own unacceptable; I’d prefer that websites have their layout messed up over that happening. It sounds like there’s no good solution to this at all.

What I truly wish is that there was a way I could tell the browser to simply not report this sort of data to the web server at all.

@John Fenderson

Spoofing all measurements to inner window values removes all the variations (and measurements of extra things such as chrome) so they’re only dealing with one width and one height.

Lying about the inner window would mean websites could/would visually break as JS/CSS(?) expect the area to be what it says it is. Elements, width %’s, etc could end up in weird places – not an expert on CSS and web page design – but it’s just not feasible to lie.

They toyed with the idea of using zoom – eg let’s say the spoof reports a width of 1600 but in reality you are 1237px wide – so a zoom of something like 77% would get auto-applied. This idea didn’t get far, it’s too complicated.
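The 77% figure follows from dividing the real width by the spoofed width. A minimal Python sketch of that arithmetic (the `zoom_percent` helper is a hypothetical name, not something from Firefox or Tor Browser):

```python
def zoom_percent(real_width, spoofed_width):
    """Zoom factor, in percent, that fits a spoofed width into the real width."""
    return round(real_width / spoofed_width * 100)


zoom = zoom_percent(1237, 1600)  # 1237 / 1600 = 0.773..., i.e. about 77%
```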

Just not lying is the simplest way forward, and then using a set number of res combinations to reduce the entropy.

Here’s a cool video of dynamically resizing the content window (display window) and snapping the inner window/chrome to fit: https://www.youtube.com/watch?v=TQxuuFTgz7M

> I would start ditching the 1000×1000 default to make it something more proportionate to screen sizes that people use

Nah, that doesn’t work. It’s too limiting. As long as all RFP users’ sizes are enforced, the number of combinations needs to be reduced. Desktops being 16:9 = increments of 200×100 (wxh) – perfect. It’s just the auto-determining that is screwed up: max limits, and not using width properly. Just needs a tweak IMO

Whoa, just re-read what I said. The “its too limiting” was meant to apply to the comment about using common screen resolutions, nothing else. There should also be an upper limit. If they tweak the width, as you say, to accommodate desktops, then the combos already serve enough variation – no need to add in common screen res. That’s all I meant.

I didn’t mean it as a final solution of course. IMO we can’t figure out a final solution without doing a study that compares several propositions with a no-resize control group.

It’s just that the 1000×1000 default is terrible, and the 200×100 increments based on such a default only generate shit dimensions like 1000×800, i.e. we’re left with proportions that are in the ballpark of 4:3. I guess it’s comfortable on 4:3 screens (is it?), but since these are a minority we are probably inconveniencing a large number of users. I don’t know why they chose 1000×1000 as a default, possibly at the time 4:3 screens were a majority?

Regarding your resizing to 1366×768, you probably noticed but just in case beware, that’s telling websites that you are browsing fullscreen! It’s pretty rare. I would spoof a maximized window, so inner width and height of 1366x(768-K) where K is the height of the default Firefox UI in maximized mode + the default height of my OS taskbar :)
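The 1366x(768-K) formula can be written out as a short Python sketch. The `maximized_inner_size` helper is hypothetical, and the pixel heights used for the browser UI and the taskbar are placeholder values chosen for illustration; real values depend on the Firefox version, theme, and operating system.

```python
def maximized_inner_size(screen_w, screen_h, browser_ui_h, taskbar_h):
    """Inner window size of a maximized window: full width, height minus K."""
    k = browser_ui_h + taskbar_h  # K from the formula above
    return screen_w, screen_h - k


# Placeholder heights (assumptions): 74px of browser UI, 40px of taskbar.
inner = maximized_inner_size(1366, 768, browser_ui_h=74, taskbar_h=40)
```

With these placeholder heights K is 114, so the spoofed inner size would be 1366×654 rather than the fullscreen 1366×768.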

That said, someone who enables RFP is recognizable as someone who enabled RFP. Among those, my guess is that there’s a majority of 1000×1000 variants and a decent amount of maximized windows of various screen dimensions, 1366×768 being the most common. I decided to stick to the default variants but boy do I wish it would be improved!

*the default Firefox ESR UI, since RFP spoofs ESR.

I understand the ramifications of the res measurements. Even without JS, sites can get the info if they really wanted to ( https://arthuredelstein.github.io/tordemos/media-query-fingerprint.html – eg: using media elements). Until they sort out the RFP implementation, I need to resize as something – being 1400×900 is probably just as damning. I consider a lot of sites might not dig too deep and only get monitor res, who knows.

These client side FP’s are always a worst case scenario: I always assume things such as IP, 3rd party, etc as the first steps. It’s only if and when a site HAS to have things enabled that the extra stuff kicks in/makes a difference. By default I deny all JS, all 3rd party, all iFrames, all XHR, all cookies, all video, and so on.

tl;dr: well, then they better sort out a better set of auto-sizes :)

screen issues: not just the default 1000×1000 starting point but also site zoom would come under this (but less of a deterrent to using RFP)

I’m the same as you: TBB can restrict what they like, but for mainstream FF users the desktop res needs to be taken into account (it would be trivial to detect FF vs TBB when TFF build in Tor etc), so I’m only concerned with the FF set of users here. Most FF users are desktop users (95%? – you’d have to extrapolate the desktop vs mobile numbers and percentages).

Personally, for now, I use the hidden override prefs and set myself up as 1366×768. I know it rounds down (also, if you have the bookmark toolbar open, it’s always out by a pixel or two due to icon padding), but I use WindowResizer with just the one preset, so open FF at 1400×800, click preset to get 1366×768.

I would like the code to address width for desktop users: have some max limits, but adjust to get rid of squares. I do not care about using common screen resolutions, because sizes would be enforced on new windows for all RFP users. All it needs is a better determination of what width to use

Also people are annoyed with the start in max state, so that’s a separate % of annoyed uptakers

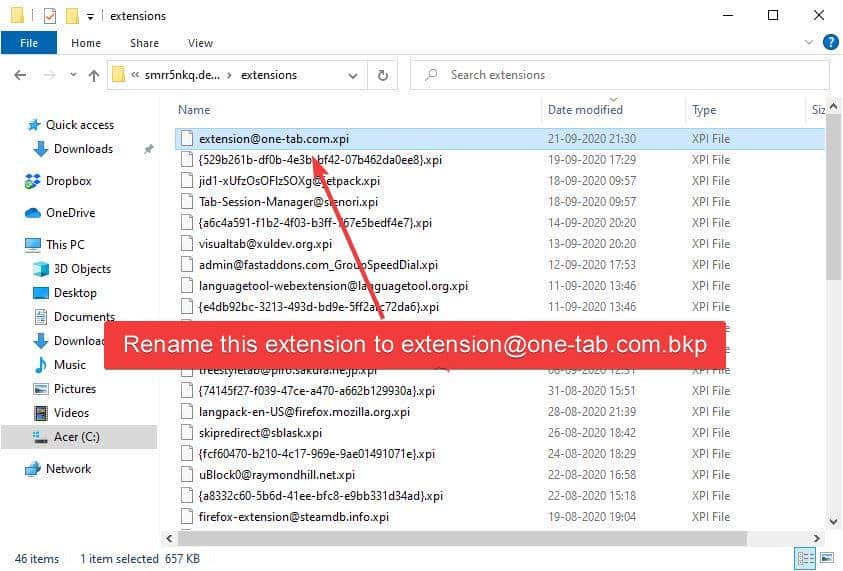

The article neglected to mention this Known Issue:

“[bug] not working with container tabs and First Party Isolate”

https://github.com/snyderp/web-api-manager/issues/53

How does WebAPI Manager compare to uBlock Origin and NoScript?

uBlock Origin just blocks certain websites and/or page elements. So you can use it to prevent certain sites (like ad companies) from running scripts, and hide ads or anything annoying on a page.

NoScript handles which sites are allowed to load scripts, and from where.

So all three extensions do somewhat different things but with some overlap.

If you want to block ads and the like, get uBlock Origin.

If you want to be able to block all scripts, get NoScript.

If you don’t want to be tracked, get WebAPI Manager (along with other privacy tools)

Dark, if you need to know how WebAPI Manager compares to uBlock Origin and NoScript, just search YouTube like you always do for your tech information. Just google kids playing video games talking about tech, or search YouTube for kids who play video games, don’t have any certifications in technology, and have never worked in an IT job before. Then when you find it you can post it here on this website, in non-https.

Here is another, IMHO, nifty little add-on worthy of mention, highly customizable. Give it a try ;)

https://addons.mozilla.org/it/firefox/addon/block-cloudflare-mitm-attack/

Very promising addon. I’ll keep an eye on it.

In the future the browser will be the OS for all (web) applications. In enterprise this has been the case for the last 15 years.

The problem will be to separate this platform for running web apps from the classic browser used for web surfing (this also is one of the biggest security problems in enterprises).

For my personal use I’m not a fan of web apps and I’ll resist as much as I can, but I need to start working out a strategy for separating this new ‘web OS platform’ from the classic web: many browsers with special roles, many profiles per browser, FF container technology, etc.

This type of addon (and the future iterations) will make the difference between a browser/profile/container used for a web app, which will be more ‘open’ (from a security standpoint) but used for a very special scope, and a browser/profile/container used for general, more risky purposes, which will be security hardened.

@STech

I’m with you on the dislike for web applications. My personal approach is to ignore them entirely. An extension like this could help in that a lot! If I have to use a browser that supports web apps (or HTML5 generally), this would be the next best thing.

It’s either web apps with open web standards or proprietary apps like on the smartphone and recent Windows versions.

When it comes to apps that need web access and your privacy, would you rather have them run in your browser which you know and control, or through standalone apps made by X amount of companies that can do whatever they want ? That’s what’s at stake from a privacy standpoint, but it’s not like it’s just privacy that’s on the line…

If you want to completely segregate web apps from regular browsing, just set up two different browser profiles.

Personally, I prefer standalone apps, because I can set firewall policies on a per-app basis. If they’re web apps, then I can only set policies for the web browser, and I lose a great deal of control over what apps get what access to the net.

So, for me, the security issue is that I’m less secure with web apps.

Also, web apps tend to suck terribly compared to native apps that do the same thing (although, admittedly, this is much less true on mobile devices than on the desktop).

This seems to be a well thought out extension for Chrome. Of course “Conservative” kills Google mail, but I have run into no other squawk so far.