The era of corporate AI: Is academia losing control?

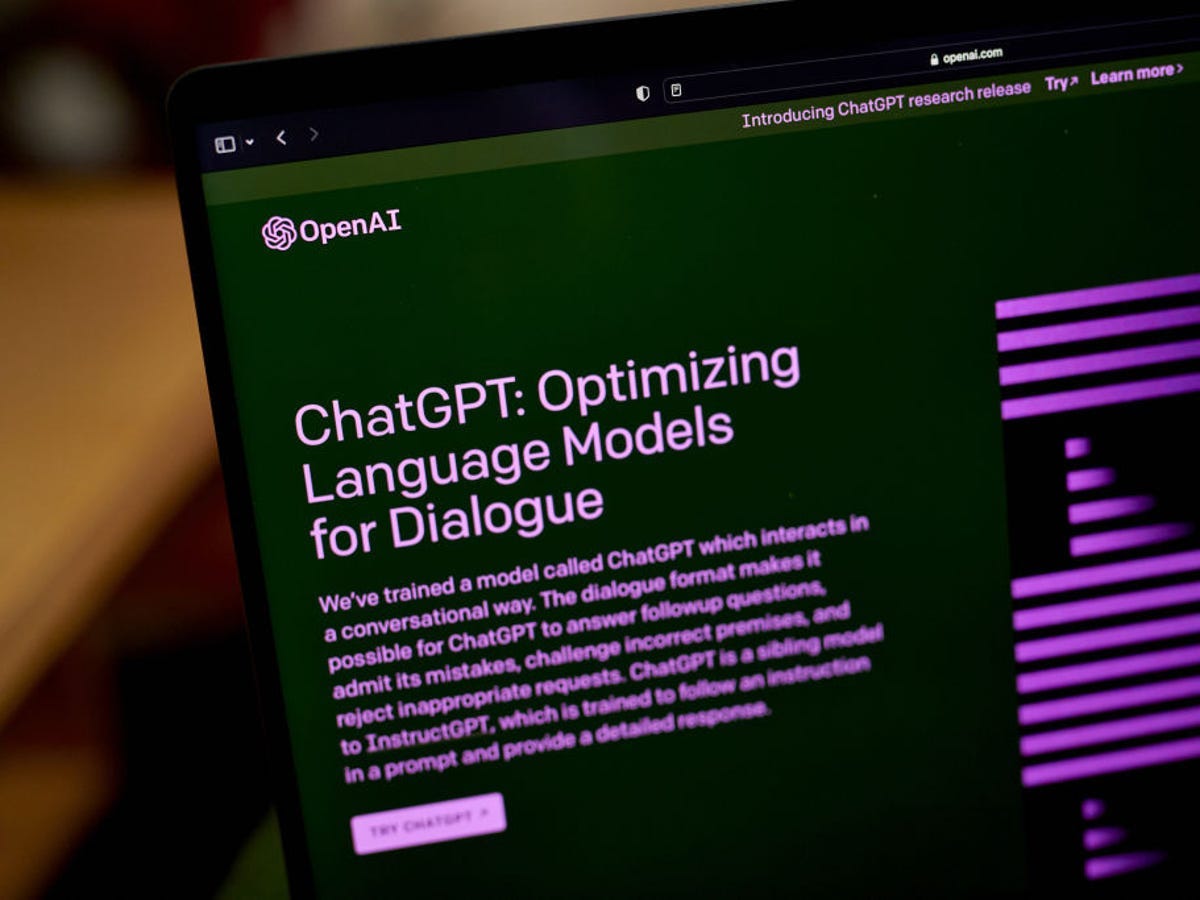

Artificial intelligence has reached a much wider user base in the last couple of years. Today, corporations are deciding the future of AI services: how to deploy them and how to balance risk against opportunity.

Researchers from Stanford University teamed up with companies such as Google, Anthropic, and Hugging Face to publish the 2023 AI Index. According to the report, AI is entering a new stage in which corporations increasingly control its future and the areas where it is used.

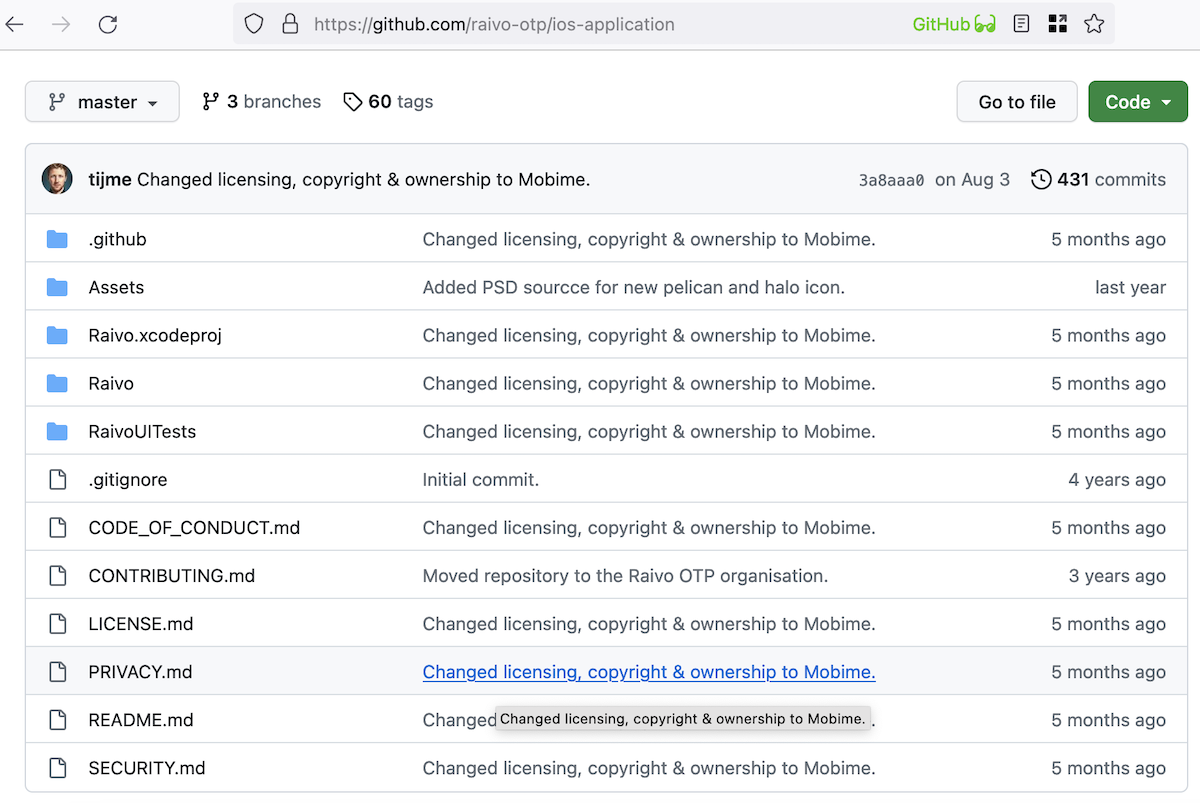

"Until 2014, most significant machine learning models were released by academia. Since then, industry has taken over. In 2022, there were 32 significant industry-produced machine learning models compared to just three produced by academia. Building state-of-the-art AI systems increasingly requires large amounts of data, compute, and money, resources that industry actors inherently possess in greater amounts compared to nonprofits and academia," says the report.

Corporate dominance of the field has brought both advantages and disadvantages. Especially in the last couple of years, we have seen more inappropriate behavior from chatbots, along with more ethical misuse of AI.

Ethical misuse incidents increased

Deepfake audio and images have become noticeably more common. According to the index, firms launched their products as quickly as possible to ride the wave of hype, and taking AI services mainstream in this way led to more ethical misuse.

Before the "corporate era," academia was responsible for developing state-of-the-art AI systems.

The numbers show that spending on the field has grown enormously in recent years. For instance, OpenAI spent an estimated $50,000 to build GPT-2, a 1.5-billion-parameter model, in 2019. About three years later, Google introduced PaLM, spending around $8 million on training and related expenses. PaLM has 540 billion parameters, 360 times as many as GPT-2.

AI services also have environmental costs. According to a 2022 study, training the large language model BLOOM emitted 25 times as much carbon as a single passenger's one-way flight from New York to San Francisco. The carbon cost of training OpenAI's GPT-3 was estimated to be 20 times that of BLOOM.

However, AI can also help the environment by cutting energy use and, with it, carbon emissions. In 2022, BCOOLER, an AI system from Google subsidiary DeepMind, reduced energy consumption by 12.7% during a three-month experiment in the company's data centers. It did so by optimizing cooling processes.

People in China have far more faith in AI than Americans do. In a 2022 Ipsos survey, 78% of Chinese respondents agreed with the statement that "products and services using AI have more benefits than drawbacks." Chinese respondents were the most enthusiastic about AI, followed by respondents from Saudi Arabia (76%) and India (71%). Only 35% of US respondents agreed with the statement.

If the findings in the last paragraph hold up, they raise many questions that academia may be well placed to answer.