Adobe Bets on Generative AI With ‘Firefly’ Tool to Create Images From Text

With so many apps incorporating AI into their systems, it only makes sense that Adobe does the same. Adobe recently jumped into the game by unveiling a new generative AI model called Firefly.

The main focus is to bring AI into Adobe’s suite of apps and services. Adobe VP Alexandru Costin told TechCrunch that Firefly will generate media content using multiple AI models that work across a variety of use cases.

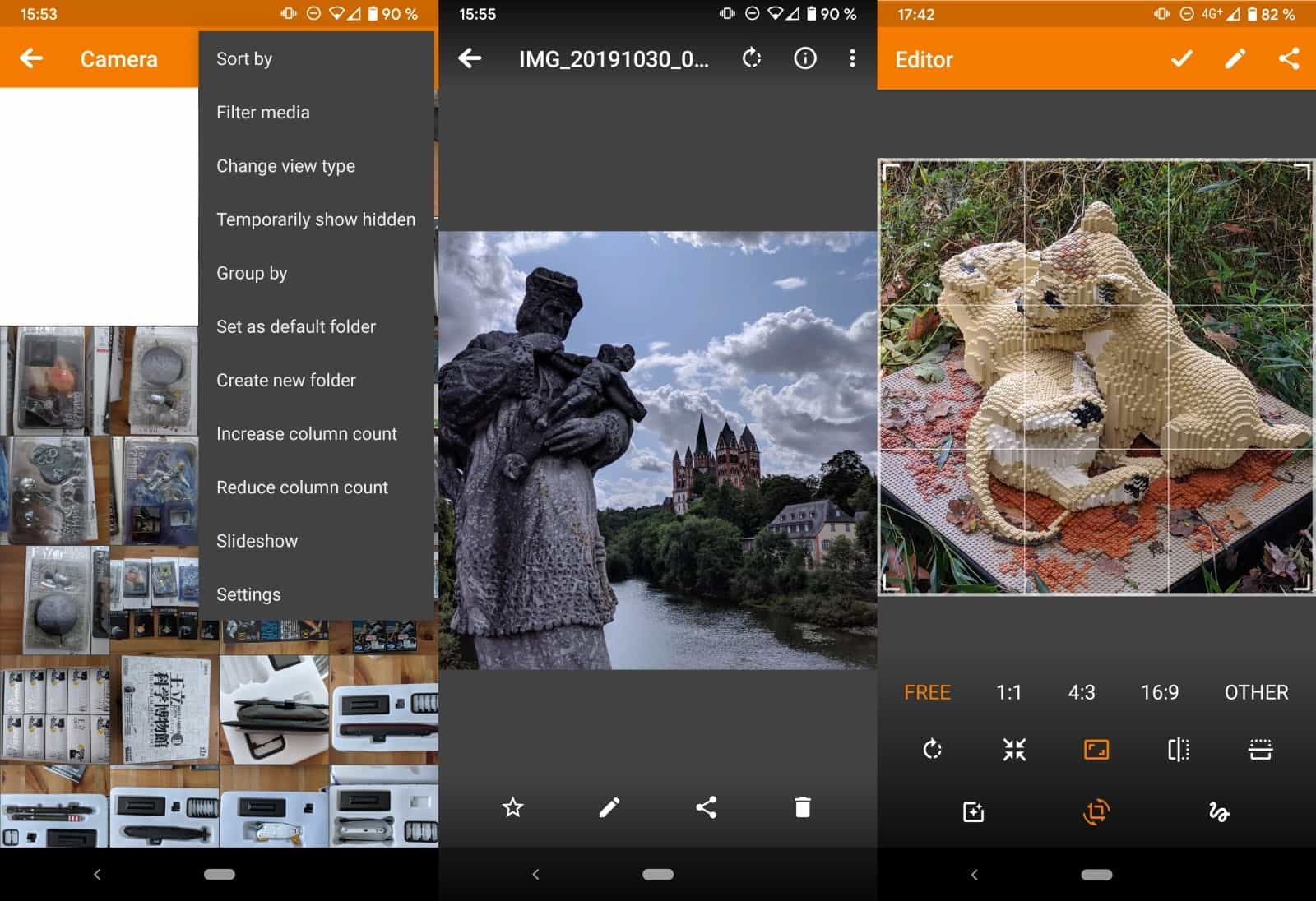

This will be an extension of the generative AI already used in Express, Lightroom, and Photoshop. These features let users create what they want using just a description. Costin also confirmed that Firefly would be Adobe’s next step in the AI journey. This new AI will work with the new ‘gentech’ models. These models work on imaging, typography, illustration, and more to produce assets. The content will be created on Creative Cloud, Document Cloud, and Experience Cloud.

Firefly is already available in beta without pricing, and Adobe promises that a price tag will be put on it soon. The AI generates images and text effects from a mere description. Adobe's first Firefly model can also transfer different styles to existing images, à la Prisma, in addition to text-to-image generation.

Adobe says metadata will be used to indicate that the artwork is AI-generated. With Firefly, anyone will be able to create content regardless of their talent and experience. Finally, I can use Photoshop without having to worry about the size of the brush.

What About the Creators?

On the technical side, the first Firefly model is similar to text-to-image systems such as OpenAI’s DALL-E 2 and Stable Diffusion. These models are trained on existing images from data sets. Some experts suggest that training models on public images should be covered by the fair use doctrine in the US. Another issue raised was AI’s tendency to replicate images. Some platforms have banned AI tools for fear of legal implications.

The Solution

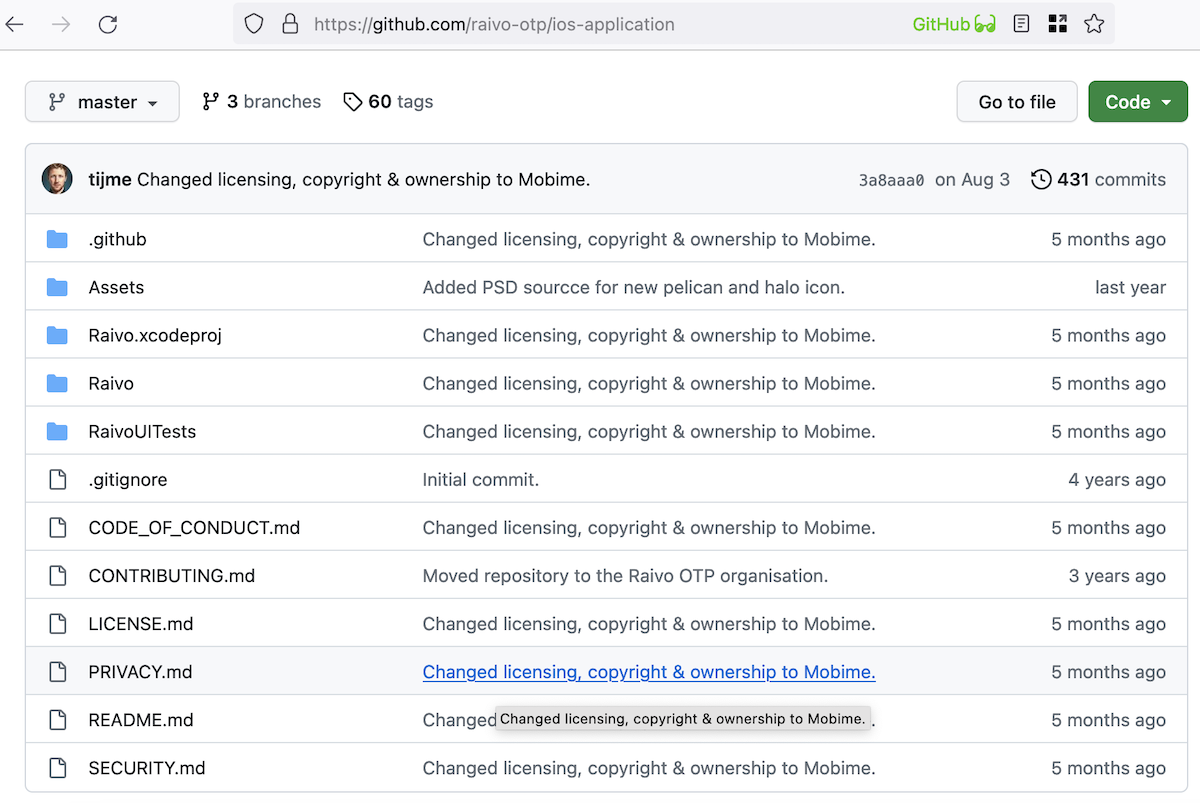

The solution to this, according to Adobe, is training Firefly models exclusively on content from Adobe Stock. Adobe also mentioned that it’s exploring a compensation model for Stock contributors who don’t mind monetizing their talent. Creators who wish to can opt out of training by attaching a “do not train” tag to their work. Adobe promises to offer artists more control over their work.

Copyright Issues

As the company continues to work on future models, Adobe customers worry about whether they will own the rights to Firefly-generated artwork. The copyright status of such artwork is currently unclear, and Costin agrees. With the copyright questions still open, Firefly leaves something to be desired.

Costin says the company is ready for the challenge. He added that future Firefly models will work with a variety of assets, tech, and training data from Adobe and others, and that Adobe is working on designing generative AI that lets creators benefit from their skill and creativity.
