Microsoft Turing Image Super Resolution promises to do away with low-res images everywhere

Did you ever have to look at or work with bad quality images? Maybe an image from an old camera that is low resolution, a badly taken photo of an eBay auction item, or a post on a forum that showed only the thumbnails but not the full images? There is often little that you can do to improve the quality of such images. While you may be able to find a better version, e.g., by running reverse image searches, there is no guarantee of that.

Microsoft believes it has the answer to that. Turing Image Super Resolution uses AI to enhance images. The technology is already used on Bing Maps and is currently rolling out to some Microsoft Edge Canary users, and Microsoft believes that it will do away with low-quality and low-resolution images everywhere in the future.

> The ultimate mission for the Turing Super-Resolution effort is to turn any application where people view, consume or create media into an “HD” experience. We are closely working with key teams across Microsoft to explore how to achieve that vision in more places and on more devices.

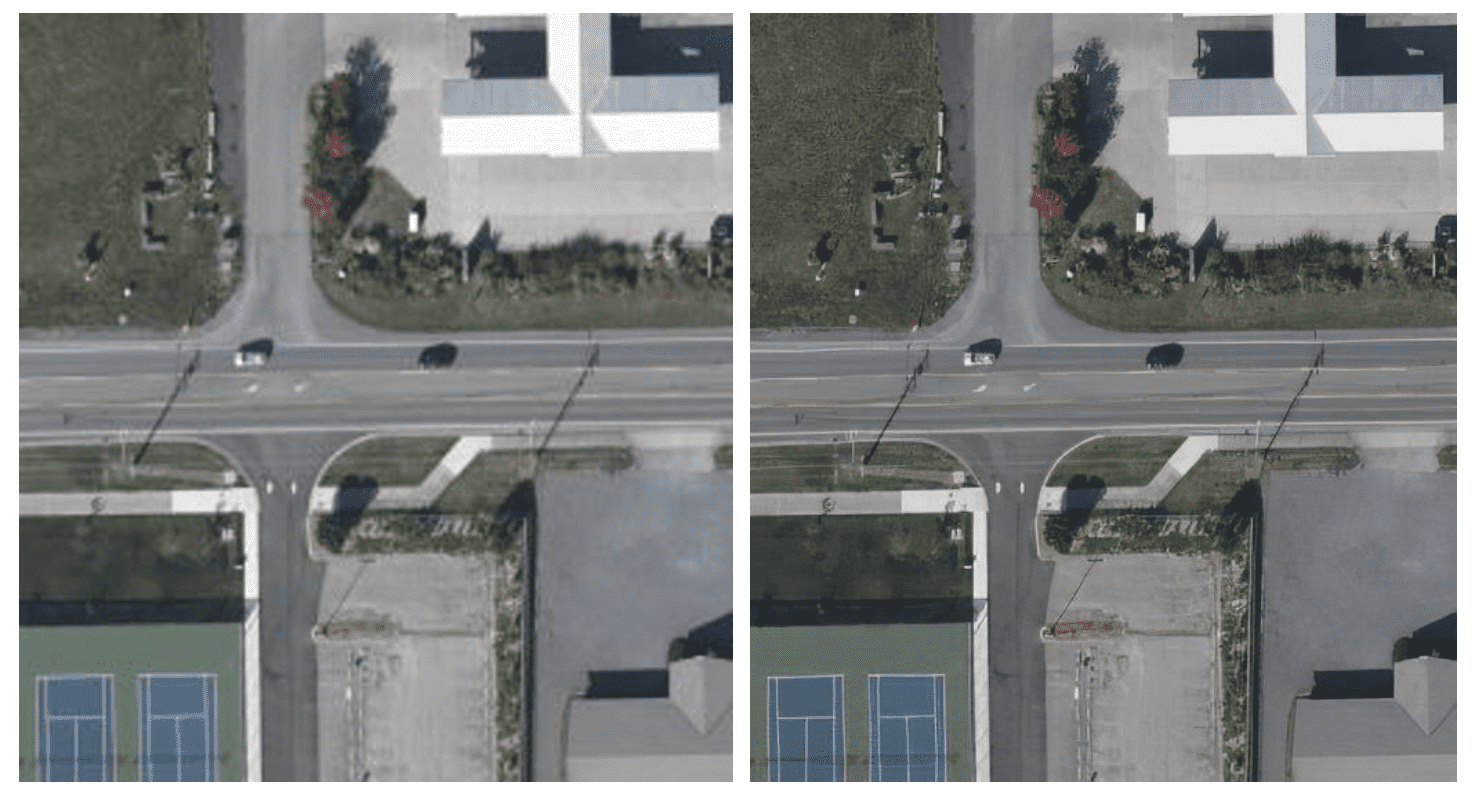

Microsoft published a blog post on the official Microsoft Bing blog in which the company explains the technology. Several before-and-after photos are provided to highlight the changes that Turing-ISR made to the original photos. The thumbnails that Microsoft posted are of low quality, so you need to open the images or save them to the local system to compare the full-resolution versions against each other.

When you do, you may notice that Turing Image Super Resolution performs different operations on the source images. Besides increasing their resolution, it may also improve their clarity or enhance them in other ways.

Microsoft already uses the new technology in Bing Maps' aerial imagery feature. Microsoft states that it has rolled out the functionality to "most of the world's land area", and that 98% of side-by-side test users preferred the enhanced imagery over the originals.

Some Microsoft Edge Canary users are already seeing image enhancements in the browser. Microsoft does not provide details on the implementation in Edge at this time, but explains that it is using content distribution networks for enhanced images to avoid having to process images repeatedly.
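Microsoft has not said how this works in Edge. As a rough sketch of the general idea only, assuming the enhanced result is stored under a hash of the original image bytes, the "process once, serve many times" pattern could look like this in Python. `enhance` and `EnhancedImageCache` are hypothetical names, and a plain dictionary stands in for the CDN:

```python
"""Hypothetical sketch: cache enhanced images so each one is processed only once.

Not Microsoft's implementation; `enhance` is a placeholder for the expensive
super-resolution step and the dict is a stand-in for a CDN.
"""
import hashlib


def enhance(image_bytes: bytes) -> bytes:
    """Hypothetical stand-in for the expensive model inference step."""
    return image_bytes  # a real model would return upscaled image data


class EnhancedImageCache:
    """Maps a SHA-256 hash of the original bytes to the enhanced result."""

    def __init__(self, enhancer=enhance):
        self._store = {}
        self._enhancer = enhancer
        self.model_runs = 0  # how often the expensive step actually ran

    def get(self, image_bytes: bytes) -> bytes:
        key = hashlib.sha256(image_bytes).hexdigest()
        if key not in self._store:
            # Cache miss: run the model once and keep the result.
            self.model_runs += 1
            self._store[key] = self._enhancer(image_bytes)
        return self._store[key]


cache = EnhancedImageCache()
for _ in range(3):
    cache.get(b"same thumbnail bytes")  # only the first call runs the model
print(cache.model_runs)  # 1
```

Keying on the content hash rather than the URL means the same image served from different addresses would only be enhanced once; whether Microsoft does it that way is not known.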

The company's goal is to turn "Microsoft Edge into the best browser for viewing images on the web" according to the blog post.

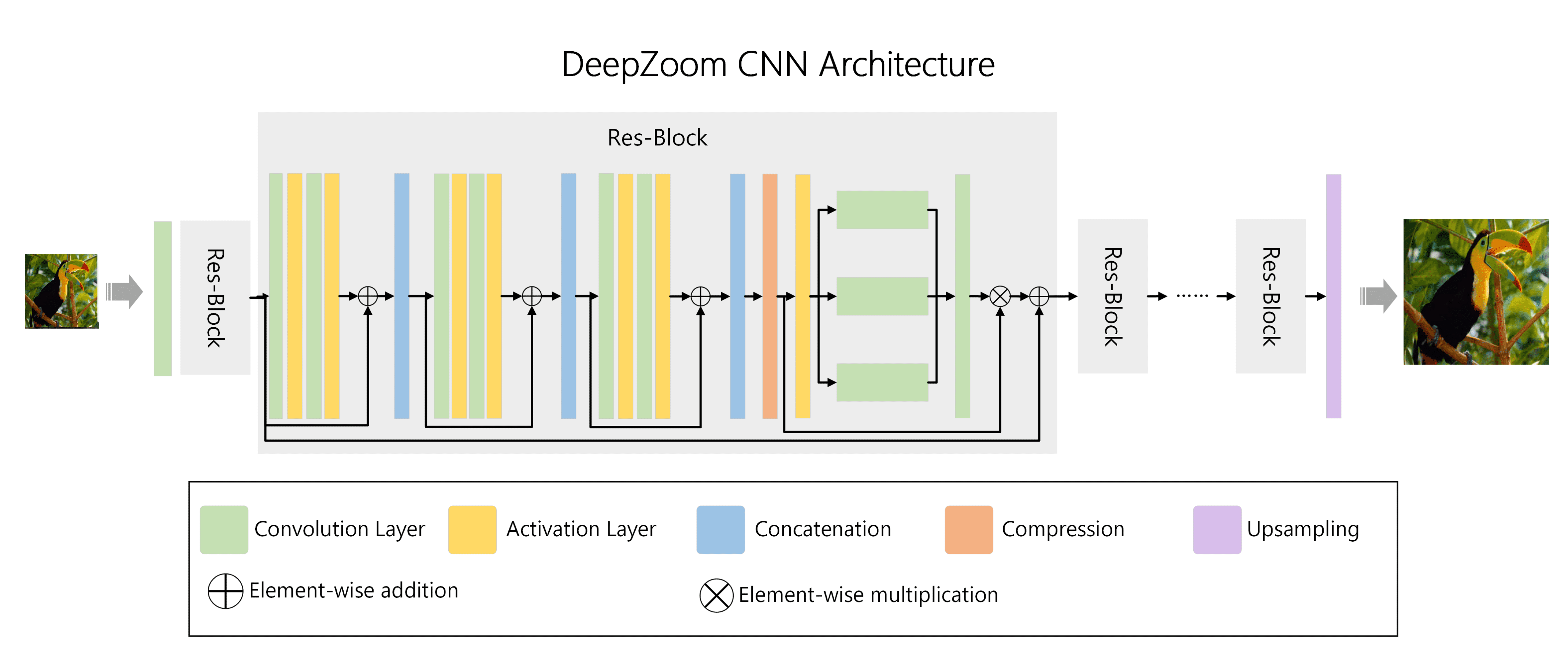

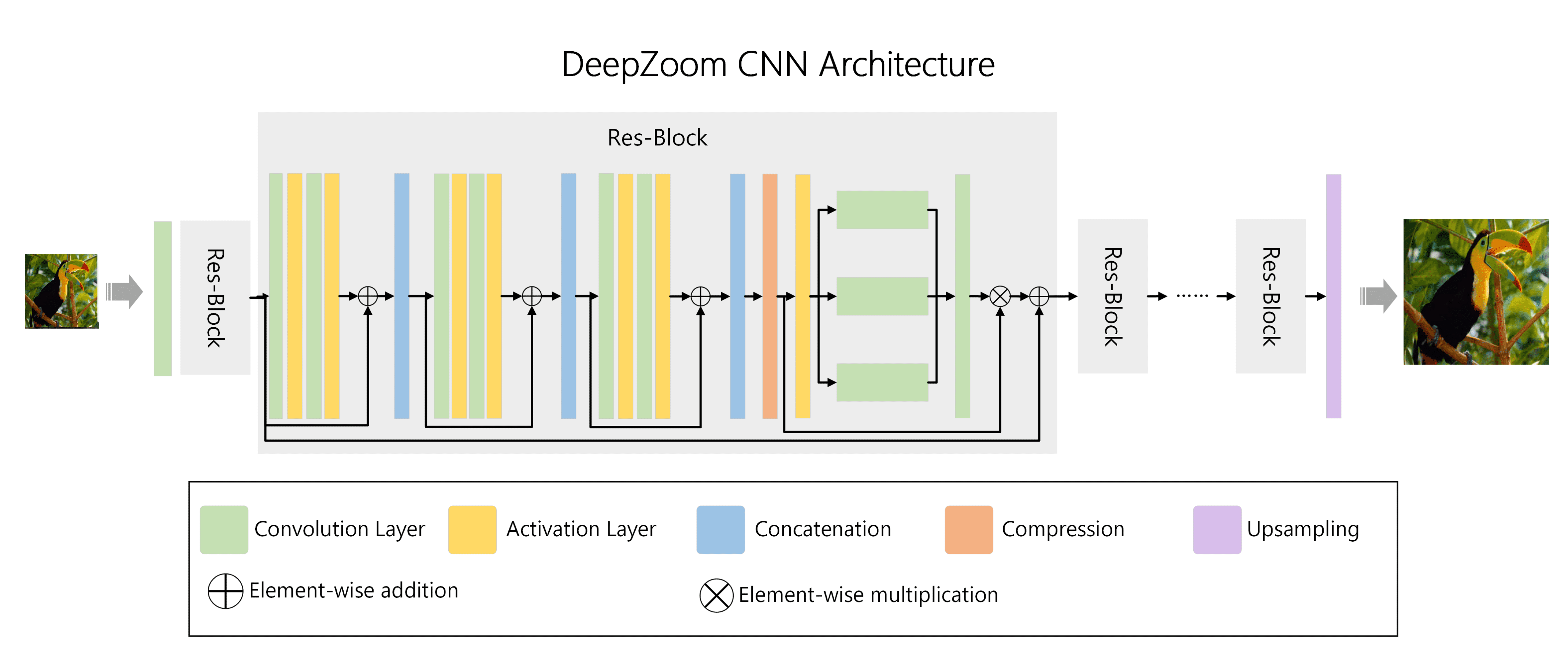

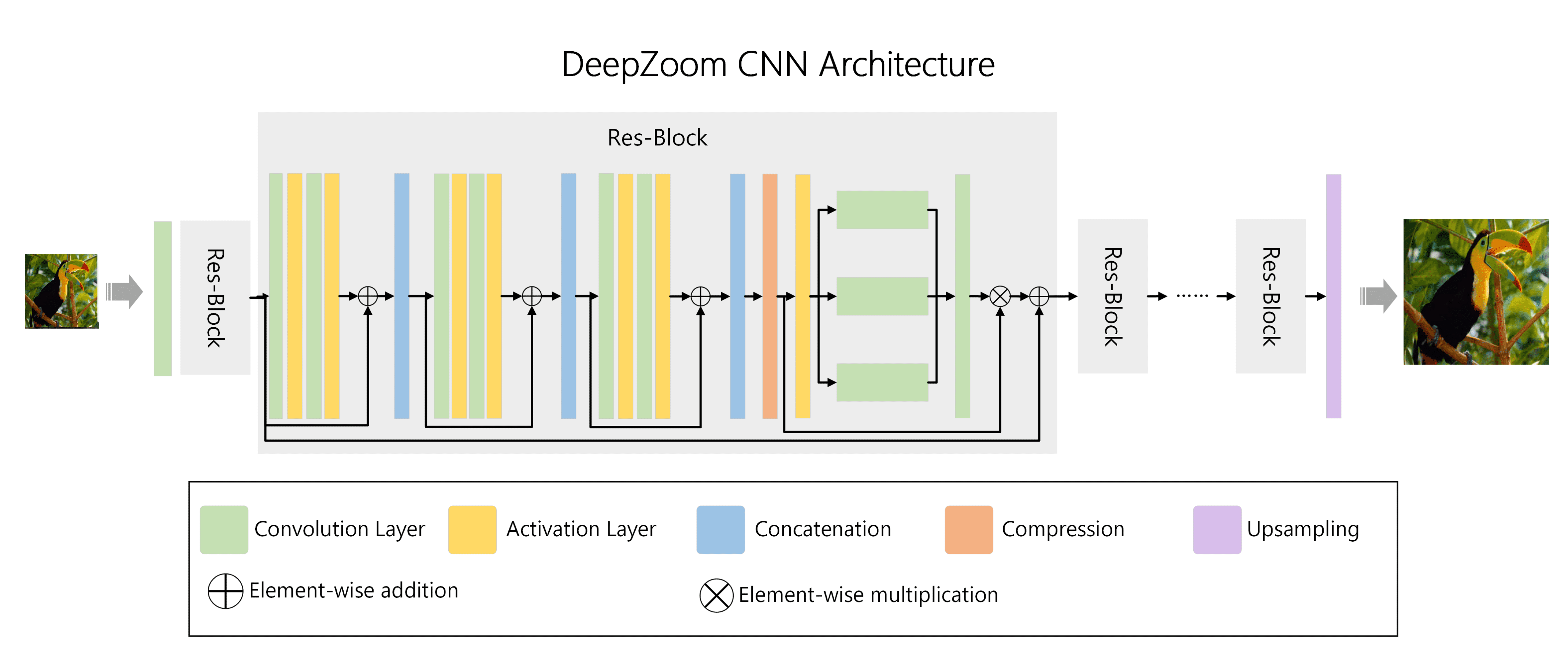

The technical part of the blog post provides details on the model, including how it cleans, enhances and scales images. According to Microsoft, the improvements work on all kinds of images, including images with text.

Closing Words

Better quality for low-quality images appears to be something that most Internet users would welcome. The implementation matters, though: is the functionality enabled all the time? what about on/off switches or exceptions? what about telemetry and connections to Microsoft-controlled CDNs whenever these images are displayed in the browser?

Now You: do you find AI image enhancements on the Web and elsewhere useful?

Yes, kids, this is how we do it. We blur a gazillion pictures and present them to you. Then, in our next brilliant move, we show you the original sharp ones, if you use our products, which all bring us ad revenue every time anyone uses them. You know that scam where someone acts very ill and his/her fake doctor appears with a bottle filled with magical medicine? This is that.

And the "before" image is hardly blurry to begin with; any less blurry would be impossible. Try it with real-world shitty, crappy images, MS, let's see your AI model handle that.

input: https://files.catbox.moe/dhqxw1.jpg [0,2 MiB]

output: https://files.catbox.moe/f52i3b.png [6,3 MiB]

input: https://files.catbox.moe/vldahi.png [3,4 MiB]

output: https://files.catbox.moe/p08d84.png [36,2 MiB]

Image reconstruction has come a long way :^) Quite impressive imo. The first one was done with Topaz Gigapixel (paid software). The second was done with waifu2x-caffe (FOSS, requires CUDA). Gigapixel is better with real-life pictures, whereas waifu2x is better with flatter/CG stuff.

What are those links? I got ERR_HTTP2_PROTOCOL_ERROR on all of them


Every Chromium-based browser out there renders pages blurry. Most people don’t see it because they’ve never tried anything else to compare it to.

Just snap Chrome/Edge/Brave to one side of your desktop and FF to the other, and you’ll see the difference. FF is crystal clear, whereas the others are blurred. It’s most obvious in downsized images, logos and thumbnails.

So MS is doing what exactly? Fixing the existing problem for Google and calling it “AI”?

Many top websites perform badly on Firefox. That is probably a result of both websites being tested only on Chrome and, maybe, Chrome being somewhat superior in this respect.

But I’ve always wondered why webpages feel uglier on Chrome; now I know.

And when it comes to using addons, Firefox easily beats Chrome because Chrome doesn’t let addons work properly so that Google can effectively track users.

Firefox is a plain-old web browser, whereas Chrome is primarily software (with a built-in tracker) for using Google services; its ability to browse the web is an added advantage to attract and keep its user base. But it also enables Google to stop browsers like Firefox from depriving Google of the tracking data it gets from millions of other websites.

Firefox is indeed sharper, but only when the image being displayed is shown at a smaller size.

Font rendering and images at full size are displayed the same. This is a difference in the resampling algorithm.

“but only when the image being displayed is shown at a smaller size”

Which is 99.9% of the time.

Plus, there is a very minute contrast difference even in full-size images, but that’s within a tolerable limit.

See: http://entropymine.com/resamplescope/notes/browsers/
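The comments above put the difference down to the resampling algorithm used when images are shown at a smaller size. As a rough illustration only (the two filters below are textbook examples picked as assumptions, not what Firefox or Chromium actually use), here is a Python sketch that halves the width of a hard black-to-white edge with a narrow box filter and with a wider triangle ("tent") filter. The wider filter spreads the edge across more pixels, which is what reads as blur:

```python
"""Illustrative only: how the choice of downsampling filter affects sharpness.

The two filters are textbook examples chosen for illustration; they are not
taken from Firefox or Chromium source code.
"""

# Weights applied to input samples at offsets -1, 0, +1, +2 around
# position 2*i when producing output sample i (2x downsampling).
BOX = (0.0, 0.5, 0.5, 0.0)           # averages each pair: narrow, sharp
TENT = (0.125, 0.375, 0.375, 0.125)  # triangle filter: wider, softer


def downsample_2x(signal, weights):
    """Halve a 1D row of pixels with a 4-tap filter, clamping at the edges."""
    last = len(signal) - 1
    out = []
    for i in range(len(signal) // 2):
        acc = 0.0
        for offset, w in zip((-1, 0, 1, 2), weights):
            j = min(max(2 * i + offset, 0), last)  # clamp index to valid range
            acc += w * signal[j]
        out.append(acc)
    return out


edge = [0] * 8 + [255] * 8  # a hard black-to-white edge, 16 pixels wide

print(downsample_2x(edge, BOX))
# [0.0, 0.0, 0.0, 0.0, 255.0, 255.0, 255.0, 255.0]  (still a single hard step)
print(downsample_2x(edge, TENT))
# [0.0, 0.0, 0.0, 31.875, 223.125, 255.0, 255.0, 255.0]  (grey pixels at the edge)
```

Which filters the browsers actually use, and at which scale factors, is what the resamplescope notes try to measure; the sketch only shows why the choice matters for thumbnails and other downsized images.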

I was about to call blatant BS, but:

[https://abload.de/img/firefoxl9ksi.png]

[https://abload.de/img/chromium25k8n.png]

What are they doing? Even the fonts look bad on Chromium.

Addendum: these were created from PNG screenshots, then cropped in IrfanView, enlarged by 500% and saved again as PNG to make the difference visible to visually impaired people.

And yes, this is the right side of the AI image in both screenshots comparing the browsers.

“Some Microsoft Edge Canary users are already seeing image enhancements in the browser”

As I said in another comment, don’t believe everything Microsoft says on their blogs; this has been available in the Canary version for months:

https://winaero.com/microsoft-edge-can-now-enhance-and-sharpen-images/


“what about on/off switches or exceptions?”

There is a toggle to disable this feature in Settings > Privacy, search, and services > Enhance images in Microsoft Edge. That toggle is already available for some users in the stable version.

Amazing quality. I wonder if this AI could be applied to W11 to rebuild such a crap OS. :]

I think they did; it’s just that the parameters used were “money”, “integration with every other microsoft product that exists in any employee’s brain”, “money”, “copy macos”, “ad money”

It creates details that look good but are not the same as in reality. It’s good for porn. It’s bad for maps.

“that it is using content distribution networks for enhanced images to avoid having to process images repeatedly.”

So they can only do this for images they own or have permission to modify, otherwise it would be illegal.

It’s good for porn

That’s all you had to say

@Shadowed

Some porn deserves to be blurry..