The Dangers of Using High Tech Facial Recognition Software to Catch Criminals

In the wake of rioting in London, England, the British police are turning to CCTV footage in an attempt to identify and capture the ringleaders. With advances in the high-tech facial recognition software used at airports to identify people at immigration, they say it should be possible to match faces against known criminals and troublemakers. But will it be that easy?

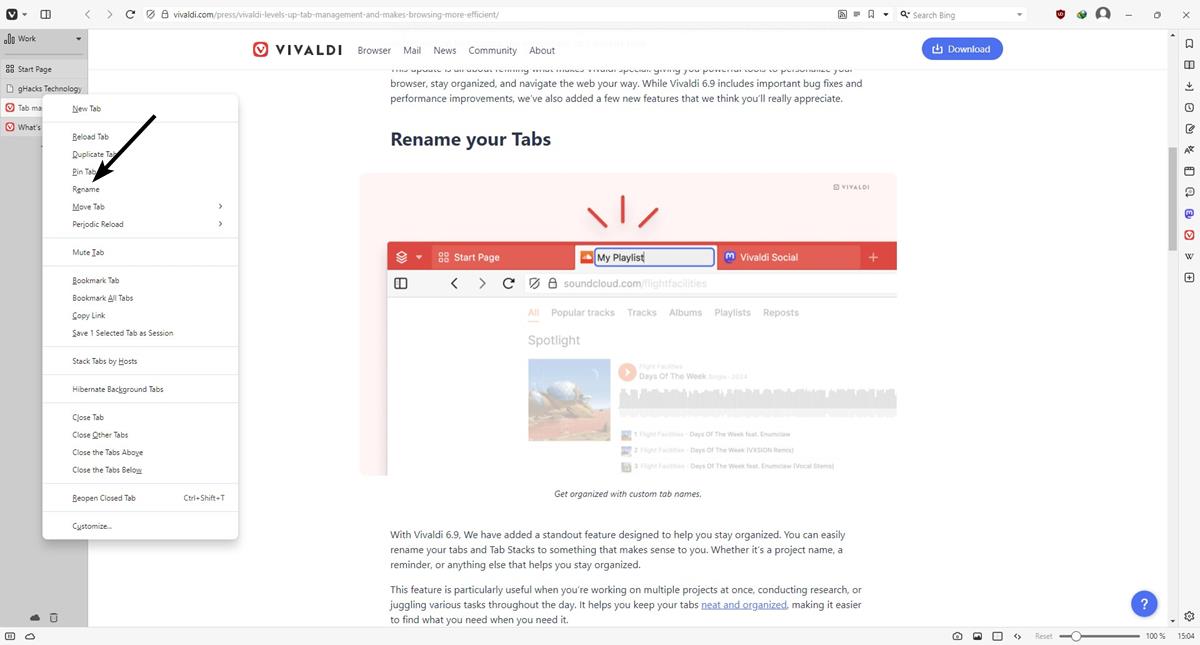

There are attempts, albeit private-sector ones, to use this software to match people against criminal records and known photographs of perpetrators, but it is not that simple. To do facial recognition you first need a database of confirmed photos with names attached. Software cannot simply look at a photograph and work out who it is; it must have something to compare the CCTV footage with.
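To make the point about needing a reference database concrete, here is a minimal sketch in Python. It assumes each face has already been reduced to a short list of numbers (a "feature vector") by some recognition software; the vectors, the names `gallery` and `identify`, and the 0.9 threshold are all made up for illustration and do not describe any system the police actually use.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def identify(probe, gallery, threshold=0.9):
    """Return the best-matching name in the gallery, or None.

    `gallery` maps a confirmed name to a reference feature vector.
    Without it there is nothing to compare the probe against.
    The threshold is an arbitrary illustrative value.
    """
    best_name, best_score = None, -1.0
    for name, reference in gallery.items():
        score = cosine_similarity(probe, reference)
        if score > best_score:
            best_name, best_score = name, score
    # Only report a match if the best candidate is similar enough.
    return best_name if best_score >= threshold else None

# Hypothetical database of confirmed photos, already converted to vectors.
gallery = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.2, 0.8, 0.5],
}

clear_photo = [0.88, 0.12, 0.31]   # close to person_a's reference
unknown_face = [0.6, 0.6, 0.0]     # not close to anyone on file

print(identify(clear_photo, gallery))
print(identify(unknown_face, gallery))
print(identify(clear_photo, {}))   # empty database: no match possible
```

With an empty database, or a face that is not on file, the only honest answer the software can give is "no match".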

The other issue is the quality of the photograph you are trying to match. There is a huge difference between a photo of someone staring straight at a camera against a plain background and one that is slightly blurry and possibly taken from a significant distance, on top of which many rioters in London wore masks to cover parts of their faces. It turns out to be very difficult indeed to match these kinds of photos, and the technology seen in sci-fi movies, where pictures can be enhanced to extraordinary degrees, is not yet available. Unfortunately real life isn’t quite like that, so the poor-quality CCTV footage the police have may not be entirely reliable and may be open to interpretation. Certainly in a court of law, a fuzzy picture may not stand up on its own without other evidence to support it, such as eyewitnesses. So if we have to rely on eyewitnesses to get a conviction, we’re really back to square one.

At the moment, some images are beginning to appear on TV, on police websites, and even in shopping malls, where the police are appealing to the public to inform on anyone they recognize in the pictures. The problem is that if a person is misidentified in this manner, we don’t want vigilantes taking matters into their own hands and attacking innocent people just because they resemble someone involved in the riots.

There is also the issue of data security. Social media provides an excellent source of photographs, since people have uploaded many hundreds of images of themselves. It may not be so difficult to find a match, especially if you take into account a company like Facebook, which has 750 million users. But it may be the case that the police are simply not allowed to use this information because of privacy concerns. For one thing, if the technology can be used to catch criminals, it could certainly also be used for criminal activity, so there is a danger that the information could be abused in the future.

I think I’ll start using wanted pictures as my Facebook profile pics and see what happens.

Google have our photos, they have facial recognition software and they know where we are and where we’ve been from our Android phones. Job done.

‘Police and experts’? Yeah – right! This is the UK, where cheap-pinch, and often hugely corrupt, policing is now so much part of life that it’s unquestionably a contributory cause of the current unrest (which has now spread a long way outside London).

No matter what the forensic technique, the end result can never be more than the evidence in court of someone who is employed by the police and knows full well which side his bread is buttered on.

I’m not condoning the violence, but anyone wanting a more honest and measured picture than that shown in government-acquiescent TV news or knee-jerk political outbursts might care to look at:

http://blogs.telegraph.co.uk/news/peteroborne/100100708/the-moral-decay-of-our-society-is-as-bad-at-the-top-as-the-bottom/#disqus_thread

I do not really see the dangers explained well enough in the article. Sure, it can be problematic if software identifies someone falsely, but that’s where police and experts come into play to determine the correctness of the identification. It is not as if the software alone is used to determine that.