Watch out for these ChatGPT scams

ChatGPT scams are, unfortunately, on the rise. In a world driven by technological advancements, artificial intelligence has emerged as a groundbreaking innovation, transforming how we live, work, and interact. Among the numerous AI breakthroughs, language models like ChatGPT have opened up a realm of possibilities in natural language processing. While these models have been predominantly utilized for positive purposes such as aiding businesses, enhancing customer support, and bolstering creativity, there exists a darker side to their potential.

As with any powerful tool, there will always be those who seek to exploit it for nefarious purposes, and ChatGPT is no exception. The rise of scammers and malicious actors capitalizing on the AI revolution to deceive and manipulate unsuspecting individuals is a concerning phenomenon that demands our attention. In this blog post, we delve into the underbelly of how people are exploiting ChatGPT and other AI language models for scamming, shedding light on the deceptive tactics they employ, the consequences faced by victims, and the ongoing efforts to combat such abuse.

It is crucial to recognize that while AI offers immense promise for positive change, it also presents unique challenges and ethical considerations that must be addressed to ensure a safe and trustworthy digital environment.

Join us as we explore the dark side of ChatGPT and gain insights into the measures being taken to strike a balance between innovation and safeguarding users from malicious AI misuse.

Popular ChatGPT scams

Scams and fraud have evolved significantly with the arrival of AI, particularly language models like ChatGPT. These are the most common tactics:

ChatGPT-generated email scam

Email has long been used to spread malware, extort victims, and steal sensitive information, earning it a bad reputation as a scamming medium. Email scammers are now using ChatGPT to trick unsuspecting recipients.

A number of news organizations raised alarms about an increase in ChatGPT-created phishing emails in April 2023. Criminals increasingly use chatbots to craft malicious phishing emails because of their capacity to produce material on demand.

Let's say a cybercriminal wants to commit crimes against people who speak English but isn't fluent in the language. They may create flawless phishing emails free of typos and grammatical mistakes with the help of ChatGPT. These expertly crafted messages are likelier to fool their targets because of the air of legitimacy they exude.

Basically, fraudsters might save time and effort by employing ChatGPT when creating phishing emails, which could lead to an increase in the frequency of phishing attacks.

Malicious ChatGPT browser extensions

While browser add-ons are incredibly useful, criminals can also exploit them as Trojan horses to install malware and steal sensitive information. This con works just as well with ChatGPT-branded add-ons as it does with any others.

Some trustworthy ChatGPT-specific add-ons (like Merlin and Improved ChatGPT) can be found in your browser's extension store, but not every add-on listed there is safe. In March of 2023, for instance, a fake ChatGPT plugin known as "Chat GPT for Google" spread quickly across many mobile platforms. As it went viral, this malicious plugin stole information from thousands of Facebook users.

The extension's name was chosen deliberately to cause misunderstanding, as it sounds very similar to the legitimate ChatGPT for Google service. Several people installed the add-on without verifying its authenticity, believing it was secure. The add-on was, in reality, a covert channel for planting backdoors in Facebook accounts and gaining illegal admin access.

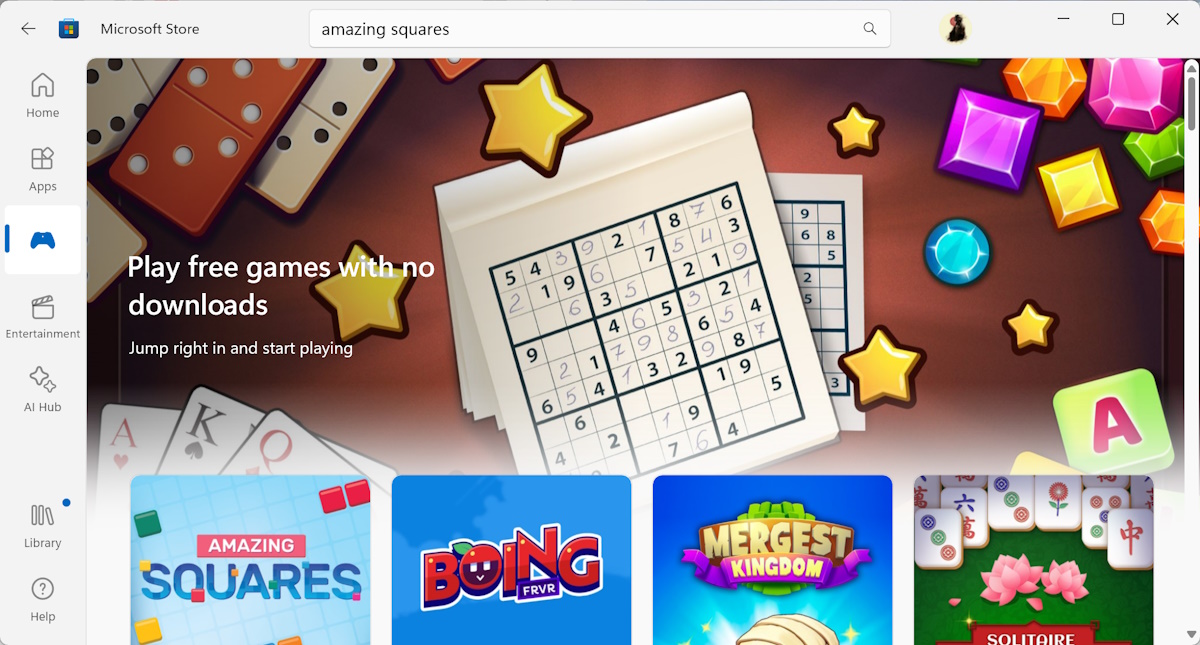
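To illustrate how small the difference between the two names is, here is a minimal Python sketch. It assumes you keep your own list of extension names you have already verified; the `KNOWN_GOOD` set and the `looks_like_impersonation` function are hypothetical names made up for this example, not part of any browser or store API.

```python
import re

# Assumption: names of extensions you have already verified yourself.
KNOWN_GOOD = {"ChatGPT for Google"}


def _normalize(name: str) -> str:
    """Drop spaces, hyphens, and underscores, then ignore letter case."""
    return re.sub(r"[\s\-_]+", "", name).casefold()


def looks_like_impersonation(name: str) -> bool:
    """Return True if `name` is not an exact known name but collapses to one."""
    if name in KNOWN_GOOD:
        return False
    return _normalize(name) in {_normalize(known) for known in KNOWN_GOOD}


if __name__ == "__main__":
    for candidate in ("ChatGPT for Google", "Chat GPT for Google"):
        print(candidate, "->", looks_like_impersonation(candidate))
```

An exact name match does not prove an add-on is legitimate, since a scammer can copy a name outright. Treat a check like this as one warning sign and still confirm the publisher and reviews before installing.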

Fake third-party ChatGPT apps

Cybercriminals frequently disguise their malicious software as legitimate-looking downloads carrying trusted brand names like ChatGPT. The idea is not new: such programs have long been used to spread malware, steal information, and spy on users. They are now riding ChatGPT's popularity to spread themselves.

In February 2023, it was discovered that attackers had created a fake ChatGPT program that could spread malware on Windows and Android devices. Bleeping Computer reported that malicious actors used the lure of OpenAI's ChatGPT Plus, promising a free version of the normally paid service, to deceive users into downloading the program. These cybercriminals' true goal is to steal passwords or install malicious software.

To avoid downloading dangerous software, research a program's history before installing it. If an app's security can't be verified, don't install it, no matter how enticing it may look. Download apps only from reliable app stores and always check user reviews beforehand.

Malware generated by ChatGPT

Concerns that AI may make it easier for criminals to commit scams and attacks on the internet have generated a lot of discussion about the intersection of AI and cybercrime in recent years.

Since ChatGPT can be used to create malware, these worries are not unwarranted. People began writing malicious code with this widely used tool shortly after its release. In early 2023, a post on a hacker site referenced an outbreak of Python-based malware that was reportedly written using ChatGPT.

The malware wasn't particularly sophisticated, and no extremely harmful malware, such as ransomware, has been identified as a ChatGPT product as of yet. Still, the ability of ChatGPT to craft even simplistic malware opens a door for those who wish to enter cybercrime but lack significant technical expertise. This new capability could become a major problem in the not-too-distant future.

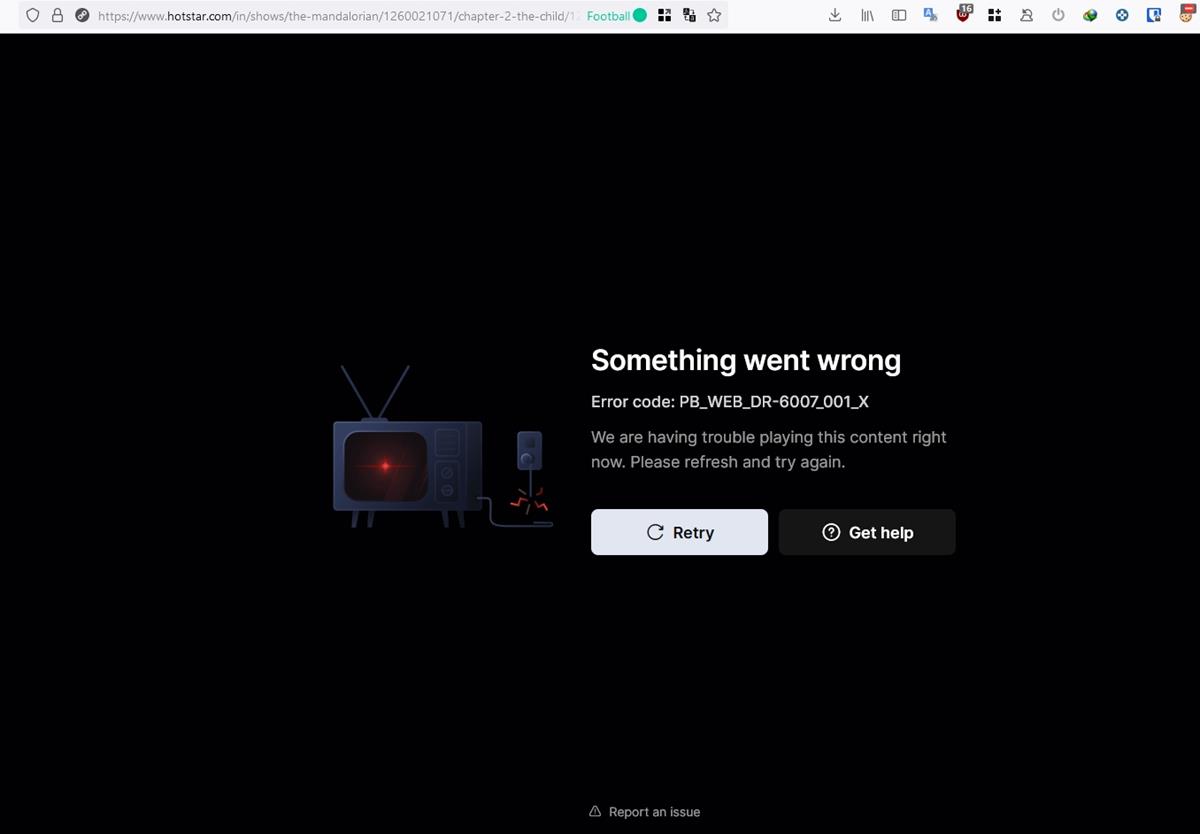

Phishing sites

Phishing attacks frequently take the shape of fake websites designed to steal sensitive information, and ChatGPT users are a target. Let's say you've found what appears to be the real ChatGPT homepage. To create an account, you are asked for your name, email address, and other information. If the website is malicious, the information you enter may be stolen and used in dangerous ways.

Alternatively, someone posing as a ChatGPT employee may email you and say that your account has to be verified. To complete the purported verification, the email directs you to a website.

The trick with this ChatGPT scam is that the linked page captures whatever information you provide, including your password. With those credentials, hackers can take over your ChatGPT account and access your private prompt history, account details, and other data. Knowing how to spot phishing schemes is crucial for protecting yourself from cybercriminals.
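One practical way to spot a phishing link is to look at the actual hostname rather than the text of the link. The following Python sketch shows the idea under stated assumptions: the `OFFICIAL_DOMAINS` allowlist and the `is_official_link` function are illustrative names chosen for this example, and you should confirm the current official domains yourself rather than rely on this list.

```python
from urllib.parse import urlsplit

# Assumption: an illustrative allowlist; verify the real domains yourself.
OFFICIAL_DOMAINS = {"openai.com", "chatgpt.com"}


def is_official_link(url: str) -> bool:
    """Return True only for HTTPS links whose host is an allowed domain
    or a subdomain of one."""
    try:
        parts = urlsplit(url)
    except ValueError:
        # Malformed URLs are treated as untrusted.
        return False
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower().rstrip(".")
    return any(
        host == domain or host.endswith("." + domain)
        for domain in OFFICIAL_DOMAINS
    )


if __name__ == "__main__":
    for link in (
        "https://chat.openai.com/auth/login",
        "https://openai.com.account-verify.example/login",
        "https://chatgpt.com@evil.example/",
    ):
        print(link, "->", is_official_link(link))
```

The second and third links contain an official-looking name, but the host the browser would actually contact is a different domain, so the check rejects them. A passing check only means the link points at an allowed host; it is not a guarantee that a message is genuine.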

The ChatGPT subscription scam

Unfortunately, ChatGPT is not immune to the scammers that plague other subscription-based platforms. Fake ChatGPT membership offers are becoming increasingly common as a method of fraud. You may come across a tempting online deal for a free or heavily discounted subscription to ChatGPT Plus.

The advertisement or link takes you to a page that looks like OpenAI's real homepage but is actually a fake. To get the "special deal," you must complete a form with your name, address, and credit card information. As soon as you do, malicious actors will have access to your private information.

Fake social media messages

Social networking sites host plenty of ChatGPT scams as well. Fake accounts may contact you through direct messages or reply to posts from the official ChatGPT account. They may send you a link to click on so that you can enter a sweepstakes, confirm your account information, or make changes to your profile.

Unfortunately, these messages are only a trap designed to steal your information or infect your device with malware. Be sure to verify the sender's identity before acting on any such message.
