Amazon blunder leaks Alexa data to other customer

It appears that the fears of privacy and data protection advocates with regard to voice-powered devices have come true, at least in one case in which Amazon leaked a customer's voice data to another customer.

What happened? According to German computer magazine CT (PDF), one of Amazon's German customers requested access to the data the company had stored about him. Amazon sent the customer a zip archive with the data, and the customer began to analyze it.

He noticed that the archive included about 1700 WAV files and a PDF document that contained Alexa transcripts. The customer did not own or use Alexa devices and concluded quickly, after playing some audio files, that the recordings were not his.

The customer contacted Amazon about the incident, but nothing came of it, so he contacted CT and provided the magazine with a sample of the files. The audio recordings revealed a great deal about the then-unknown Amazon customer, including where and how Alexa was used, as well as information about jobs, people, alarms, likes, home appliance controls, and transport inquiries.

CT built a profile of the user from the recordings and used it to identify him, his girlfriend, and some of his friends. CT contacted him, and he confirmed that his voice was on the recordings.

Amazon told the magazine that the leak "was an unfortunate mishap that was the result of human error". Amazon did contact both customers after CT contacted the company.

Privacy issue

Amazon stores Alexa voice data indefinitely in the cloud. The company does so to "improve its services". The data may be used to identify owners of Alexa devices as well as other people who are mentioned in recordings or audible while recordings take place. While it depends on how Alexa devices are used, it is clear that the recordings contain private information that most, if not all, customers would be very uncomfortable seeing leaked to others.

Most owners of voice-controlled devices are probably unaware of, or indifferent to, the fact that their data is stored in the cloud indefinitely.

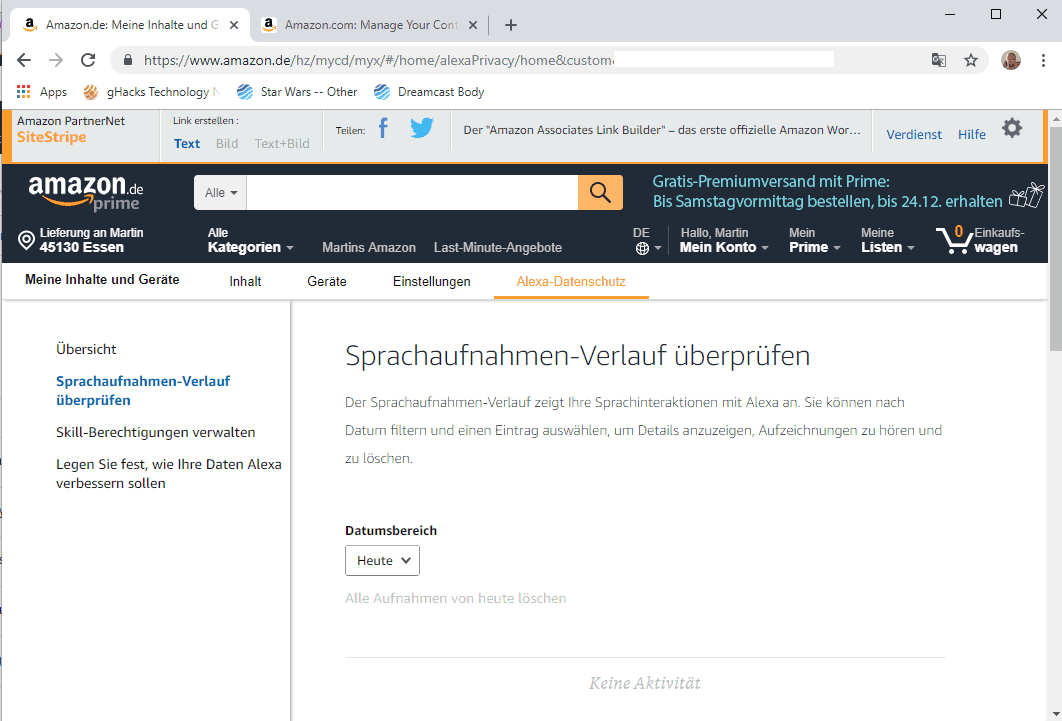

Amazon customers may delete voice recordings that Amazon has stored in the cloud on https://amazon.de/alexaprivacy/. I was not able to access the functionality on the main Amazon website, https://amazon.com/alexaprivacy/, as it redirected the request automatically.

The German page is accessible and provides options to delete recordings that Amazon has on file. There is no option, however, to block Amazon from storing recordings in the first place. It is unclear if the page works only for German customers or all Amazon customers.

Closing Words

Companies need to take human errors into account when it comes to privacy leaks and violations. The Amazon case demonstrates that leaks may happen for numerous reasons including successful hacking attempts, software error, or human error.

Now You: Do you use voice controlled devices?

This is a CIA tool, and is only in the experimental stages…

Great news! The French ISP Free is now embedding Alexa in its routers for customers' homes!

The CIA will soon be retiring all its agents from the ground as soon as Alexa is at your home; they don’t need anything else out there…

Since Alexa is intended to connect even with your toilet, the amount of data collected on these unsuspecting customers is astonishing.

Some people wanted addictive technology so bad, and now you are paying a heavy price. Cut the complaining, all you addicted people, especially when your personal data will ALWAYS be at risk no matter what you think or do. Technology, they claimed, was supposed to make life so much easier, yet you can hardly find anyone NOT on a cell phone or other device. You want to be in the know, so why can’t the companies be in the know? lol

The next version will have multiple cameras with infra-red capabilities (in case you turn out the lights and it can’t see what you’re doing using the ordinary light spectrum). It will hover in the air and follow you around from room to room, so it’s always there when you need it. Since you may accidentally close a door and shut it out of the bedroom or bathroom, it will have laser microphones to pick up sound vibrations on the door or wall. If this happens more than once, it will discreetly embed tiny remote mikes and cameras in the room in question, periodically popping back in to recharge their tiny batteries. That way, there will be no need to open the door if you need it.

It will be a whole new era of convenience and efficiency! And let’s not forget national security! If you say anything that could be construed as unpatriotic, or frown while listening to patriotic news, it can have a local fusion-center SWAT team breaking down your front door within minutes!

Speaking of which, the actor who voiced HAL 9000 in the two “Space Odyssey” films, Canadian actor Douglas Rain, passed away five weeks ago. Let’s all take a moment to remember the man whose voice warned us of the dangers posed by Alexa and its ilk … 50 years ago.

PS: Whenever I see a tech company saying something like, “Your privacy is our foremost concern,” I wonder what kind of suckers they take us for. Unfortunately, the answer is “pretty big suckers” and it’s largely accurate. (I still use Google, YouTube, and Gmail…)

I read in the Guardian this morning about an Amazon Echo device telling its owner to “kill your foster parents”: https://www.theguardian.com/technology/2018/dec/21/alexa-amazon-echo-kill-your-foster-parents

Personally though, I don’t use any voice activated devices at all. Bit too creepy for my liking.

It has begun.

All these years I thought Google would be the one to bring Skynet to reality. Guess I was wrong :)

Oh, c’mon, be nice! People who scream at cylinders don’t have any friends. They scream at small metallic slabs with little pictures in them, too.

My best friend is a sexy black cylinder named Alexa. OMG!

Tech’s getting better and better at generating more and more junque every day. Those mountains of discarded cell phones…

Not for the first time.

Woman says her Amazon device recorded private conversation, sent it out to random contact

A Portland family contacted Amazon to investigate after they say a private conversation in their home was recorded by Amazon’s Alexa — the voice-controlled smart speaker — and that the recorded audio was sent to the phone of a random person in Seattle, who was in the family’s contact list.

“My husband and I would joke and say I’d bet these devices are listening to what we’re saying,” said Danielle, who did not want us to use her last name…

Amazon’s response:

“Amazon takes privacy very seriously. We investigated what happened and determined this was an extremely rare occurrence. We are taking steps to avoid this from happening in the future.”

https://www.kiro7.com/news/local/woman-says-her-amazon-device-recorded-private-conversation-sent-it-out-to-random-contact/755507974

My personal afterwit here is that Amazon apologized to the victim by handing over more data mining crappy hardware like an Echo Dot, an Echo Spot, and a Prime membership. LOL

Honestly, my sympathy for the victim here is very limited, as everyone using this kind of spying device knew about the risks, unlike their visitors. There should be a sticker placed outside the main door warning visitors of hazardous privacy-stealing devices monitoring the rooms!

Or, visitors can order 1 ton of ice cream to be delivered when they enter a new house, to check if someone’s listening…

As we know from the feedback of many Facebook, Amazon, and Google customers and users: no one has something to hide and everyone has nothing to hide, hence the billions of Facebook, Google search, and Android users.

I will never comprehend why people use personal devices that are open to the internet. This includes open ports that are forwarded to devices such as disk drives or media servers. Alexa is another example of this. It couldn’t work without open ports, otherwise Amazon could not get files such as those mentioned above. My Android phone is as open as I care to get and I use it only as a phone, browser, media player, and a few other non-social activities.

For remote home access, I first fire up OpenVPN from wherever I am. Certificates are unique to the device and will only work for the named user, meaning each device will only work with a particular user. Once the door is securely open, I can securely access my network just as if I were at home in the living room. No ports are open, except for the non-standard ports OpenVPN is listening on, and OpenVPN protects those.

You say how bad it is they leaked some customers info. I say LOOK AT HOW MUCH DATA THEY GATHER ABOUT YOU!! Holy sh!t, this is insanity! Obsession! They are literally stalking you! O.O And I fail to see what you would get in return for owning such a device. Nothing! This device offers absolutely nothing of any use to a human being.

Disgusted is how I feel about these devices. And quite frankly, at the end of the day it is the consumers who are choosing to let themselves be abused by these companies. You kind of deserve it, sorry to say.

They are toys for the “I want that” crowd.

Official Statement:

Did you read the ToS?

It is not a bug. It is a feature.

“privacy” they say. i p1sS myself :D