Yes, defragmenting is still a useful thing

You may have recently read Samer's article "Ten software trends that are (or should be) dying" here on Ghacks, which listed disk defragmentation as one of the dying trends.

It is undeniable that the rise of Solid State Drives and better defragmentation support of operating systems have made the need to defragment disks or files less of an issue on many computer systems.

If you run platter-based drives, on the other hand, defragmentation is usually still useful, especially for heavily used files.

You may have noticed that some programs slow down over time, especially when loading, if they are used regularly and stored on conventional hard drives rather than Solid State Drives.

One reason for this is that one or more files required by the program have become heavily fragmented over time, usually because of many write operations that add data. Think of the database of an email client, feed reader or web browser, for instance. These tend to grow over time, and you may end up with a file that grew from a small size to hundreds of megabytes in the process.
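To see why a steadily growing file ends up in pieces, here is a toy model, not how any real file system allocates space: a hypothetical allocator that always hands out the next free cluster, with a database file and some other file appended to in turn. Every append to the database lands after the other file's last append, so each append becomes its own fragment.

```python
def simulate_interleaved_growth(appends_per_file, clusters_per_append=1):
    """Toy model: two files grow in alternating appends on an empty disk.

    The hypothetical allocator simply hands out the next free cluster,
    so appends to "database" and "other" end up interleaved on disk.
    Returns a dict mapping each file name to the clusters it owns.
    """
    next_free = 0
    layout = {"database": [], "other": []}
    for _ in range(appends_per_file):
        for name in ("database", "other"):
            end = next_free + clusters_per_append
            layout[name].extend(range(next_free, end))
            next_free = end
    return layout


def count_fragments(clusters):
    """Count runs of consecutive cluster numbers; a contiguous file has 1."""
    if not clusters:
        return 0
    ordered = sorted(clusters)
    fragments = 1
    for prev, cur in zip(ordered, ordered[1:]):
        if cur != prev + 1:
            fragments += 1
    return fragments


layout = simulate_interleaved_growth(appends_per_file=100)
print(count_fragments(layout["database"]))  # 100: one piece per append
print(count_fragments(list(range(100))))    # 1: the same size, defragmented
```

On a platter drive, every extra fragment can mean another head seek when the program loads the file, which is where the slowdown comes from.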

If you notice a deterioration in performance when opening programs or specific features of a program, you may want to check whether the files it loads are fragmented.

Checking for fragmentation

There are lots of programs out there that you can use to check a file's fragmentation status.

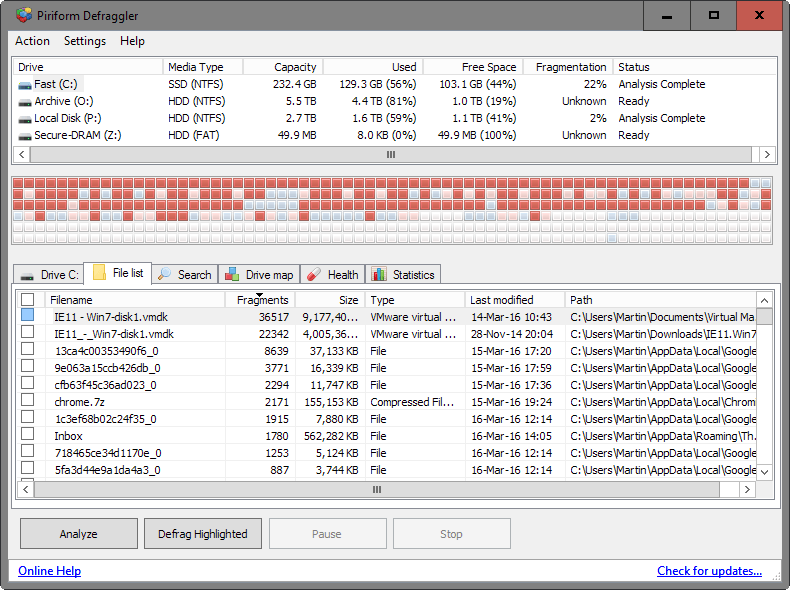

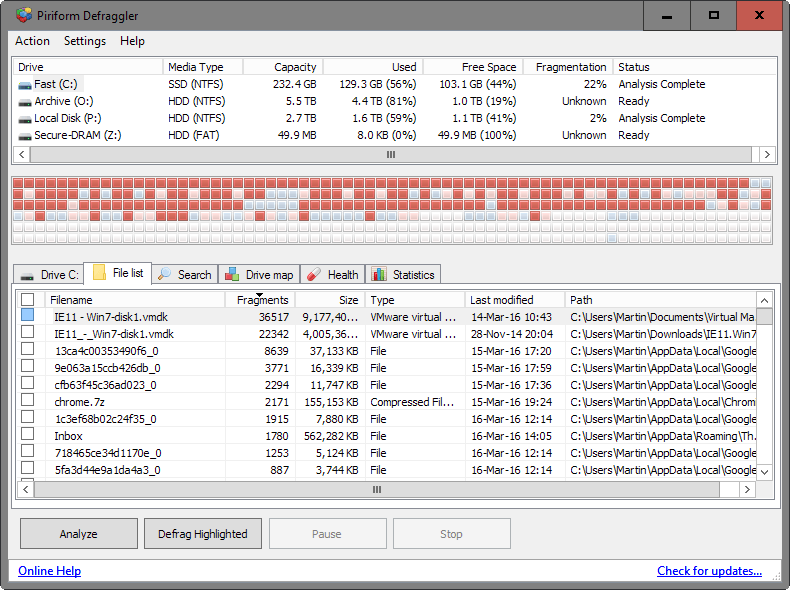

Defraggler from the makers of CCleaner is one of those programs. It is offered as a portable version that comes without third-party offers and can be run from any location right after download and unpacking.

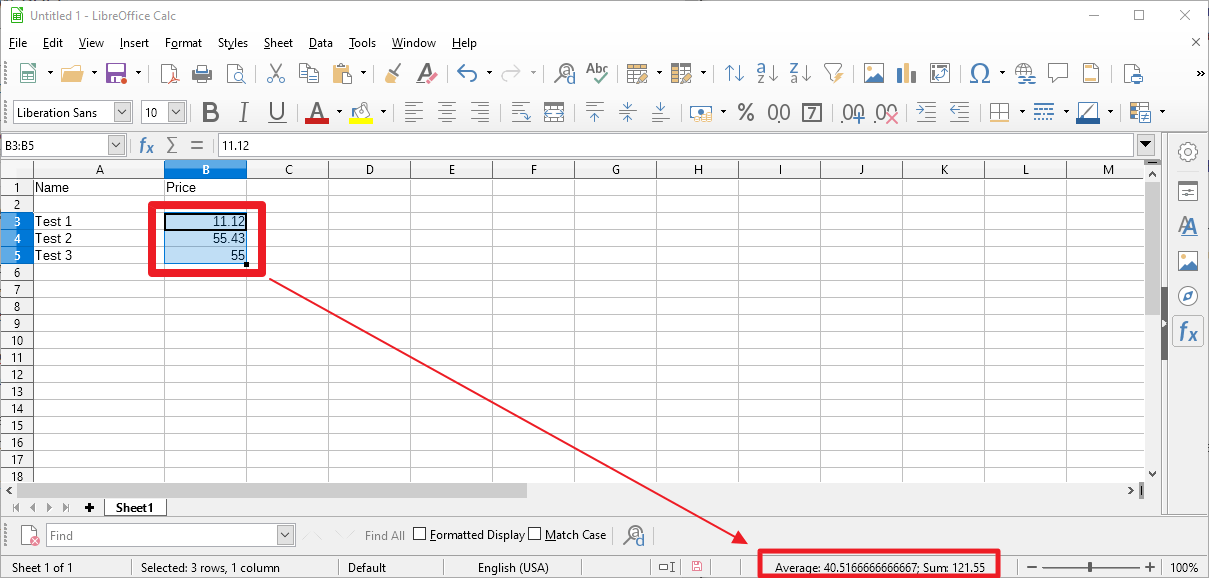

Defraggler displays all drives connected to the computer on start, and all you have to do is select the drive you want to analyze and hit the "analyze" button afterwards.

The process does not take long. When it has completed, switch to the "file list" tab to see the files that are most fragmented.

A click on the filename or path column sorts the data accordingly, which can be useful if you want to check the fragmentation of a specific file or of the files in a folder.

You can defragment one or multiple files right away with a right-click and the selection of "defrag highlighted" after highlighting one or more files.

If you spot an important file at the top of the listing, say a database file used by a program that slowed down over time, you may want to give it a try and defragment the file to see if doing so resolves the issue and improves the loading performance of the program in the process.

I burn large video files to DVDs and then delete them. Sometimes the burned discs have verification errors, and these persist after several attempts, after I try to burn another set of files, or when I use blank discs from another tumbler. Only after I defrag the HD containing the videos does the problem disappear.

In addition, before I used an SSD, I would experience a system slowdown after several weeks, even with the built-in defrag program running by default and regular clean-up maintenance. The system would speed up only after I did a boot defrag using a third-party program.

Finally, SSDs are still not cheap, so not only do I have to use HDs for data, I also have to install some programs on them.

Here Are a Few Tips for People Who May Have an SSD:

Introduction

Understanding your SSD can be confusing at first, but this little rundown on SSD hardware will get you more than started. We'll go over the interface, followed by Program-Erase (PE) cycles and NAND lifespan, and then show you how to preserve the lifespan of your SSD with a few simple OS tweaks.

SATA 3 (6G) Explained: SATA 3, or 6 Gbps, is the most recent revision of the SATA storage connector. It doubles the bandwidth of SATA 2 from 3 Gbit/s to 6 Gbit/s. For a regular HDD that is not really very important, but with the tremendous rise of fast SSDs it is a large plus. SATA 2 gives us 3000 Mbit/s / 8 = 375 MB/s of bandwidth, minus overhead, tolerances, error correction and random occurrences. SATA 3 doubles that: 6000 Mbit/s / 8 = 750 MB/s (again, deduct overhead, tolerances, error correction and random occurrences) of available bandwidth for your storage devices.
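The "divide by 8" arithmetic above is easy to reproduce. One overhead worth calling out: SATA uses 8b/10b line coding, so every data byte travels as 10 bits on the wire, and dividing by 10 gives a more realistic ceiling before protocol overhead. The function name below is just for illustration.

```python
def sata_mb_per_s(line_rate_gbit, count_8b10b=True):
    """Convert a SATA line rate in Gbit/s to a bandwidth ceiling in MB/s.

    With count_8b10b=False this is the simple "divide by 8" figure from
    the text. SATA uses 8b/10b line coding, so each data byte occupies
    10 bits on the wire; dividing by 10 gives the ceiling before any
    protocol overhead.
    """
    bits_per_byte = 10 if count_8b10b else 8
    return line_rate_gbit * 1000 / bits_per_byte


for name, rate in (("SATA 2", 3), ("SATA 3", 6)):
    print(f"{name}: {sata_mb_per_s(rate, count_8b10b=False):.0f} MB/s raw, "
          f"{sata_mb_per_s(rate):.0f} MB/s after 8b/10b")
```

This prints 375 and 300 MB/s for SATA 2, and 750 and 600 MB/s for SATA 3; real drives land somewhat below the second figure.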

As you can understand, with SSDs getting faster and faster, that's a much-welcomed increase in bandwidth. Put SATA III in RAID and you'll have even more wicked performance at hand. Most motherboards offer only two ports per controller, though, so you are (for now) limited to RAID 0 and RAID 1 (stripe or mirror). There are also two controllers in mainstream use: the native controllers on the Sandy Bridge, Ivy Bridge and Haswell platforms offer the highest-performing solution, while any platform using the Marvell 9128 or 9130, though still fast, will see lower performance scores, as the Marvell controllers connect to the system over a single PCI-Express Gen2 x1 lane, which restricts performance a little. The internal processor in that chipset also limits IOPS, by the way.

What Is A P/E Cycle? A program-erase cycle is a sequence of events in which data is written to a solid-state NAND flash memory cell (such as the type found in a so-called flash or thumb drive), then erased, and then rewritten. Program-Erase (PE) cycles can serve as a criterion for quantifying the endurance of a flash storage device. Flash memory devices are capable of a limited number of PE cycles because each cycle causes a small amount of physical damage to the medium. This damage accumulates over time, eventually rendering the device unusable. The number of PE cycles that a given device can sustain before problems become prohibitive varies with the type of technology.

The least reliable technologies are triple-level cell (TLC) and multi-level cell (MLC). Enterprise-grade MLC (E-MLC) offers an improvement over MLC, and the most reliable technology is single-level cell (SLC). The literature disagrees somewhat on the maximum number of PE cycles each type of technology can execute while maintaining satisfactory performance. For TLC and MLC, typical maximum PE-cycle-per-block numbers range from 1,500 to 10,000. For E-MLC, numbers range up to approximately 30,000 PE cycles per block. For SLC, devices can execute up to roughly 100,000 PE cycles per block. Mainstream SSDs these days are rated for 2,000 to 3,000 P/E cycles.

MLC VS SLC: Originally, memory cells stored just a single bit of information. However, the charge on the floating gate can be controlled with some level of precision, allowing a cell to store more information than just 0 or 1. Based on this, MLC (Multi-Level Cell) memory came into existence. To distinguish the two, the old memory type was called SLC (Single-Level Cell).
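The jump from SLC to MLC (and on to TLC) is a matter of how many charge levels one cell has to keep apart: n bits per cell need 2^n distinguishable levels. A quick sketch of that relationship:

```python
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3}


def charge_levels(bits_per_cell):
    """Number of distinct charge levels a cell must hold apart."""
    return 2 ** bits_per_cell


for name, bits in CELL_TYPES.items():
    print(f"{name}: {bits} bit(s) per cell -> {charge_levels(bits)} charge levels")
```

Packing more levels into the same voltage window leaves less margin between them, which is the usual explanation for why endurance drops from SLC to MLC to TLC.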

The choice between SLC and MLC is driven by many factors, such as memory performance, the target number of erase/program cycles and the required level of data reliability. MLC endurance is significantly lower (around 10,000 erase/program cycles) compared to SLC (around 100,000 cycles), but with current wear-leveling tricks these SSDs might still outlive you.

So What About the Lifespan of Newer 19nm NAND? NAND flash made at 25nm and below was introduced to be cheaper to produce. The rated lifespan of the ICs has dropped from 10,000 to 5,000 program/erase cycles, and now even hovers around 2,500 P/E cycles. As drastic as that sounds, it's all relative, as the drive will very likely still last longer than any mechanical HDD. Wear-leveling protection and careful usage will help you out greatly. With a normally filled SSD and very heavy usage of, say, 10 GB of writes each day, 365 days a year, you'd be looking at roughly 22 full-drive write cycles per year out of the 3,000 (worst case scenario) available. However, all calculations on this matter are debatable and theoretical, as usage differs and even things like how much free space you leave on your SSD can affect the drive.

I've been monitoring the usage myself. The latest generation of TLC and MLC NAND offers at the very least 2,500 P/E cycles. To give you an idea, on my main work system I use 4 P/E cycles per month, so 4 × 12 = 48 P/E cycles per year. If we take 2,500 P/E cycles as the point where cells start to die, 2,500 / 48 could mean a lifespan of about 52 years, but it will depend on how much you write, your workload and the stress you put on the SSD. With normal to even hefty usage you should easily get 10 to 20 years out of your SSD.
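Both lifespan estimates above boil down to the same division, sketched here. The 10 GB/day example does not state a drive capacity, so the 160 GB below is a hypothetical value that happens to land near the "roughly 22" figure. Like the text, it assumes one full-drive write equals one P/E cycle, i.e. perfect wear-leveling and no write amplification.

```python
def drive_writes_per_year(daily_writes_gb, capacity_gb):
    """Full-capacity write passes per year for a given daily write volume."""
    return daily_writes_gb * 365 / capacity_gb


def naive_lifespan_years(rated_pe_cycles, pe_cycles_per_year):
    """Rated P/E budget divided by yearly use, as in the text.

    Assumes perfect wear-leveling and ignores write amplification,
    so treat the result as a rough estimate only.
    """
    return rated_pe_cycles / pe_cycles_per_year


# The author's measurement: 4 P/E cycles per month against 2,500 rated.
print(round(naive_lifespan_years(2500, 4 * 12), 1))  # 52.1

# 10 GB/day on a hypothetical 160 GB drive (capacity not given in the text).
per_year = drive_writes_per_year(10, 160)
print(round(per_year, 1))                              # 22.8
print(round(naive_lifespan_years(3000, per_year)))     # 132
```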

Installation & Recommendations: On this page we want to share some thoughts on how to increase the lifespan and performance of your SSD. But first, the installation. Installing an SSD is no different from installing any other drive: connect the SATA and power cables and you are good to go. Once you power on that PC of yours, the first thing you'll notice is: no more noise. That by itself is downright weird compared to the old-fashioned spinning platters of an HDD. My system boot drive many moons ago was a WD Raptor, and when that HDD was crunching, you knew it was alive alright.

That's just no longer a reality. You will look at the SSD wondering "is that thing even working?" while the Windows 7 logo has already appeared on your monitor. No more purring, resonating or other weird noises; it is completely silent, and I like that very much. The second factor you can rule out is heat. Modern-day HDDs tend to get hot, or at least quite warm, and without cooling they can reach 40-50 degrees C pretty easily. That is no cause for worry, as the HDD can handle it, but the SSD stays cool to lukewarm: most SSDs get to roughly 25 degrees C, just above room temperature. Then there's that first boot on the SSD. Weird… it's fast… really fast. That's where you'll get the first smile on your face. But let's talk about taking some precautions. Remember, this is an MLC-based drive, and we want it to last at least ten years, right?

SSD Life-Span Recommendations: Drive wear on any SSD will always be a ghost in the back of your mind. Here are some recommendations and tips for a long lifespan and optimal performance. Basically, what is needed is to eliminate the HDD optimizations in Vista that cause lots of small file writes, such as Superfetch, Prefetch and background HDD defragmentation. In short (and this is for Vista and Windows 7):

▸ Indexing Service or Windows Search disabled (useless, as access times are so low)

▸ Prefetch disabled

▸ Superfetch disabled

▸ Defrag disabled

So make sure you disable the prefetchers. Also, especially with Vista and Windows 7, make sure you disable defragmentation on the SSD. You no longer have a mechanical drive, so it is not needed, and you certainly do not want defragmentation to wear out your drive; Vista runs it automatically when your PC is idle (picking its nose). Don't get me wrong, though: you could run a defrag without any problems, you just do not want it to happen regularly. For Superfetch, Prefetch and other services, type services.msc at the command prompt and use the Windows 8 / 7 / Vista Services console to disable them. To disable defragmentation:

Windows 8.x, 7 & Vista Automatic Defrag

▸ Click Start

▸ Click Control Panel

▸ Select Control Panel Home

▸ Click System and Maintenance

▸ Under the Administrative Tools section at the bottom, click Defragment your hard drive

▸ You may need to grant permission to open the Disk Defragmenter

▸ Check or uncheck Run automatically (uncheck to disable), depending on whether you want this feature enabled or disabled

▸ Click OK

Alternatively, just type dfrgui at the Vista Start search prompt.

Over time your SSD will get a little fragmented, but it's NAND flash, and there's no mechanical head moving back and forth to access that data, so just leave it disabled.
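If you prefer the command line, the automatic defrag schedule can also be switched off with Windows' schtasks tool. This is a minimal sketch that assumes the task path Windows 7 and later use for scheduled defrag (check it under Microsoft > Windows > Defrag in Task Scheduler first). Unlike the per-disk settings, disabling the task stops automatic defrag for every drive, HDDs included.

```python
import subprocess
import sys

# Assumption: the scheduled task Windows 7 and later use for automatic defrag.
DEFRAG_TASK = r"\Microsoft\Windows\Defrag\ScheduledDefrag"


def disable_defrag_task_cmd(task=DEFRAG_TASK):
    """Build the schtasks command line that disables the given task."""
    return ["schtasks", "/Change", "/TN", task, "/Disable"]


if __name__ == "__main__" and sys.platform == "win32":
    # Run from an elevated prompt; this turns off the schedule for ALL drives.
    subprocess.run(disable_defrag_task_cmd(), check=True)
```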

Windows 7, 8.x & The SSD TRIM Feature: Windows 7 and Windows Server 2008 R2 support the TRIM function, which the OSs use when they detect that a file is being deleted from an SSD. When the OS deletes a file on an SSD, it updates the file system but also tells the SSD via the TRIM command which pages should be deleted. At the time of the delete, the SSD can read the block into memory, erase the block, and write back only pages with data in them. The delete is slower, but you get no performance degradation for writes because the pages are already empty, and write performance is generally what you care about. Note that the firmware in the SSD has to support TRIM. TRIM only improves performance when you delete files. If you are overwriting an existing file, TRIM doesn't help and you'll get the same write performance degradation as without TRIM. In AHCI mode TRIM is activated automatically.
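A quick way to check whether Windows is actually sending TRIM is `fsutil behavior query DisableDeleteNotify`: a value of 0 means delete notifications (TRIM) are on. The sketch below parses that output; the function name is illustrative, and newer Windows versions may print separate NTFS and ReFS lines, of which it reads the first. It only tells you what the OS sends, and the SSD firmware still has to support TRIM.

```python
import re
import subprocess
import sys
from typing import Optional


def trim_enabled(fsutil_output: str) -> Optional[bool]:
    """Interpret the output of `fsutil behavior query DisableDeleteNotify`.

    DisableDeleteNotify = 0 means Windows sends delete notifications
    (TRIM); 1 means it does not. Returns None when no value is present.
    """
    match = re.search(r"DisableDeleteNotify\s*=\s*(\d+)", fsutil_output)
    if match is None:
        return None
    return match.group(1) == "0"


if __name__ == "__main__" and sys.platform == "win32":
    # Run from an elevated prompt.
    result = subprocess.run(
        ["fsutil", "behavior", "query", "DisableDeleteNotify"],
        capture_output=True, text=True, check=True,
    )
    print("TRIM enabled:", trim_enabled(result.stdout))
```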

Enable AHCI(!): The last, and a great, tip we want to give you for a little extra performance boost is to enable AHCI mode, which can help SSD performance greatly. Now, if you swap out an HDD for an SSD with the operating system cloned and THEN enable AHCI in the BIOS, you'll likely get a boot error / BSOD. The common question is: is there a solution for this?

Yes: as we do with all modern chipsets, there is a way to enable AHCI mode safely. Here we go:

▸ Start "Regedit"

▸ Open HKEY_LOCAL_MACHINE / SYSTEM / CurrentControlSet / Services

▸ Open msahci

▸ In the right pane, right-click "Start" and choose Modify

▸ In the Value data field enter "0" and click "OK"

▸ Exit "Regedit"

Reboot and enter the BIOS (typically by pressing the "Delete" key while booting). In your BIOS, select "Integrated Peripherals" and then "OnChip PATA/SATA Devices" (menu names vary by BIOS vendor), and change SATA Mode from IDE to AHCI. When you now boot into Windows 8, 7 or Vista, the OS will recognize AHCI and install the devices. The system then needs one more reboot, and voila… enjoy the improved SSD performance.
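If you would rather not click through Regedit by hand, the same change can be written as a .reg file and double-clicked. This sketch only generates the file text; the file name is hypothetical. The msahci key applies to Vista and Windows 7. Windows 8 and later use a different AHCI driver (storahci), so this key is not the right fix there.

```python
import sys


def msahci_reg_text(start_value=0):
    """Return .reg file text that sets the msahci service's Start value.

    Service Start values: 0 boot, 1 system, 2 automatic, 3 manual,
    4 disabled. The steps above set 0 so the AHCI driver loads at boot.
    """
    if start_value not in range(5):
        raise ValueError("service Start values range from 0 to 4")
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        r"[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\msahci]",
        f'"Start"=dword:{start_value:08x}',
        "",
    ]
    return "\r\n".join(lines)


if __name__ == "__main__" and sys.platform == "win32":
    # Hypothetical file name; newline="" keeps the explicit \r\n line endings.
    with open("enable_msahci.reg", "w", encoding="utf-16", newline="") as f:
        f.write(msahci_reg_text())
```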

Source: Guru3D

How to Disable Superfetch and Prefetch in Windows 7 and 8

Prefetch and, since Windows Vista, Superfetch, are technologies in Microsoft Windows that can significantly improve system responsiveness by predicting which applications a user is likely to launch and preemptively loading the necessary data into memory. While essential to ensuring a smooth user experience in systems with traditional hard drives, some systems with solid state drives may not see much benefit thanks to the SSDs’ inherent performance advantage, and Prefetch/Superfetch services may actually be detrimental to SSDs in the long run due to the unnecessary writes they generate.

In Windows 7, Microsoft attempted to address this issue by automatically disabling Superfetch and Prefetch when a fast SSD was detected. In Windows 8, however, the operating system tries to analyse the performance characteristics of the system’s storage and intelligently enable or disable Superfetch/Prefetch as needed.

While most users will be fine letting Windows decide how to use Superfetch and Prefetch on its own, there are situations in which Windows may make the wrong decision, and power users will want to manually disable or enable the services. This most often occurs with non-standard configurations such as fast RAID arrays of HDDs, or mixed use of both SSDs and HDDs.

Manually Disable Superfetch: To manually disable Superfetch in Windows 8, launch the Windows Services manager by right-clicking on the Desktop Start Button, choosing Run, and typing services.msc. Alternatively, you can search for services.msc from the Start Screen.

In the Services Manager, scroll down to find Superfetch, which is controlled by the Windows service called SysMain. Double-click Superfetch to launch its Properties window and click on Stop to stop it.

This will kill the service for now, but it will restart automatically at next boot unless we tell it not to. Under the “Startup Type” drop-down menu, select Disabled. Click Apply and then OK to save your changes. Close the Services Manager and reboot to have the change take effect.
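The same two steps (stop the SysMain service, then set its startup type to Disabled) can be done with Windows' sc.exe tool. A minimal sketch follows; the helper name is illustrative, and it has to run from an elevated prompt. Note that sc.exe insists on a space after "start=", which is why "start=" and "disabled" are separate arguments.

```python
import subprocess
import sys


def disable_superfetch_cmds(service="SysMain"):
    """sc.exe equivalents of the Services-manager steps above.

    sc.exe requires a space after "start=", so "start=" and "disabled"
    are passed as separate arguments.
    """
    return [
        ["sc.exe", "stop", service],
        ["sc.exe", "config", service, "start=", "disabled"],
    ]


if __name__ == "__main__" and sys.platform == "win32":
    # Run elevated. "stop" fails harmlessly if the service is already stopped,
    # so only the config step is checked. Reboot afterwards.
    stop_cmd, config_cmd = disable_superfetch_cmds()
    subprocess.run(stop_cmd, check=False)
    subprocess.run(config_cmd, check=True)
```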

Manually Disable Prefetch: After you disable Superfetch, you can disable Prefetch from the Windows Registry. Launch the Registry Editor by right-clicking on the Desktop Start Button, choosing Run, and typing regedit. Just as before, you can also launch the Registry Editor by searching for regedit on the Start Screen.

From the Registry Editor, navigate to the following location:

HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Memory Management\PrefetchParameters

On the right side of the window, double-click on EnablePrefetcher. You can configure Prefetch in one of four ways by entering the corresponding number in the Value Data box:

0 – Disables Prefetcher

1 – Enables Prefetch for Applications only

2 – Enables Prefetch for Boot files only

3 – Enables Prefetch for Boot and Application files

The default value is 3; setting it to 0 will disable Prefetching.
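To check the current setting without opening the Registry Editor, Python's Windows-only `winreg` module can read EnablePrefetcher from the key above. This is a read-only sketch; the helper names are illustrative, and changing the value would additionally need administrator rights.

```python
import sys

PREFETCH_KEY = (r"SYSTEM\CurrentControlSet\Control\Session Manager"
                r"\Memory Management\PrefetchParameters")

PREFETCH_MODES = {
    0: "Prefetcher disabled",
    1: "Prefetch for applications only",
    2: "Prefetch for boot files only",
    3: "Prefetch for boot and application files",
}


def describe_prefetch(value):
    """Translate an EnablePrefetcher value into the meanings listed above."""
    return PREFETCH_MODES.get(value, f"Unrecognized value {value}")


def read_enable_prefetcher():
    """Read EnablePrefetcher from HKEY_LOCAL_MACHINE (Windows only)."""
    import winreg  # standard library, but only available on Windows

    with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, PREFETCH_KEY) as key:
        value, _value_type = winreg.QueryValueEx(key, "EnablePrefetcher")
    return value


if __name__ == "__main__" and sys.platform == "win32":
    print(describe_prefetch(read_enable_prefetcher()))
```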

As mentioned, most users do not need to adjust Prefetch/Superfetch settings, and setting incorrect values can increase boot and application launch times significantly. But advanced users with non-standard drive configurations, or those running Windows in virtual machines, may want to exercise manual control over these important services.

Source: Tekrevue

How come Auslogics Disk Defrag and Microsoft’s defrag tool in Windows 7 have exactly the same icon?

How about MyDefrag? In my experience it is fast and has many options for optimizing storage (weekly, daily, monthly, SSD optimize, etc.).

This is comical. I installed MyDefrag after reading this comment. It caused my boot time to increase by 16 seconds, and it froze when instructed to defrag an external drive. The defrag option in Glary Utilities defragged the external drive in about 10 seconds.

Exact reason why I uninstalled Glary Utilities. That defrag tool is a complete joke.

Do you really think that in 16 seconds it will do a complete defrag?

Wake up from your dream, man. That does almost nothing to your drive.

Also, I found other tools from Glary Utilities to be under par.

For example the app uninstaller does not do a deep uninstallation of leftovers (registry keys and so on).

RAM optimizer does not defrag RAM (which many tools of that kind do today).

I advise using specific tools for specific tasks rather than do-all apps that are jacks of all trades but not very good at any of them.

“ssd optimize”

You never defrag or optimize SSDs.

You just have to make sure the SSD is aligned and TRIM is on.

My Win7 system runs the defrag service automatically on a seemingly daily basis. Unless you disable it, all newer Windows systems will probably do this as well. No particular need for any additional software to handle defrags.

As far as I know, Windows’ built-in defrag runs correctly but diagnoses the need for defragmentation poorly, which means it considers a defrag unnecessary even when it may be needed. Generally speaking, the built-in tools in Microsoft’s OSs are a poor substitute for the quality applications available elsewhere. In fact, Microsoft makes a good OS once in a while (none since Seven) but never succeeds with either browsers or tools; practically all of them are crap or rudimentary. Better to look for serious applications; many are available, even free. Not to put too fine a point on it, but Microsoft is cheap, and not in terms of price. Office is an exception, at least until 365, now that the new Microsoft policy (cheaper than cheap) is spreading across all its products.

What is false, anon? We’re referring to Windows 7 and you’d know that if you took the time to read.

So, you’re happy with Windows’ — 8, 8.1, 10? — built-in disk defrag? Microsoft needs people like you!

This is false. The built-in defragging tool has been the best since Windows 7. Everything else is placebo snake-oil garbage.

Microsoft’s built-in disk defrag program hasn’t been very good since Vista, which had the absolute worst defragmenter ever. Our shop recommends that customers run Defraggler weekly if they use their machines daily: do a quick defrag on hard drives and a quick optimize on SSDs. This does make a difference in performance on an ongoing basis.

I agree that the effects of fragmentation on SSDs are reduced because they have no disk heads that need to move. However, one thing I wonder about is whether the extra metadata needed to keep track of all the fragments of heavily fragmented files causes noticeable overhead. (I’m not talking about the amount of metadata; rather, I’m thinking about the extra processing time it takes to read, write and process it.) Even if this overhead is noticeable, I wonder whether the wear caused by the extra writes needed to defragment files would be worse than keeping them fragmented.

“I agree that the effects of fragmentation on SSDs”

You never defrag SSDs.

Never defrag SSDs? I used to think that.

In fact, the file system has to keep track of each fragment with metadata. The processing required to deliver file data grows with fragmentation over time.

Unfortunately there is a limit to the total number of fragments the file system can track. If the limit is reached data does not get written to the drive. Consider the repercussions. I imagine most people would assume media wear/failure is the sole cause of a write error.

So, it can be necessary to defrag an SSD. The question is when. Microsoft has been tracking metadata status on SSDs since Windows 8 and automatically defragging specifically to address potential metadata problems.

Look for this capability in the defragging tool you use.

You are right, Martin. Defraggler is still the best defragmenter around. I’ve used it for 3 years. It is amazing how many files have more than 1,000 fragments after a couple of weeks. I have not noticed much difference in speed but it’s nice to see all the red squares disappear.

@Andrew.

You are right, it’s very relaxing to watch.

Is Defraggler really the best? I never used it, but according to benchmarks it is not so:

http://www.hofmannc.de/en/windows-7-defragmenter-test/benchmarks.html

I have used a few defragmenters (although not Defraggler). But so far MyDefrag seems to be the best choice, if “the best” refers to the overall disk performance after defragmentation.

Hi Martin,

just a tip for you :)

“program have become heavily defragmented over time” – please change it to fragmented. Programs get slow because disks write fragments all over the disk; defragmenting means eliminating those fragments and moving files so they are contiguous. The same error appears in this sentence: If you notice a deterioration in performance when opening programs or specific features of a program, you may want to check if files it loads are defragmented.

Sorry if I’m being rude or anything.

Thanks, you are right of course.

I remember I used to defrag my disks often, prior to Vista. Thinking about it now, I wonder whether I noticed any improvement. Who knows, but I think I mostly did it because I felt it was necessary, and sometimes I liked watching it, more so in the DOS/Win9x versions.

Lordy, Back in the Day, I thunk I was the only person who could possibly enjoy watching that thingie move along, eating those little boxes of the wrong color!! Thanks!!

Defragmentation is indeed still quite important. There is a problem today, in that hard disks have increased greatly in density and capacity, but not much in speed. If you use much of the capacity of a large hard disk, the time to defragment it becomes rather unreasonable. This problem of increasing time also applies to other hard disk maintenance, such as a zero-fill disk initialization. I would have preferred the hard disk industry to focus more on making hard disks faster rather than on making them higher capacity.

On a side note, I have not yet found a defragmentation utility for systems running an Android operating system.

“On a side note, I have not yet found a defragmentation utility for systems running an Android operating system”

I don’t know if any exist, but you wouldn’t want to do this in Android for a couple of reasons. First of all, Android devices use flash storage, and this has much more limited read-write cycles compared to a platter hard drive. Running a defrag process on a flash drive would measurably reduce its lifespan. (This is one reason why SSDs on a PC should never be defragmented.)

Secondly, from about version 2.3, Android has been using the ext4 file system, which is the standard on Linux desktop machines. Ext4 is resistant to fragmentation, and fragmentation only starts to noticeably affect performance when your drive gets near capacity.

If you have a fragmentation problem on your Android drive, the only solution I can suggest is to make a backup of the entire drive (including the operating system), then delete everything on the drive and restore from the backup.

I do not know how many, but I would say enough. I would guess that most that use platter-style storage disks use the disks as external storage, typically over USB.

True, but how many of those are out there?

Though most users do not, Android devices can utilize platter-style hard disks.

Thanks for the article, Martin. Defragmentation apps are definitely still of value to me for use on my platter drives. I prefer UltraDefrag (found at sourceforge.net), but also use Defraggler from time to time.

Unfortunately no SSD here, still with a snail platter-based drive. Next system will be with SSD but meanwhile disk defragmentation is required, at least after a long and intensive usage. Speaking of delays for defragmenting the disk drive, I’ve read an article advising to avoid defragmenting the *system* drive too often, but I still don’t know why. Any idea?

Of course, once SSDs have made platter-based drives a thing of the past, as diskettes are now (and as CDs/DVDs soon will be), disk defragmentation will join those stories granddads enjoy telling their grandchildren (don’t forget the fireplace!)

My systems all have multiple hard drives. I use an SSD ONLY for the system drive, which contains only the OS and programs. Storage goes onto my other drives, which are traditional magnetic disk drives; of course, they are enterprise class. One system has two 6TB drives and the other two 4TB drives. The third, a laptop, has an M.2 for the system drive and a 2TB traditional magnetic disk drive. SSDs and M.2 drives are too expensive for the large capacities I use.

Yeah. I noticed this the other day (I opened Defraggler to check the version number and on a whim scanned my secondary drive, where all my portables live), and the top fragmented file was QuiteRSS’s feeds.db, in 6,000+ pieces. After 3+ years and 2,000+ starred articles in an otherwise clean/empty database, feeds.db was only 65 MB, but it was getting a bit clunky to load and initially open (upwards of 30 seconds sometimes). Defragged that sucker and now it’s like brand new.