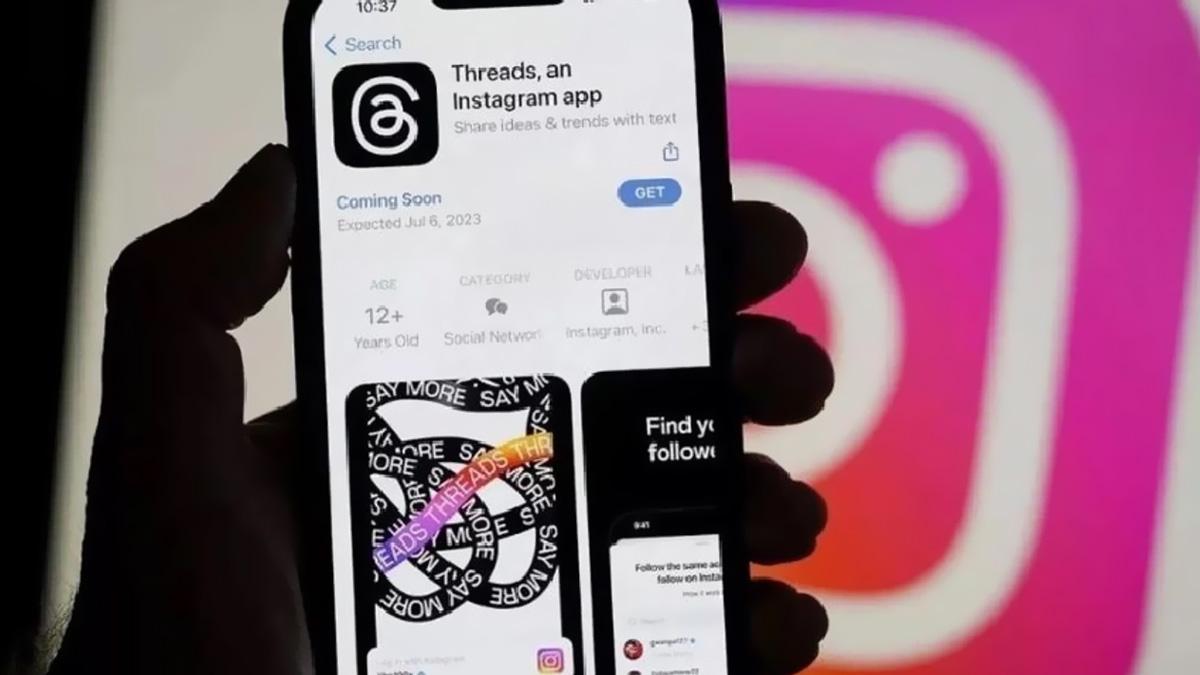

Elon Musk and others call for a six-month AI pause, citing ‘risks to society.’

It’s official: Elon Musk, industry executives, and a group of AI experts have called for a six-month pause in developing systems more powerful than OpenAI’s recently released GPT-4, in an open letter citing potential risks to society.

As many of us know, Microsoft-backed OpenAI launched the fourth iteration of its GPT program earlier this month. The latest Generative Pre-trained Transformer has left users in awe with its human-like conversation, its ability to summarise lengthy documents, and its song composition.

The Future of Life Institute letter read, "Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable."

According to the European Union's transparency register, the Future of Life Institute, a non-profit, is mainly funded by the Musk Foundation, the London-based group Founders Pledge, and the Silicon Valley Community Foundation.

Earlier this month, Elon Musk said, "AI stresses me out." Musk has expressed frustration with regulators' efforts concerning Tesla's Autopilot system. He has, however, sought a regulatory authority to ensure that AI development serves the public interest.

James Grimmelmann, professor of digital and information law at Cornell University, responded, "It is … deeply hypocritical for Elon Musk to sign on, given how hard Tesla has fought against accountability for the defective AI in its self-driving cars."

"A pause is a good idea, but the letter is vague and doesn't take the regulatory problems seriously," he added.

Last month, Tesla recalled more than 362,000 US vehicles to update their software after US regulators said its driver-assistance system could cause crashes. To no one's surprise, Musk took to Twitter, tweeting that the word "recall" for an over-the-air software update is "anachronistic and just flat wrong!"

OpenAI did not immediately respond to requests for comment on the open letter. The letter calls for a pause until independent experts have developed shared safety protocols, and urges developers to work with policymakers on governance before continuing with advanced AI development.

The letter asked, "Should we let machines flood our information channels with propaganda and untruth? … Should we develop non-human minds that might eventually outnumber, outsmart, obsolete and replace us?", adding that "such decisions must not be delegated to unelected tech leaders."

While Musk and more than 1,000 others signed the letter, OpenAI chief executive Sam Altman was not among the signatories, nor were the top executives of Microsoft and Alphabet, Satya Nadella and Sundar Pichai.

Co-signatories included researchers at Alphabet-owned DeepMind, Stability AI chief executive Emad Mostaque, Stuart Russell, a pioneer of research in the field, and AI heavyweight Yoshua Bengio, often referred to as one of the "godfathers of AI."

Europol, the EU's police agency, has already warned about the potential misuse of such systems for disinformation, cybercrime, and phishing. At the same time, the UK government has unveiled proposals for an “adaptable” regulatory framework around AI. These concerns come as ChatGPT draws attention from US lawmakers, who have questioned its impact on education and national security.


I got scared the moment I heard of ChatGPT, and I am glad to learn that many brilliant people are also worried. However, who can step in and put on the brakes? Only governments, and that is never a good thing.