Indispensable Webmaster Tools And Resources

If you run a website, whether as a hobby, semi-professionally, or professionally, you need to know some tools of the trade.

Webmaster tools can help in many different areas. They can help you make sure that sites display correctly in all modern web browsers, reveal issues such as problems with the site's visibility in search engines, or inform you about new policies and changes.

The following list is a collection of webmaster tools that should help most webmasters. Many would consider them basic tools that every webmaster should know and use. Most of the tools are accessible from all operating systems and web browsers.

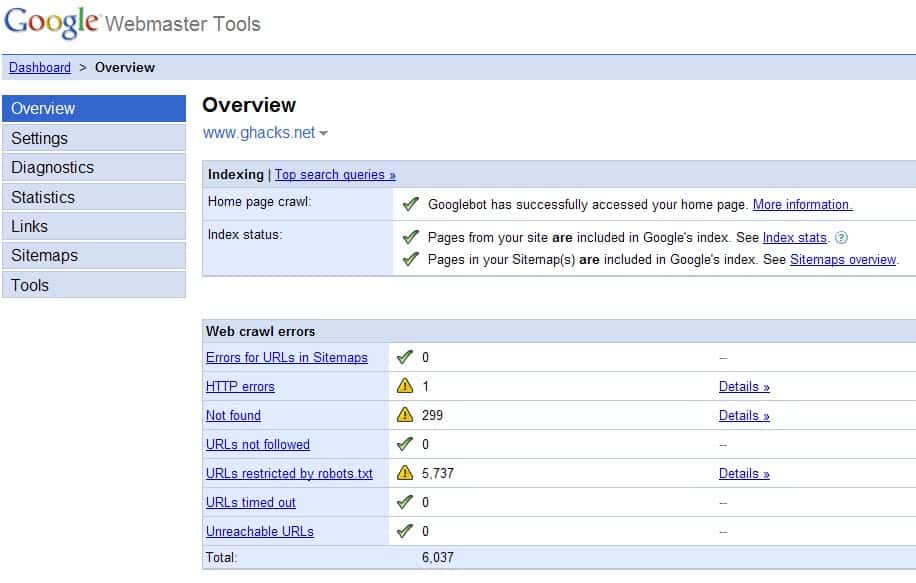

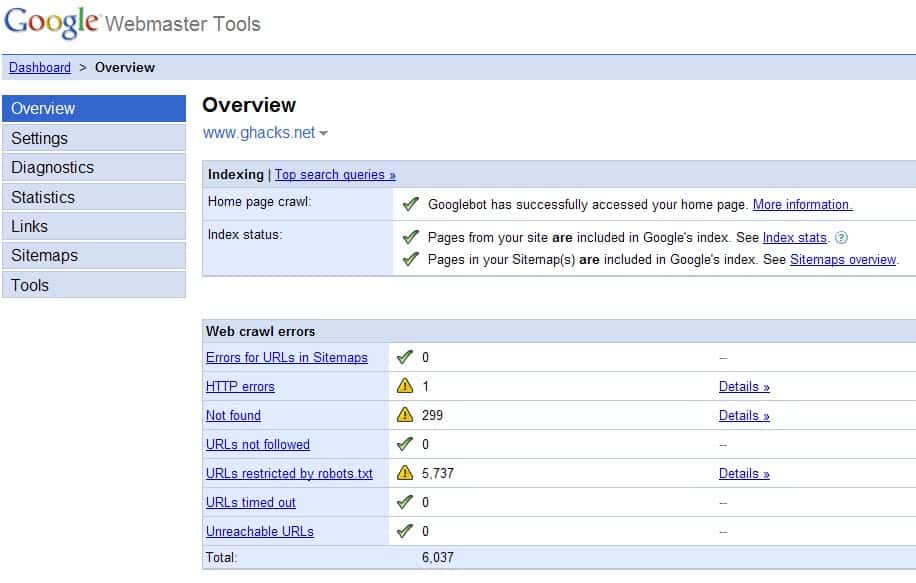

Google, Yahoo and Microsoft Webmaster Tools

Each of the three major search engines provides access to online webmaster tools. These tools require an account, but they display all kinds of information about how the website fares in that specific search engine.

This includes crawling errors, statistics about indexed pages, visitors, sitemaps and troubleshooting help.

- Google Webmaster Tools (known as Google Search Console now)

- Bing Webmaster Tools

- Yahoo Site Explorer (Yahoo Site Explorer has been integrated into Bing Webmaster Tools)

Validation Services

The W3C Markup Validation Service checks the syntax of webpages. It reports syntax errors after it has crawled the page that you entered in the form on the site.

The issues are sorted by severity. Fix the most severe category as soon as possible, as these issues can lead to broken functionality, a bad user experience, sites that do not render properly in some browsers, or even lower search visibility. It is a good idea to check the source checkbox on the page: the validator then displays the source code of the webpage, which makes it much easier to locate the erroneous syntax.

Other services of interest include SSL test services that check a site's SSL implementation.

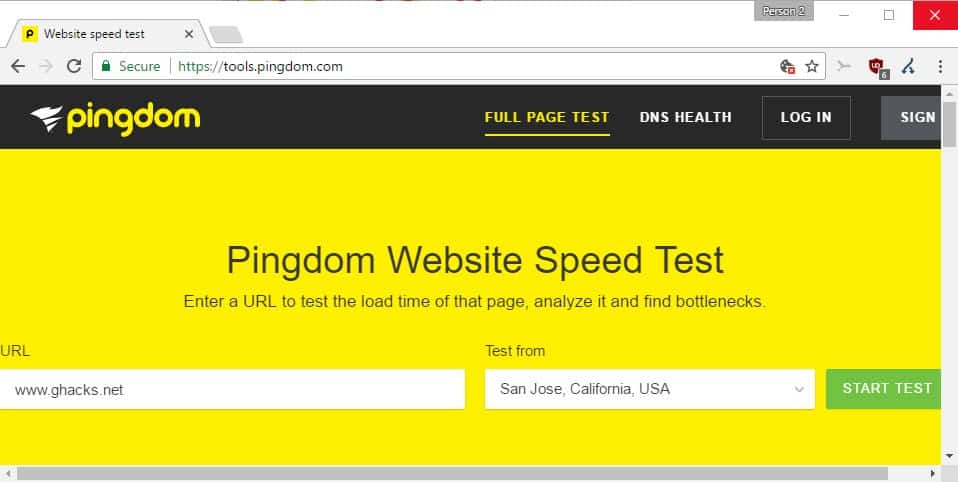

Speed and Performance tests

Speed and performance have become a hot topic on today's Internet. Google, for instance, stated some years ago that speed is part of the company's algorithm that ranks websites.

Web Browsers

Testing websites in different web browsers is a must for every webmaster. What displays fine in Internet Explorer can throw up error messages in Opera or Firefox.

Web Statistics

Web statistics tools record and analyze the traffic of a web property. They provide extensive information about visitors (where they come from, which pages they accessed, how long they stayed), referring websites, errors and more.

Webmasters can either install third-party tracking code or run a web statistics script directly on the server. Running a third-party tool decreases the load on the server, as no processing power is needed to crawl and analyze the access logs. The disadvantage is that a piece of JavaScript has to be loaded on every page request, which increases page loading times. It also means that data about the traffic is available to a third party.

- AWStats (Server)

- Google Analytics (Third Party)

- Sitemeter (Third Party)
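To give a rough idea of what a server-side statistics script does with the access logs, here is a minimal Python sketch. It assumes log lines in the common Apache access log layout; the sample lines, the `LOG_PATTERN` expression and the `summarize` function are made up for illustration and are not taken from AWStats or any other tool.

```python
import re
from collections import Counter

# Assumed layout: host, two unused fields, [timestamp], "METHOD path protocol", status, size.
LOG_PATTERN = re.compile(
    r'(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) (?P<size>\S+)'
)

# Hypothetical sample data; a real script would read the server's log file instead.
SAMPLE_LOG = """\
203.0.113.5 - - [10/Oct/2023:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 2326
203.0.113.9 - - [10/Oct/2023:13:56:01 +0000] "GET /about.html HTTP/1.1" 200 1045
203.0.113.5 - - [10/Oct/2023:13:57:12 +0000] "GET /index.html HTTP/1.1" 200 2326
203.0.113.7 - - [10/Oct/2023:13:58:40 +0000] "GET /missing.html HTTP/1.1" 404 512
"""


def summarize(log_text):
    """Count successful page hits and error responses per requested path."""
    pages = Counter()
    errors = Counter()
    for line in log_text.splitlines():
        match = LOG_PATTERN.match(line)
        if match is None:
            continue  # skip lines that do not fit the assumed layout
        path = match.group("path")
        if int(match.group("status")) >= 400:
            errors[path] += 1
        else:
            pages[path] += 1
    return pages, errors


pages, errors = summarize(SAMPLE_LOG)
print("Most requested pages:", pages.most_common(3))
print("Paths returning errors:", dict(errors))
```

Real tools do far more (referrers, visit duration, bot filtering), but the principle is the same: the server already has the data, so no JavaScript has to be loaded by the visitor.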

Resources

The following resources may be useful as well.

Robots.txt

Robots.txt files can be used to guide search engine bots on a website. They can allow or prevent access to certain files and directories.
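As a small illustration of how such rules are interpreted, the following Python sketch feeds a hypothetical robots.txt to the standard library's `urllib.robotparser` module. The rules, the bot name `ExampleBot` and the example.com URLs are assumptions made for this example only; real search engine bots may apply their own matching rules.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration; a real file is served from /robots.txt on the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /
"""


def build_parser(robots_txt):
    """Parse robots.txt content that is already available as a string."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Ask whether a (made-up) bot may fetch two different URLs.
blocked = parser.can_fetch("ExampleBot", "https://example.com/private/report.html")
allowed = parser.can_fetch("ExampleBot", "https://example.com/index.html")
print("private page allowed:", blocked)
print("index page allowed:", allowed)
```

With these rules, everything under /private/ is off limits to compliant bots, while the rest of the site stays accessible.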

.htaccess

.htaccess is a very powerful configuration file supported by Apache web servers. It can be used for various things, such as password-protecting directories or redirecting visitors from 404 error pages to another page.

Firebug

If there was only one Firefox add-on that webmasters could use, they would certainly pick Firebug. The add-on displays real-time information about the active website in the web browser. Webmasters can select elements on the website to be taken directly to the code that creates that element, including its CSS properties. It can also be used to monitor network activity and to debug JavaScript. Several extensions are available for the add-on to increase its functionality further.

- Firebug (requires Firefox)

Note that modern browsers ship with Developer Tools built-in. A program like Firebug may not be needed anymore as a consequence.

Selenium

Selenium is a web application testing system for the Firefox web browser. It can be configured to perform clicks, typing and other actions on a website. These actions can be played back at a later time with different variables, such as different web browsers or languages.

- Selenium (requires Firefox)

If you can think of any other resources that are missing from this list, let us know.
