Find out who is copying your texts with Count Words for Firefox

One of the biggest issues that webmasters face on today's Internet is copycats, often referred to as scrapers or scraper sites. These sites repost articles and texts published by other webmasters on their own sites. Why are these sites popular? They are easy to set up, receive traffic from search engines, and sometimes even manage to outrank the site the article was originally posted on.

In short: it takes less than ten minutes to set up such a site, and after that everything runs on autopilot, bringing in traffic and revenue.

The only defense that webmasters have against these types of sites is to write lots of DMCA takedown requests, or to complain about the site to advertising companies, web hosts or domain registrars.

WordPress webmasters can install the excellent PubSubHubbub plugin to notify companies like Google of new content as soon as it is published, which helps establish them as the original author of that content.

Finding the copycats

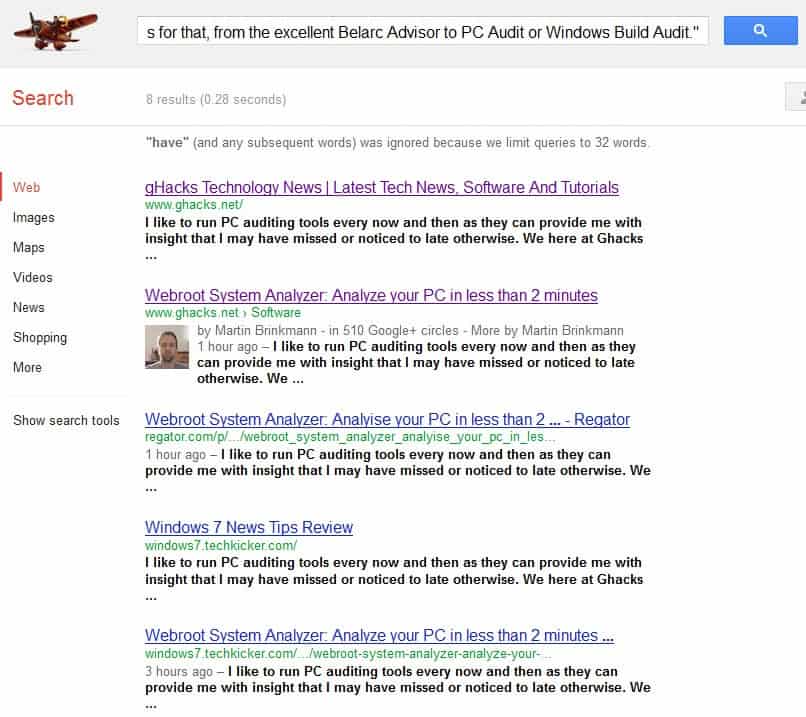

How do you find out who is copying your articles? The easiest way is to search for a sentence or paragraph in search engines such as Google or Bing. This not only reveals sites that copy your content, but also whether your own website is listed at the top of the results or a scraper site has managed to take that coveted spot from yours.

I suggest searching with quotation marks first to find exact copies only, and then again without them. The example above yielded a few sites that had copied at least the first paragraph of the latest article here on Ghacks. You still need to visit those sites to see whether it is just a quote or the full article has been copied and pasted.
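If you run these two searches often, building the URLs by hand gets tedious. The following is a minimal sketch, not part of Count Words, that turns a sentence into a quoted (exact-match) and an unquoted search URL for Google and Bing. It assumes both engines accept the query in the `q` parameter of their `/search` page; the function name `search_urls` is hypothetical.

```python
from urllib.parse import quote_plus

# Base search URLs (assumption: both engines read the query from "q").
SEARCH_ENGINES = {
    "google": "https://www.google.com/search?q=",
    "bing": "https://www.bing.com/search?q=",
}


def search_urls(sentence: str) -> list[str]:
    """Return an exact-match (quoted) and a broad (unquoted) URL per engine."""
    sentence = " ".join(sentence.split())  # collapse extra spaces and line breaks
    queries = [f'"{sentence}"', sentence]  # quoted search first, then unquoted
    # quote_plus encodes spaces as "+" and the quotation marks as %22
    return [base + quote_plus(q) for base in SEARCH_ENGINES.values() for q in queries]


if __name__ == "__main__":
    for url in search_urls("One of the biggest issues that webmasters face"):
        print(url)
```

Opening the printed URLs in the browser gives you both the exact-copy results and the broader results for each engine.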

You also need to remember that sites that rewrite the content, manually or automatically, usually do not show up in those results. Article spinners or rewriters are available as plugins for popular scripts such as WordPress; they automatically turn the original article into a barely readable mess that passes Copyscape. Sites can fool bots this way, but they fail manual inspections and do not fool human visitors.

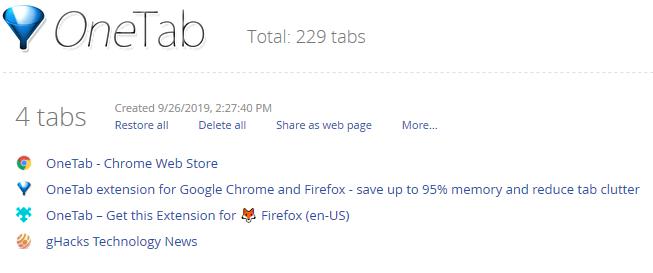

If you do not want to copy and paste all of your article paragraphs into search engines manually, you can use Count Words for Firefox instead. Just install the extension, highlight the words you want to check, and click the new icon it adds to the browser's status bar to search for them on Google Search.

Remember that Google limits search queries to 32 words, which means that it is usually not necessary to select more than one or two sentences from each article. Once you have found scraper sites, you can ask them nicely to remove your content if you find a way to contact the webmaster, or use a Google form to get the content removed from the search engine. Other search engines offer similar forms.
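Because of that 32-word limit, a long article has to be checked in sentence-sized pieces. Here is a minimal sketch, unrelated to how Count Words works internally, that splits an article into queries of one or more whole sentences, each at most 32 words long; a single sentence longer than the limit is trimmed. The naive sentence split on `.`, `!` or `?` followed by whitespace is an assumption (abbreviations such as "e.g." will cause extra splits), and `query_chunks` is a hypothetical name.

```python
import re

MAX_QUERY_WORDS = 32  # query limit as stated in the article


def query_chunks(article: str, max_words: int = MAX_QUERY_WORDS) -> list[str]:
    """Group consecutive sentences into queries of at most max_words words."""
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", article.strip())
    chunks: list[str] = []
    current: list[str] = []
    for sentence in sentences:
        words = sentence.split()
        if not words:
            continue
        # Start a new query if this sentence would push us over the limit.
        if current and len(current) + len(words) > max_words:
            chunks.append(" ".join(current))
            current = []
        current.extend(words)
        # A single overlong sentence is trimmed to the first max_words words.
        if len(current) > max_words:
            chunks.append(" ".join(current[:max_words]))
            current = []
    if current:
        chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    text = "Scraper sites copy articles. They are easy to set up. Some outrank the original."
    for chunk in query_chunks(text):
        print(chunk)
```

Each chunk can then be pasted into a search engine, with and without quotation marks.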

Closing Words

Count Words simplifies the process of finding copies of text on the Internet. It can also be useful for editors, teachers or university professors who want to make sure that a text they are reviewing is original and not copied from another source. In the end, it makes the process easier to complete but does not add anything beyond that. Alternatively, you could place two browser windows side by side, one with the article you want to review and the other with a search engine such as Google or Bing, to speed up the manual search process.


I always wanted to do that but didn’t know how. Thank you for this great article, which will help me catch the copycats.

Gizmodo is a perfect example: I have never seen an original article on Gizmodo.

Many of those larger sites are aggregators. Write one or two paragraphs, link to the original source, done. Seems to work pretty well for them.

I always do that exact-match search on Google. This plugin could be a time saver. @Martin I wonder, don’t you file DMCA complaints for stolen content? Look how freely people are copying your content without permission.

I’m not filing DMCA requests. I have contacted a few sites in the past and most have agreed to remove the content, but it would be more than a part-time job if I pursued it strictly.