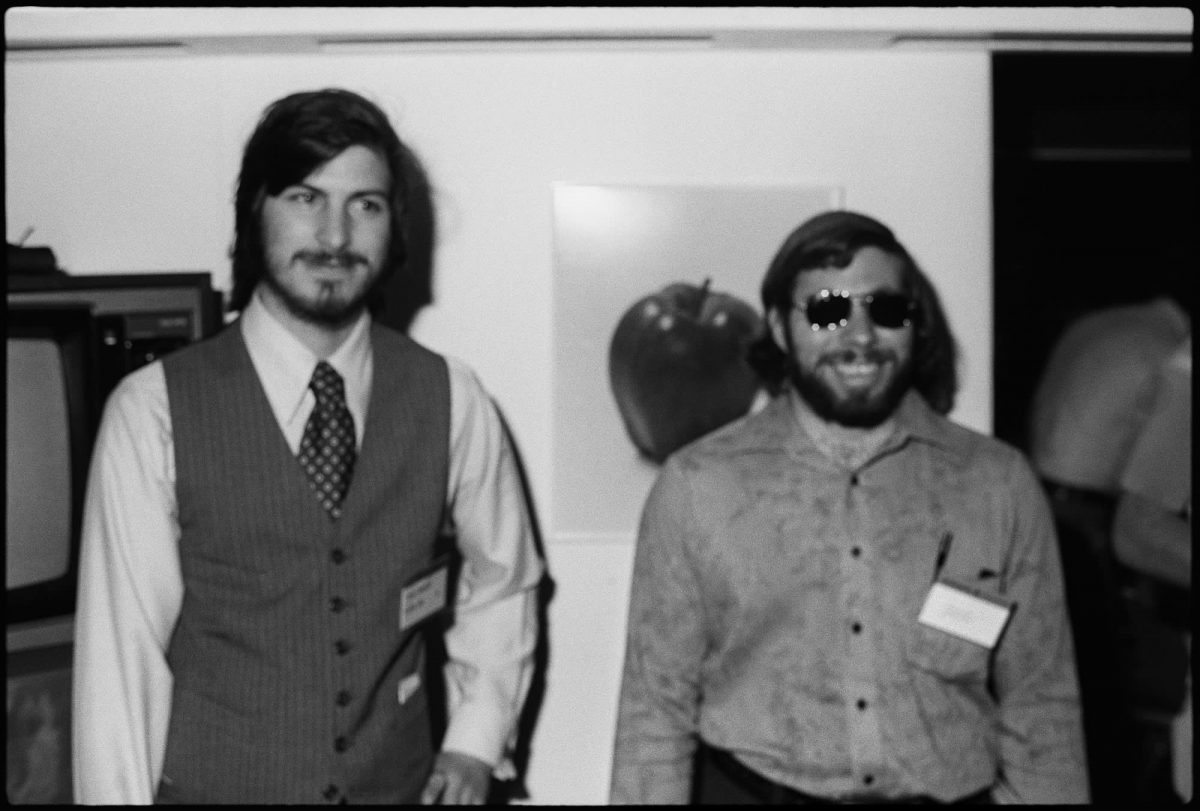

Steve Wozniak warns about AI dangers

Apple co-founder Steve Wozniak has said that scams and misinformation may become harder to spot because generative AI tools such as ChatGPT can produce text that sounds highly intelligent.

In an interview with the BBC, Wozniak discussed some of the issues that AI has brought into our lives. He believes AI-generated content should be clearly labeled and that the sector needs regulation. Although artificial intelligence has many benefits, it can also become a real threat in the wrong hands. "A human really has to take the responsibility for what is generated by AI," he said.

Because AI lacks emotion, Wozniak doesn't think it will ever completely replace people. He did warn, however, that it will make bad actors even more convincing, since tools like ChatGPT can write text that "sounds so intelligent." He added, "AI is so intelligent it's open to the bad players, the ones that want to trick you about who they are."

Wozniak believes it is almost impossible to stop the technology, but that people can be prepared to recognize malicious attempts, so they won't be harmed even if a "bad actor" in the industry targets them.

Wozniak signed the open letter in March

Several prominent figures, including Elon Musk, signed an open letter in March calling for a pause of at least six months on training AI systems more powerful than GPT-4, and Steve Wozniak was one of the technology pioneers who added his signature.

Geoffrey Hinton, often called the "godfather of AI," shares similar concerns with some of the best-known names in technology, including Musk and Wozniak. Hinton recently left his job at Google so that he could speak freely about the dangers of artificial intelligence without it reflecting on the company.

As competition intensifies between technology giants such as Google and Microsoft, Hinton thinks artificial intelligence will cause more people to lose their jobs and could ultimately even end humanity.


Ending humanity is not as far-fetched as it sounds. If a bad actor managed to infiltrate a nuclear silo and used AI to launch a nuclear missile, the consequences would be unthinkable, especially if the AI took steps to block attempts to disarm the missile.