Block Firefox connections with ReqBlock

ReqBlock is a WebExtension for the Firefox web browser that enables you to check and block Firefox connections to web resources.

Firefox users have a couple of options when it comes to blocking resources. They can run an add-on in the browser to block resources, or they can configure the operating system's firewall or hosts file to do so.

Add-ons in this category include, among many others, the popular NoScript blocker and content blockers such as uBlock Origin or Adblock Plus.

It may make sense to use the firewall or hosts file instead, as doing so has some advantages, but also disadvantages, compared to using an add-on. The main advantage is that it blocks the resource regardless of the program you use. A disadvantage is that it is not as easy to manage the list or to set up temporary exceptions.

ReqBlock

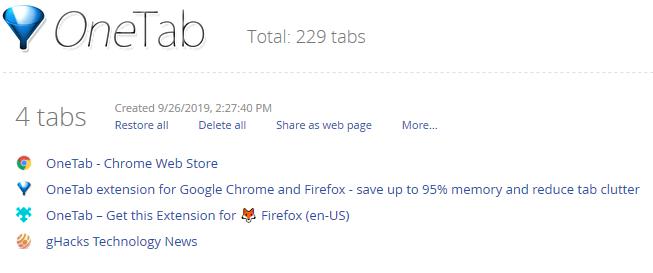

ReqBlock for Firefox is a brand-new add-on that lets you add web resources to a blacklist so that connections to these resources are blocked.

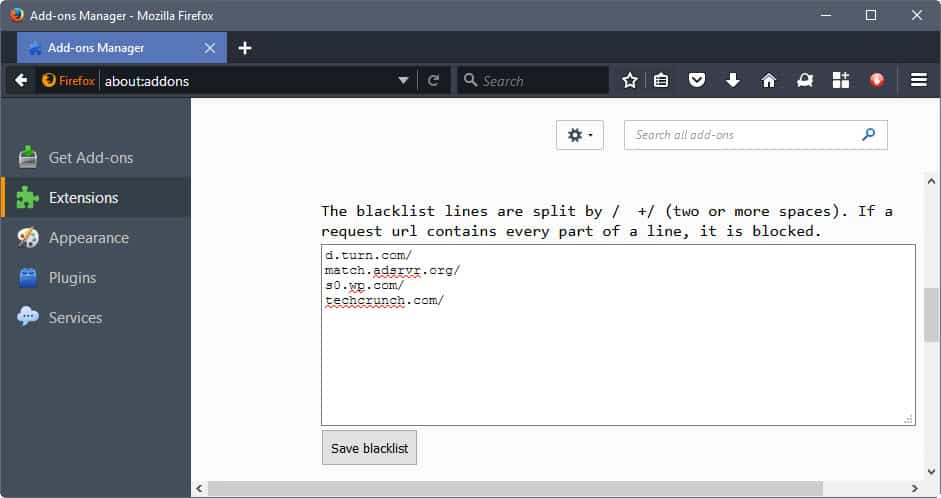

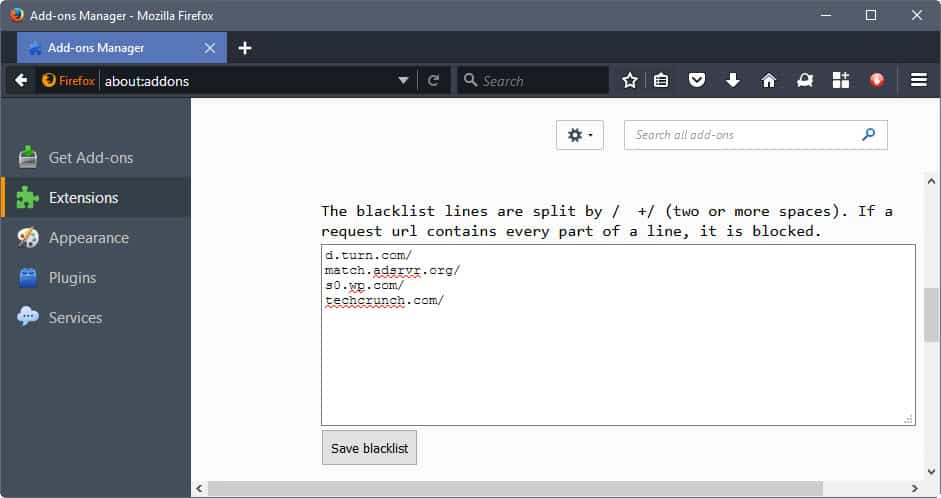

All this happens on the options page. There you find the blacklist; simply add, edit or remove entries. Editing works just like editing text in a plain text editor.

I found the instructions a bit difficult to understand (see screenshot), but adding one resource per line worked well.

You can add domain names, but also resource paths without any domain, e.g. /pagead/. The latter is useful if you want to block a script or resource that is used by several sites, not just by one.
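ReqBlock's exact matching rules are not documented in the article, so the following is only a minimal sketch, assuming that each blacklist line is a plain substring and that a request is blocked when its URL contains any entry (this is consistent with a commenter's remark further down that an entry like "pagead" blocks every request containing that word). The function names and example URLs are hypothetical. Inside a real WebExtension, a check like this would presumably run in a `webRequest.onBeforeRequest` listener that cancels matching requests.

```python
from __future__ import annotations


def parse_blacklist(text: str) -> list[str]:
    # Hypothetical format: one entry per line, blank lines ignored.
    return [line.strip() for line in text.splitlines() if line.strip()]


def is_blocked(url: str, blacklist: list[str]) -> bool:
    # Assumed rule: block if any entry appears anywhere in the URL,
    # so a domain-less path such as /pagead/ matches on every site.
    return any(entry in url for entry in blacklist)


blacklist = parse_blacklist("""
doubleclick.net
/pagead/
""")

print(is_blocked("https://example.com/pagead/ad.js", blacklist))
print(is_blocked("https://example.org/static/pagead/ad.js", blacklist))
print(is_blocked("https://example.com/index.html", blacklist))
```

The first two calls print `True` (the path entry matches on two different sites) and the third prints `False`.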

A click on the "save blacklist" button saves the updated list. It takes effect immediately, and resources that you have added to the list should be blocked and no longer load.

You can verify this by loading a site that uses these resources and opening the Network Monitor in Firefox's Developer Tools.

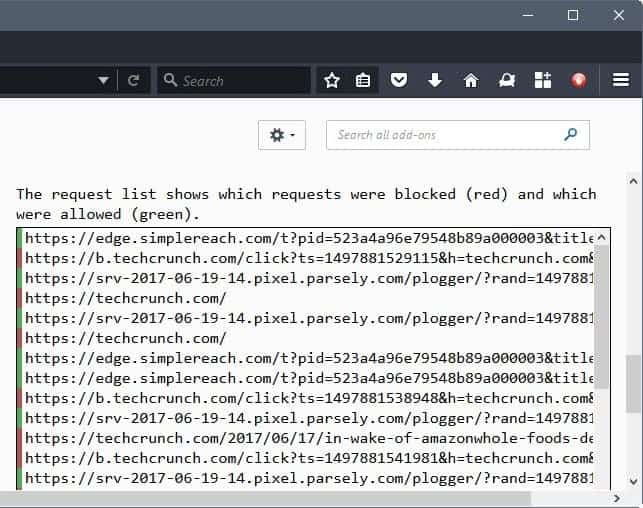

However, the add-on also lists all recent connections on the options page, so it is not really necessary to do so for verification purposes.

The connections are color-coded: red indicates a blocked connection, green an allowed one.

Beyond that, the extension is simple. It does not add a button to the Firefox toolbar, so there is no indication of whether content was blocked when you load a site. This also means that there is no easy way to turn the functionality off. The only options you have are to disable the add-on or to remove entries from the blacklist.

There is also no option to add an element displayed on a site to the blacklist directly.

Verdict

ReqBlock blocks connections effectively in the Firefox web browser, but it lacks options that would make the whole process more convenient. It can still be useful to some users, mostly for blocking certain addresses or web resources permanently in Firefox.

Now You: What's your take on ReqBlock? Which add-on do you use to block resources?

Nice find Martin, thanks!

It could be a replacement for SilentBlock (https://addons.mozilla.org/en-US/firefox/addon/silentblock/?src=cb-dl-updated) if it supports regular expressions, but it is not even close to RequestPolicy Continued (https://addons.mozilla.org/en-US/firefox/addon/requestpolicy-continued/?src=cb-dl-updated).

Neither is a WebExtension, so that's another two handy add-ons Mozilla is assassinating. :(

It's kind of pointless because uBlock has this built right in.

As a RequestPolicy user of many years, I switched to uMatrix because it offers more granularity, and it's a damn good replacement.

uMatrix is far superior to this.

After playing around with it this morning, I have to say that, at this point in time, I really like ReqBlock. Since I use the advanced settings in uBO, I don't know how much that needs filtering will still get through, but I'll keep an eye on it for a few days to see how useful it will actually be for me.

To answer the question of why you would use this rather than just a hosts file, I have an observation from my own use of one. I've been maintaining and using a hosts file on ALL of my computers and mobile devices for around 10 years. Not an expert, just an enthusiast. As great as a hosts file is, it does have a couple of flaws. The entries have to be a host name, not a resource, and I don't know of any way to get around that. With ReqBlock you can block every single network request that has the word 'pagead' in it, or 'notification', or 'whatever'. You can't do that with a hosts file, at least not that I'm aware of.
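The difference the commenter describes can be illustrated with a short Python sketch: a hosts file only ever sees the host name being resolved, while a URL-level blocker sees the whole URL. The blocked host, the keywords and the URLs below are hypothetical, and the keyword rule assumes simple substring matching rather than ReqBlock's documented behavior.

```python
from urllib.parse import urlsplit

# Hypothetical hosts-file entries: a hosts file maps exact host names only.
HOSTS_BLOCKED = {"ads.example.net"}

# Hypothetical ReqBlock-style words, matched anywhere in the URL.
URL_BLACKLIST = ["pagead", "notification"]


def blocked_by_hosts(url: str) -> bool:
    # Only the host name is compared; the path and query are never seen,
    # and other subdomains would need entries of their own.
    return urlsplit(url).hostname in HOSTS_BLOCKED


def blocked_by_keyword(url: str) -> bool:
    # The whole URL is visible, so one word can match requests on any site.
    return any(word in url for word in URL_BLACKLIST)


for url in (
    "https://ads.example.net/banner.js",
    "https://news.example.com/static/pagead/loader.js",
    "https://cdn.ads.example.net/banner.js",
):
    print(url, blocked_by_hosts(url), blocked_by_keyword(url))
```

Only the hosts rule catches the first URL, only the keyword rule catches the second, and neither catches the third, because a hosts entry for ads.example.net does not cover cdn.ads.example.net.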

And maybe the biggest flaw with a hosts file is 'failed network requests'. Occasionally, when a webpage has a failed request, it will retry that request, and when that fails, it will retry again. I don't remember seeing more than three attempts for the same request in FF, but if, for instance, you have 6 different failed requests and each is attempted a total of 3 times, that WILL have an impact on page load times. I believe that in FF each attempt is limited to a quarter of a second before it times out. So, in my admittedly worst-case scenario, we are looking at 18 failed requests at a quarter second each. Holy excrement, Batman! Might as well take a nap! The last time I looked, the timeout was longer in Chromium browsers than in FF, but I seldom use the developer tools in a Chromium browser.

Over the years I have intentionally removed entries from my hosts files because failed network requests caused longer page load times; not often, but it happens. Basically, a hosts file blocks a network request AFTER the webpage/browser has made it, preventing it from leaving the system. With an ad/content blocker (uBO, ABP) and with ReqBlock, the request is never made by the browser. Hence faster page load times compared to a hosts file. Always.
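The worst case above works out as follows, assuming the commenter's estimates of three attempts per request and a quarter-second timeout per attempt, and assuming the attempts run one after another. Browsers issue many requests in parallel, so the delay actually added to a page load is probably smaller than this upper bound.

$$
6\ \text{requests} \times 3\ \text{attempts} = 18\ \text{failed attempts}, \qquad 18 \times 0.25\ \text{s} = 4.5\ \text{s}
$$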

With ReqBlock, I really like that you can fine-tune which items from an address get blocked. Take the BBC as an example:

/mybbc.files.bbci.co.uk/ /css/

or

/nav.files.bbci.co.uk/ /js/

I like that you can block some items from specific addresses without blocking everything. Those were just random examples that I tried after looking at the network requests.
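The article does not say how ReqBlock interprets a line with two space-separated parts, so this is only a hypothetical reading, chosen because it matches what the commenter describes (blocking the CSS from one BBC host without blocking everything from it): a line blocks a request only if every part appears in the URL. The rule syntax, function names and example file paths are all assumptions for illustration.

```python
from __future__ import annotations


def parse_rules(text: str) -> list[list[str]]:
    # Hypothetical format: each non-empty line is one rule made of
    # space-separated tokens.
    return [line.split() for line in text.splitlines() if line.strip()]


def matches(url: str, rule: list[str]) -> bool:
    # Assumed semantics: a rule matches only if ALL of its tokens occur in the URL.
    return all(token in url for token in rule)


def is_blocked(url: str, rules: list[list[str]]) -> bool:
    # A request is blocked if any single rule matches it.
    return any(matches(url, rule) for rule in rules)


rules = parse_rules("""
/mybbc.files.bbci.co.uk/ /css/
/nav.files.bbci.co.uk/ /js/
""")

print(is_blocked("https://mybbc.files.bbci.co.uk/css/main.css", rules))
print(is_blocked("https://mybbc.files.bbci.co.uk/img/logo.png", rules))
print(is_blocked("https://nav.files.bbci.co.uk/js/nav.js", rules))
```

Under this reading, the stylesheet and the script are blocked while the image from the same host still loads.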

Another example: earlier today I went to a tech blog that I know has been pushing an adblock warning overlay that you can click through. I was able to prevent the overlay by adding one word to the ReqBlock blacklist. After taking a look at the network tab in the developer tools, I made a semi-educated guess and found the culprit. Sorry, 'anonymous tech blog'!!! With uBO, it would have taken numerous entries to accomplish the same thing, given my limited knowledge. ;)

Here on Windows, I've never noticed the effect you interestingly mention concerning requests blocked by HOSTS.

I use a HOSTS file built by merging several others with the HostsMan application: 54,701 entries at this time. It's quite a well-known application. Funny how a product you haven't mentioned for years sometimes comes up several times in one day: I commented about this app this morning, but can't remember where!

Anyway, HostsMan or not, that is not the point.

Maybe using 0.0.0.0 rather than 127.0.0.1 is a better option, even though I can't remember any issues like the ones you mention when I used 127.0.0.1.

Also, disabling the Windows DNS Client service (dnscache), which is totally useless, is almost imperative, especially when the HOSTS file is large.

Finally, there is no need to keep the following entries that most HOSTS files include, in case they have any impact at all:

local

localhost

localhost.localdomain

broadcasthost
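HostsMan's own merge logic is not described here, so the following is only a minimal Python sketch of the kind of clean-up the commenters describe: merging several hosts files, rewriting every blocking entry to the 0.0.0.0 address suggested above, removing duplicates, and skipping the local, localhost, localhost.localdomain and broadcasthost names. The function name `merge_hosts` and the choice to drop every line that is not a 0.0.0.0/127.0.0.1 blocking entry are assumptions for illustration.

```python
from __future__ import annotations

# Names the commenter suggests leaving out of a merged blocklist.
SKIP_NAMES = {"local", "localhost", "localhost.localdomain", "broadcasthost"}

# Addresses treated as "blocking" entries in the source files.
REDIRECT_ADDRESSES = {"0.0.0.0", "127.0.0.1"}


def merge_hosts(*sources: str) -> list[str]:
    seen: set[str] = set()
    merged: list[str] = []
    for text in sources:
        for raw in text.splitlines():
            line = raw.split("#", 1)[0].strip()  # strip comments and whitespace
            if not line:
                continue
            fields = line.split()
            if fields[0] not in REDIRECT_ADDRESSES:
                continue  # assumption: ignore real mappings and IPv6 lines
            for name in fields[1:]:
                name = name.lower()  # host names are case-insensitive
                if name in SKIP_NAMES or name in seen:
                    continue
                seen.add(name)
                merged.append(f"0.0.0.0 {name}")
    return merged


first = "127.0.0.1 localhost\n127.0.0.1 ads.example.net\n0.0.0.0 tracker.example.org # tracker"
second = "0.0.0.0 ADS.example.net\n::1 localhost"
print("\n".join(merge_hosts(first, second)))
```

With these two hypothetical inputs, the result keeps one entry each for ads.example.net and tracker.example.org, both pointing at 0.0.0.0.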

HOSTS is an old trick (originally, a HOSTS file was meant to contain a host's real address in order to save the time of looking it up via DNS!), but it remains a good addition to a user's blocking arsenal, even if it is really an old approach.

O ye of little faith! LOL

"I've never noticed…" How often do you open the dev tools to see what a page is doing as far as network requests go? That's not meant as a slight, just curious. The reason I ask is that your hosts file is 3.5 times larger than the one I use, so I would be willing to bet that my earlier scenario would show up more often for you. I have, for the most part, removed all of the problem entries that cause repeated requests while also using a content blocker at the same time. If something gets past my content filters (EasyList, EasyPrivacy, malware, and so on), is caught by my hosts file and then causes longer page load times through repeated failed requests, I will almost always kick that entry to the curb. Especially on my desktop, I am used to very fast page load times; if a website's load time changes, I will notice. On my smartphone and tablet, Google Chrome is also very fast, and I rarely ever see an ad thanks to the hosts file.

I'm also a longtime user of HostsMan. I actually prefer an older version (3.2.73) to the newer one. My hosts file has been optimized, checked for duplicates, blah, blah. ;) 15,049 entries and 311 KB in size.

I had a hard time finding an example of the scenario I mentioned earlier because, I think, the bad actors have been removed from my file. After finding one, I saw more repeats of the same request than I remember seeing in the past, and the failed-request timeout is also longer. But I've almost always looked at the network requests while using my hosts file and a content blocker at the same time. I've included a screenshot for your viewing pleasure! It's not a nice milkshake, but I have to try to keep it acceptable for a G-rated audience. Check out the ib.adnxs[.]com entries. I verified that they were blocked by the hosts file. Screenshot taken while using ONLY the hosts file. Nuff said! ;)

https://s30.postimg.org/j8k9i60f5/Dev_Tools.png

It seems basic, tremendously basic, compared to uBlock Origin's similar but far more extensive and elaborate feature, not to mention all the other blocking capabilities that make uBlockO look like a blend of Adblock, NoScript and this ReqBlock.

Some users consider uBlockO complicated. I don't, but I do consider the more elaborate uMatrix slightly above my level, so I can understand those who fear uBlockO's complexity. We all know that more features combined with more customization always means more complexity: complex, not complicated.

Therefore, as I see it, ReqBlock can be an extra tool for Adblock users who wish to block not only what is downloaded when visiting a site but also, and above all, connections to external sources when these bring ads, trackers, malware or call in totally unnecessary crap. Here with uBlockO, I'm stunned to see pages render perfectly well with sometimes (almost) all external connections blocked. I even know several sites that run fine with their own domain's data and nevertheless call 10, 15, 20 external sources, almost always including the same ones from Google, Facebook, Twitter … all very nice perhaps, but less so when they spread all over the place like a visitor who jumps into dad's armchair after entering one's home through the back door: totally ill-mannered.

ReqBlock may be a good start.

OK, but why would a user want to block the address only in FF? Why not edit the hosts file?

Sorry, I don't understand!

The hosts file is useful for blocking whole domains, but not so useful for blocking things like "/pagead/", which blocks only requests with "/pagead/" in the URL, regardless of the site.

Also, blocking at the app level gives you portability between machines, e.g. I can take a portable FF on a USB stick and use it on client machines.

Why delete my comments?

But what if the CIA infiltrates a 1024-bit ultra atomic spy bit under the mouse pad? What then, eh?

Perhaps . . . so I block an address in an app that I take elsewhere for browsing, knowing that I don't ever want to visit that site (or maybe it's a collection of malware sites). And when I go to the other computer, the darned thing has a USB restriction on it, which makes both the portable version and the blocked addresses somewhat pointless exercises in the fallibility of thinking too much or not enough.

Mainly because a HOSTS file blocks whatever it contains regardless of the situation. You may wish to block Facebook when you're not on Facebook but still have it accessible otherwise. That way, the big fat F gets a door shut in its face when you go to the bedroom or to the lavatory (otherwise, as we know, it would follow you wherever you go).

Martin, thx for this tip. It sounds great. But how do I open the options page? I installed it on 3 different FF versions, but I don't see how to open the options page.

Great to have this tool available to block the steadily increasing firehose of shite that bad hipster developers throw into their websites without any consideration for optimization or efficiency. But really, the UI of this tool is unusable.

I don’t want to discourage the developer though as I’m sure the ‘front end’ can be improved and having the ‘back end’ in place is a great thing. Is it open source? Maybe I can help.

You need to load about:addons; there you will find the Options button listed next to the extension.

Thx, but I already did, and there is no Options button. FF 45.9 ESR, the same one I am writing to you with now. No other extension installed. A blank FF, so to speak.

I think you have to wait until you upgrade ESR to the next major version.