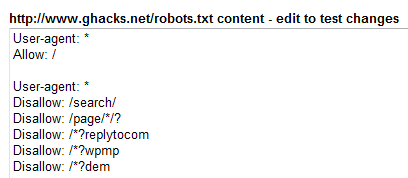

Check your robots.txt on Google's Webmaster Tools website.

Webmasters can use a robots.txt file to limit which parts of a website crawling bots may access. You can configure rules for individual bots or create rules that apply to all of them.

An improperly configured robots.txt file may shut bots out of your website entirely, which can have serious consequences for your website's visibility in search engines.

This can be quite devastating, especially for webmasters who earn their living from their websites. It can take up to two weeks until changes to the robots.txt file become visible, which is way too long if you make a mistake.
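To illustrate how small such a mistake can be, here is a minimal sketch that uses Python's standard `urllib.robotparser` module. The bot name `ExampleBot`, the URL and the helper `allowed` are assumptions made up for this illustration. The two rule sets differ only in the User-agent line, yet the second one blocks every bot instead of a single one.

```python
from urllib.robotparser import RobotFileParser

# Intended: block only one specific bot (ExampleBot is a hypothetical name).
INTENDED = """\
User-agent: ExampleBot
Disallow: /
"""

# Mistake: the same rule under "*" applies to every bot.
MISTAKE = """\
User-agent: *
Disallow: /
"""

# Hypothetical page used only for this illustration.
URL = "https://example.com/article/"


def allowed(rules: str, agent: str, url: str) -> bool:
    """Return True if `agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    print(allowed(INTENDED, "ExampleBot", URL))  # False: the targeted bot is blocked
    print(allowed(INTENDED, "Googlebot", URL))   # True: other bots are unaffected
    print(allowed(MISTAKE, "Googlebot", URL))    # False: every bot is shut out
```

Note that Python's parser only does simple prefix matching, so it is a rough local check and not a replacement for Google's own tool.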

One great way to check whether your robots.txt is valid and does exactly what you want it to do is to test it in real time on Google's Webmaster Central service. The first thing you have to do is create a free account and add your site to it.

Once that is done, you can access the various services on offer. One of them is the robots.txt analysis, which lets you check the robots.txt on your website. Google automatically retrieves the robots.txt from your website if one exists and adds the main URL to the list of URLs that you can check using the online tool.

Update: The feature is now located under Health > Blocked URLs in Webmaster Tools.

You may add new entries to the robots.txt and to the list of URLs that you want checked. This is important for two reasons.

- You can check if the current robots.txt file is blocking or permitting access to select pages on your website.

- You can test new robots.txt entries to make sure they have been set properly and only block pages that you want blocked from search engine bots.

It is also important to check several URLs and not only the main URL. Take ghacks, for example: all article pages follow a URL pattern that differs from that of the main page. To give you an example, I added the following robots.txt file and article pages. This is a sensible setup if you run a WordPress blog. If you run a different kind of website, you need to use a different robots.txt and different pages, of course.

robots.txt

User-agent: *

Disallow: /wp-

Disallow: /feed/

Disallow: /trackback/

Disallow: /rss/

Disallow: /comments/feed/

Disallow: /page/

Disallow: /date/

Disallow: /comments/

User-agent: Googlebot

Disallow: /*/feed/$

Disallow: /*/feed/rss/$

Disallow: /*/trackback/$

Disallow: /*?*

Disallow: /*?

# This is the ad bot for google

User-agent: Mediapartners-Google*

# Allow Everything

Allow: /*

Test URLs against this robots.txt file

https://www.ghacks.net/

https://www.ghacks.net/2007/05/20/support-ghacks/

https://www.ghacks.net/tag/

https://www.ghacks.net/category/

You may also add a second search engine bot that you want to test your new setup against; AdSense or Google Mobile come to mind. Clicking on Check shows what would happen if Googlebot tried to crawl the listed URLs.

Allowed means that Googlebot, or the bot in question, is allowed to visit the page, while Blocked means the opposite. If the results are not to your satisfaction, you can easily edit the robots.txt and check again until they are.

Once they are, copy the new robots.txt file and paste it into the file that is stored on your web server.
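For a quick local sanity check before uploading the file, the same kind of test can be approximated with Python's standard `urllib.robotparser` module. This is only a rough sketch and not a substitute for Google's tool: the standard library parser does simple prefix matching and does not implement Googlebot's `*` and `$` wildcards, so only the `User-agent: *` group from above is used. The bot name `ExampleBot`, the helper `check_urls` and the extra feed URL are assumptions added for illustration.

```python
from urllib.robotparser import RobotFileParser

# The "User-agent: *" group from the article (plain path prefixes only).
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-
Disallow: /feed/
Disallow: /trackback/
Disallow: /rss/
Disallow: /comments/feed/
Disallow: /page/
Disallow: /date/
Disallow: /comments/
"""

TEST_URLS = [
    "https://www.ghacks.net/",
    "https://www.ghacks.net/2007/05/20/support-ghacks/",
    "https://www.ghacks.net/tag/",
    "https://www.ghacks.net/category/",
    "https://www.ghacks.net/feed/",  # extra URL, added here for illustration
]


def check_urls(robots_txt: str, user_agent: str, urls: list[str]) -> dict[str, str]:
    """Map each URL to "Allowed" or "Blocked" for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        url: "Allowed" if parser.can_fetch(user_agent, url) else "Blocked"
        for url in urls
    }


if __name__ == "__main__":
    # ExampleBot is a hypothetical bot that falls under the "*" group.
    for url, verdict in check_urls(ROBOTS_TXT, "ExampleBot", TEST_URLS).items():
        print(f"{verdict:8} {url}")
```

With these rules, the four URLs from the article come back as Allowed and the feed URL as Blocked.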

Verdict

It is important to test configuration changes before you apply them to a live website, as a configuration error can have serious consequences for the site in question.

Is Disallow: /feed/ a good choice?

It will block the feed address for search engines.

Why would you want your feed to be indexed?