Preserve web pages with the Wayback Machine

The Wayback Machine, part of the Internet Archive, is a massive web page archive that currently holds more than 279 billion copies of web pages.

This makes it an excellent option to look up pages that are no longer available at all, or have changed. You can head over to the Wayback Machine website directly to look up copies of web pages manually, or use browser extensions like Wayback Machine, No More 404s or Resurrect Pages instead.

What many Internet users may not know is that the Wayback Machine offers an option to add web pages to the archive.

This can be quite useful. Maybe you want to make sure that an article or page is preserved, so that you can access it in the future, or use it for citation, without having to worry that it is no longer available or altered.

While you can do the same by saving the page to your local system, it is difficult to prove that you have not altered the web page in any way during or after that operation. If you use the Wayback Machine instead, the copy is stored by an independent third party, which makes it far easier to show that you did not manipulate the web page in any way.

How to add pages to the Wayback Machine

It is rather easy to add a copy of a page to the Wayback Machine. Please note that this works only for pages that allow web crawlers. If a page blocks those, it is not possible to add it to the Wayback Machine's archive.

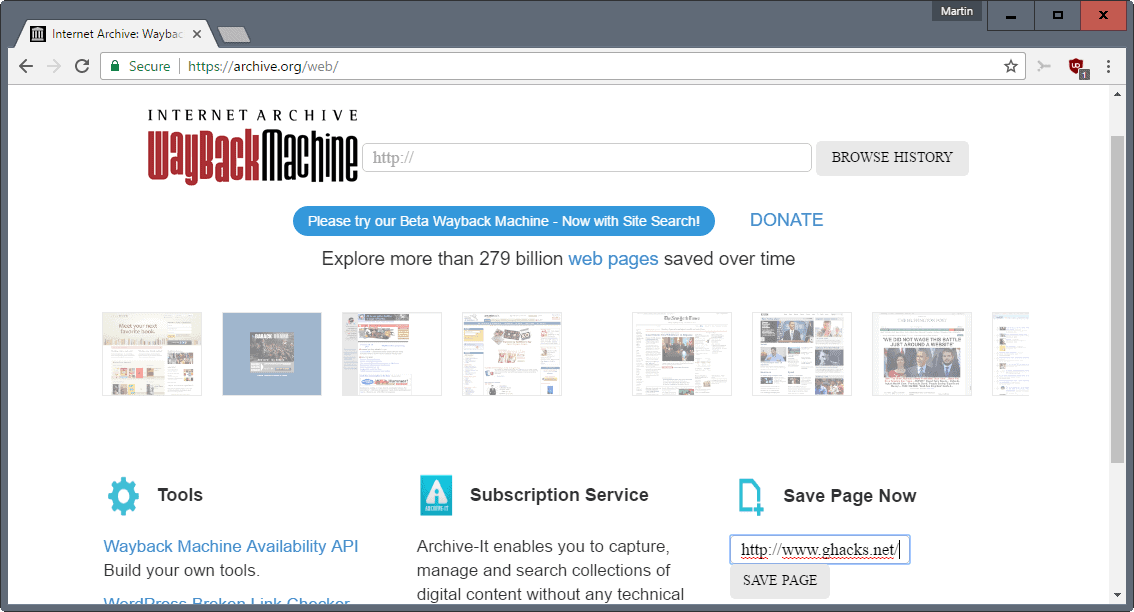

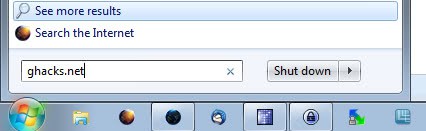

- Load https://archive.org/web/ in your web browser of choice. This works using desktop and mobile browsers.

- Locate the Save Page Now section on the page that opens.

- Type or paste a web URL into the form.

- Hit the Save Page button.

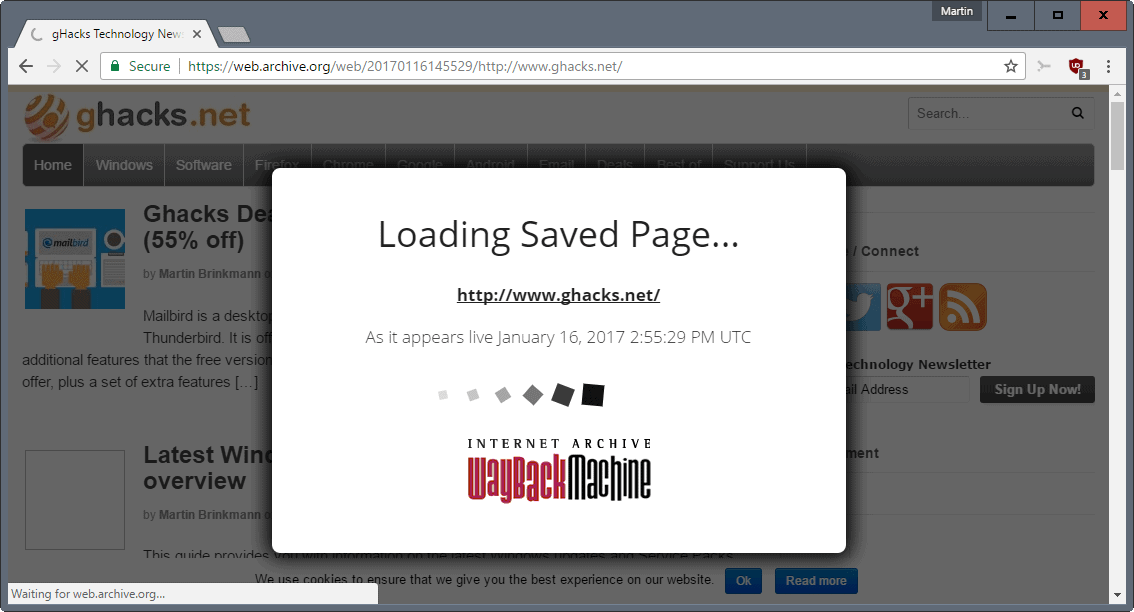

- The process to save the page to the archive is launched immediately.

The page is loaded, and a prompt is displayed on top of the page that returns status information to you. It should not take longer than a couple of seconds to save web pages.

The process may take longer if the server the web page is hosted on is under heavy load, or rejecting requests.

The service lists the URL at which the page is accessible from that moment onward. You can copy that link, for instance to bookmark it, or for sharing.

Tip: You can use the syntax https://web.archive.org/save/http://www.example.com/ to start the capturing process right away without having to use the form.

Make sure you change the "http://www.example.com/" part of the URL to the URL you want to save.
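The URL syntax from the tip can also be assembled in code. The following is a minimal sketch rather than anything the Wayback Machine provides: the function name `buildWaybackSaveUrl` is made up for illustration, and the check that only http and https addresses are accepted is an assumption on my part.

```javascript
// Hypothetical helper: builds the "save" URL described in the tip above.
function buildWaybackSaveUrl(pageUrl) {
  // new URL() throws a TypeError on malformed input, which catches typos early.
  const parsed = new URL(pageUrl);
  // Assumption: only regular web addresses are worth submitting.
  if (parsed.protocol !== 'http:' && parsed.protocol !== 'https:') {
    throw new Error('Only http and https URLs are supported: ' + pageUrl);
  }
  // The target URL is appended unchanged, matching the syntax from the tip.
  return 'https://web.archive.org/save/' + pageUrl;
}

console.log(buildWaybackSaveUrl('http://www.example.com/'));
// prints "https://web.archive.org/save/http://www.example.com/"
```

Opening the returned address in a browser should start the capture in the same way as submitting the form.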

An alternative is provided by archive.is which you may use for that purpose as well.

Now You: How do you preserve web pages?

The problem with The Wayback Machine is that they apply robots.txt RETROACTIVELY.

This means that if your domain’s registration expires and the name is later purchased by another party, who slaps a robots.txt on the root page which disallows everything, The Wayback Machine will happily delete all of the archived content for that site.

See https://archive.org/post/1019415/retroactive-robotstxt-removal-of-past-crawls-aka-oakland-archive-policy

Any already archived content is not really deleted, just hidden from view by robots.txt compliance.

Only illegal things are deleted (and sometimes non-illegal data archived around the same time goes off-line too, since the archived material is (or was, if things have changed) stored in 100 MB zip files by archive.org).

IMO, anything [legal] that was originally allowed to be archived should remain permanently available even if newer stuff is blocked by a robots.txt file change…

===

Some wayback bookmarklets (scroll to May 16):

http://www.bookmarklets.com/tools/new.html

Above page backup (zipped .mht file):

https://web.archive.org/web/aspnetmht.com/getmht.aspx?mht=www.bookmarklets.com_201701210734.zip

I’ve already opened the Wayback Machine but always for searching/consulting an archive, never for getting a page archived, I didn’t even know this was possible with the Wayback Machine! For this I saved from time to time a page with Archive.is, the other archiver mentioned in the article.

Quoting the article, “Tip: You can use the syntax https://web.archive.org/save/http://www.example.com/ to start the capturing process right away without having to use the form.”

Great. Hence a bookmarklet for getting things even snappier (opens the “archiver” in a new tab) :

Archive page with WebArchive.org

javascript:void(open('https://web.archive.org/save/'+location.href))

Archive page with Archive.is

javascript:void(open('https://archive.is/?run=1&url='+location.href))

Both scripts run fine but, above all, both sites archive well. I saw on web.archive.org that this very Ghacks page had been archived 8 times since yesterday: it looks like several of us tried archiving the page we were on, which is logical :)

Glad it gets a mention, even if it doesn’t store pictures.

I’ve archived at Wayback Machine things that may disappear.

These days I use the ErrorZilla Plus add-on, which offers the Wayback Machine among other options, like Google Cache, if you get a 404. Invaluable.

I used HTTrack Website Copier long ago, but now I save using the Mozilla Archive Format addon, giving an mht file rather than messy html. It also saves the URL at the top which is handy.

If I just want the text I use TidyRead addon to convert the page and save it as an mht file. It does mean disabling compatibility checks though.

The old rule I learnt is there’s only a backup if there’s more than one copy.

I use the ‘Mozilla Archive Format’ Firefox add-on as well for quick local page backups. Most of the time the saved page is not faithful in its display, since I compact it with printfriendly.com, which does a fantastic job when the aim is to save only the content as a PDF file. Otherwise I run Firefox’s ‘ScrapBook X’ to save a paragraph or a whole page (editable) quickly.

Whatever the tool or add-on used to save a page, none of them provides a legal framework should the user wish to present a backed-up page as evidence. I know there is/was a Firefox add-on claiming to save a page within a notary-signed frame.

I do wonder if pages saved on archive.is or on web.archive.org may be considered as legal evidence. Does anyone know?

I use Chrome 56 beta to print a desired web page to a PDF. I use Adobe Acrobat Reader DC to read it in the future. Links in that PDF are clickable as well if you give permission to do so. The PDF is a bit more than twice the size of the HTML if saved, but I like saving it in PDF format for personal reasons.

4 helpful JavaScript bookmarklets for viewing past Wayback archives and saving websites to the Wayback archive. Credit goes to ghacks user Lumpy Gravy for helping out with these:

Opens First Wayback Archive In New Tab – javascript:(function(){window.open('http://web.archive.org/web/20080706114831/'+location.href);})();

Opens Year of Most Recent Wayback Archives In New Tab – javascript:void(window.open('https://web.archive.org/web/*/'+location.href));

Opens Year of Most Recent Wayback Archives In Same Tab – javascript:location.href='https://web.archive.org/web/*/'+document.location.href;

Saves Currently Viewed Website on Wayback Archive In New Tab – javascript:void(window.open('https://web.archive.org/save/'+location.href))

More info here – https://en.wikipedia.org/wiki/Help:Using_the_Wayback_Machine#JavaScript_bookmarklet

2 Bonus Javascript Bookmarklets, i got 1 from ghacks user Tom Hawack, credit to him. Both these following 2 will only work if that website has HTTPS available.

Makes Current URL HTTPS – javascript:((function(){window.location=location.href.replace(/^http/i,"https").replace(/^http\w{2,}/i,"http");})()); – Clicking it again will make it go back to HTTP

Makes Current URL HTTP – javascript:((function(){window.location=location.href.replace(/^https/i,"http").replace(/^http\w{2,}/i,"http");})());

Also, you have to have JavaScript enabled for all of these to work, and certain add-ons such as NoScript and Redirector might give them trouble. i guess they’ll work in all the other major browsers, though i have never tested them there; i’m a Firefox user.

i’d Love to see you do an article on these Javascript Bookmarklets Martin, that way i can find out about new ones, users can always post new ones that we might not know about in the comments section. i’ve been meaning to search for new ones but you have to be careful.

Now to find JavaScript bookmarklets, if they exist, for Archive.is

@Rick A., nice. There’s also a single javascript for toggling a site from http to https :

javascript:((function(){window.location=location.href.replace(/^http/i,"https").replace(/^http\w{2,}/i,"http");})());

I agree, bookmarklets are little gems. I use about two dozen, really handy.
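The two chained regular expressions in the toggle above take a moment to decode: the first turns "http" into "https" (and "https" into "httpss"), the second collapses "httpss" back to "http". A more explicit way to write the same idea, as a rough sketch with a made-up function name (`toggleScheme`), could look like this:

```javascript
// Hypothetical helper: returns the same address with http and https swapped.
function toggleScheme(href) {
  const url = new URL(href);
  if (url.protocol === 'http:') {
    url.protocol = 'https:';
  } else if (url.protocol === 'https:') {
    url.protocol = 'http:';
  }
  // Any other scheme (ftp:, file:, ...) is returned as parsed, unchanged.
  return url.href;
}

console.log(toggleScheme('http://www.example.com/page?x=1'));
// prints "https://www.example.com/page?x=1"
console.log(toggleScheme('https://www.example.com/page?x=1'));
// prints "http://www.example.com/page?x=1"
```

Inside a bookmarklet, the result would be assigned to `location.href`. As with the originals, the switch to HTTPS only helps if the site actually serves HTTPS.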

Wow, Rick!

thanks, will try these out…

No problem trek100. i was about to reply to your comment to tell you to scroll down to my comment but i refreshed the page first and saw you had already seen it. Hope they’re helpful. i Love these Javascript Bookmarklets. They’re helpful and can also take away the need for add-ons, which is a good thing.

Saves Currently Viewed Website to Archive.is In New Tab – javascript:void(open('https://archive.today/?run=1&url='+encodeURIComponent(document.location)))
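Unlike the earlier Archive.is bookmarklet, this one wraps the address in `encodeURIComponent`. That matters because the page address travels inside a query parameter: if it contains its own `&` or `?`, an unencoded address can be cut off or misread. A small sketch of the difference, using a made-up helper name (`buildArchiveTodayUrl`) and the `run` and `url` parameters exactly as they appear in the bookmarklet:

```javascript
// Hypothetical helper: mirrors the bookmarklet above for any address.
function buildArchiveTodayUrl(pageUrl) {
  // encodeURIComponent escapes ":", "/", "?", "&" and "=" so the whole
  // address survives as a single query parameter value.
  return 'https://archive.today/?run=1&url=' + encodeURIComponent(pageUrl);
}

const target = 'https://www.example.com/search?q=a&b=2';
console.log(buildArchiveTodayUrl(target));
// prints "https://archive.today/?run=1&url=https%3A%2F%2Fwww.example.com%2Fsearch%3Fq%3Da%26b%3D2"
```

Without the encoding, the `&b=2` part of the example address would be read as a separate parameter of the archive request rather than as part of the page to save.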

@yogesh – Sorry yogesh but i never got a notification for your reply, but i have not tested them on other browsers as i’m a Firefox user but they should work as long as Javascript is enabled. Also, on Firefox just highlight the exact text and drag it to the bookmark bar and the bookmarklet is made. i don’t know if Google allows that on Chrome because Google is a funny company. Every browser can be different.

Makes Current URL HTTPS – Not working on Google

Any JS *** Bookmarklet *** (in FF or Pale Moon) to just click to save the currently displayed page in the browser to the Wayback Machine?

That would be nice and easy…

Web pages aren’t the only thing you can save/retrieve. If you have a direct link to a file (for instance, software hosted on the developer’s own domain) you can archive or search that, allowing you to download a copy even when the site has shut down or moved!

There is a great WebExtension called “Save To The Wayback Machine” for Chrome-based browsers ( https://chrome.google.com/webstore/detail/save-to-the-wayback-machi/eebpioaailbjojmdbmlpomfgijnlcemk?utm_source=chrome-app-launcher-info-dialog ). It can not only assist you in archiving the current page (two mouse-clicks required, one on the extension, one on the “Archive Page” button), but also tell you when the current page was last archived, plus it will provide links to the most recent archived version or the whole version tree with only two clicks as well.

I’m not aware of there being a comparable extension for Firefox and haven’t tested this WebExtension on Firefox either.

Have known about this site for at least 10+ years. It has helped immensely when a client has damaged a webpage themselves or even forgot to pay continued hosting fees and lost the domain and site completely.

A problem with using Wayback for recovery is that it adds its header to every page, which must be removed, and of course there is the issue of not every page being indexed, i.e. private pages or password-protected content not being available. I’ve used HTTrack Website Copier successfully by prepending the full Wayback Machine address to the site I wanted to recover and, although thankful to get some of the content back, there still remains tons of work to do after recovery.

“A problem with using Wayback for recovery is it appends its header to every page which must be removed”

Add “id_”, i.e. append it to the timestamp digits in the archive URL:

Original date:

https://web.archive.org/web/20170121155209/https://stevesouders.com/mobileperf/pageresources.js

Original date with id_ appended:

https://web.archive.org/web/20170121155209id_/https://stevesouders.com/mobileperf/pageresources.js

Partial dates, as in the following examples, give a save within the given year/month/day etc:

https://web.archive.org/web/20170121id_/https://stevesouders.com/mobileperf/pageresources.js

https://web.archive.org/web/2017id_/https://stevesouders.com/mobileperf/pageresources.js

“2id_”: Latest save (no matter if the save is a good save or not):

https://web.archive.org/web/2id_/https://stevesouders.com/mobileperf/pageresources.js

“1id_”: First save (no matter if the save is a good save or not):

https://web.archive.org/web/1id_/https://stevesouders.com/mobileperf/pageresources.js

Some web page examples:

“1id_”:

“http://web.archive.org/web/1id_/https://www.example.org” as per following examples, redirects to the first save (no matter if it’s a good or bad save):

“2id_”:

“http://web.archive.org/web/2id_/https://www.example.org/” redirects to the latest save (no matter if it’s a good or bad save):

http://web.archive.org/web/2id_/https://stevesouders.com/mobileperf/pageresources.php

With a partial date + “id_” as below:

http://web.archive.org/web/2017012116id_/https://stevesouders.com/mobileperf/pageresources.php

or

http://web.archive.org/web/20170121id_/https://stevesouders.com/mobileperf/pageresources.php

or

http://web.archive.org/web/2017id_/https://stevesouders.com/mobileperf/pageresources.php

redirects as something like:

http://web.archive.org/web/20170121161807id_/https://stevesouders.com/mobileperf/pageresources.php
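The pattern in the examples above is regular enough to generate. Here is a minimal sketch, assuming the timestamp is passed as a string of 1 to 14 digits (a full YYYYMMDDhhmmss value, a partial date, or the "1" and "2" shortcuts described above); the function name `buildRawCaptureUrl` is made up for illustration and the digit check is my own assumption, not a documented rule.

```javascript
// Hypothetical helper: builds an archive URL with "id_" after the timestamp,
// which, per the comment above, returns the capture without the Wayback header.
function buildRawCaptureUrl(timestamp, pageUrl) {
  // Assumption: timestamps are 1 to 14 digits (partial dates are allowed).
  if (!/^\d{1,14}$/.test(timestamp)) {
    throw new Error('Timestamp must be 1 to 14 digits: ' + timestamp);
  }
  return 'https://web.archive.org/web/' + timestamp + 'id_/' + pageUrl;
}

const page = 'https://stevesouders.com/mobileperf/pageresources.js';
console.log(buildRawCaptureUrl('20170121155209', page));
// prints "https://web.archive.org/web/20170121155209id_/https://stevesouders.com/mobileperf/pageresources.js"
console.log(buildRawCaptureUrl('2017', page));
// prints "https://web.archive.org/web/2017id_/https://stevesouders.com/mobileperf/pageresources.js"
```

The timestamp is kept as a string on purpose, so that partial dates are passed through exactly as typed.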