Why We Need Technology Transparency Info for Websites

For over a decade now we've had Secure Sockets Layer (SSL) encryption technology to make Internet transactions safe. With only a very few exceptions, including a certificate cloning scare a couple of years ago, it has worked very well and has enabled millions of people to make purchases and carry out financial transactions online.

Last week, however, thousands of websites running Microsoft SQL Server 2003 and 2005 were hit by cybercriminals with an attack designed to circumvent their security. The attack injected code into the servers so that every subsequent visitor was greeted by a message claiming that their computer had been infected with hundreds of viruses.

This, of course, wasn't true. It was a ruse to trick people into paying for a downloadable "cleaner" that claimed to fix the virus problem but was really a trojan that installed botnet software, keyloggers and more onto their PC. Worse, by paying for this software, victims handed the criminals their credit card details... or more!

According to some reports, this attack could have compromised 28,000 websites. That is frightening news, especially for those of us with personal data held by web companies A, B and C.

This brings me back to SSL. For over a decade, whenever we shop online, our web browsers have been able to tell us whether the information we send is being encrypted, and whether the website is deemed safe for financial transactions or for the exchange of personal data.

On top of that, companies including Microsoft and Google maintain blacklists of unsafe websites, shared between them and anti-virus companies, which warn us about malware-ridden websites by turning our browsers red.

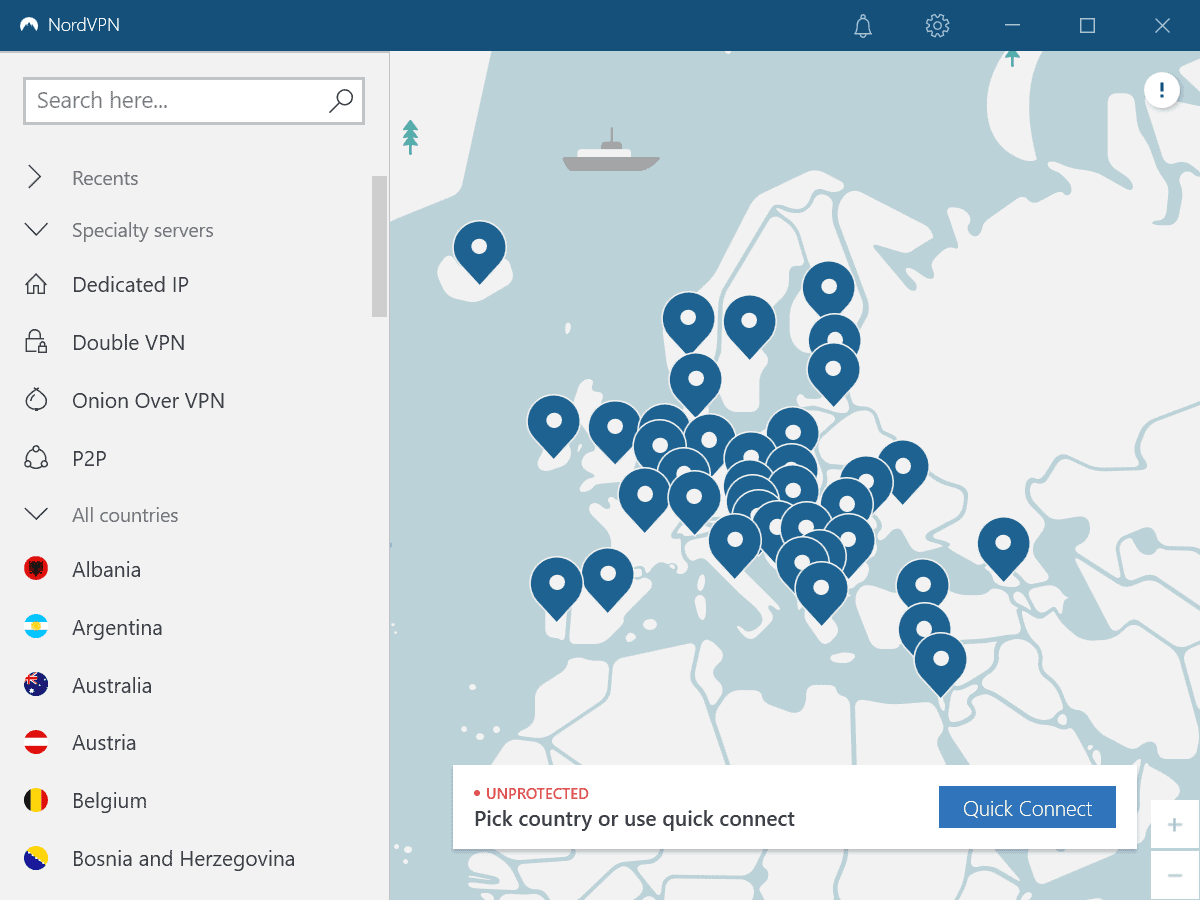

What we don't have are warnings about how secure the underlying technology of a website is, and whether we can trust it.

Nor is there any reason why this would be hard to do. All that would be needed is a file on the server (probably stored alongside the SSL certificate), encrypted and certified by a third party, that the browser could read; after all, this is tried and tested technology. The file would contain information about the hosting on that computer: which operating system version it runs and which versions of other technologies it uses.

In the case outlined above, a system such as this would have warned visitors that the sites they were visiting, and trusting with their personal information, were using older technologies that could be vulnerable to attack even when properly patched.
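To make the proposal a little more concrete, here is a minimal Python sketch of how a browser (or a browser plug-in) might turn such a file into a simple traffic-light style rating. It assumes a hypothetical JSON manifest format and a hypothetical `POLICY` table of outdated and current versions that some trusted third party would maintain; every field name and version entry below is invented for illustration, and the encryption and third-party certification of the file are left out entirely.

```python
import json
from enum import IntEnum

# Hypothetical manifest a server might publish. The format and field names are
# illustrative assumptions, not an existing standard.
EXAMPLE_MANIFEST = """
{
  "host": "shop.example.com",
  "components": [
    {"product": "Windows Server", "version": "2003"},
    {"product": "SQL Server", "version": "2005"}
  ]
}
"""

# Hypothetical policy table, assumed to be maintained by a trusted third party.
# Example values only; not a statement of any product's actual support status.
POLICY = {
    "Windows Server": {"outdated": {"2003"}, "current": {"2008 R2"}},
    "SQL Server": {"outdated": {"2000", "2005"}, "current": {"2008 R2"}},
}


class Light(IntEnum):
    """Traffic-light rating; a higher value means a worse rating."""
    GREEN = 0
    AMBER = 1
    RED = 2


def rate_component(product: str, version: str) -> Light:
    """Rate a single component of the server's technology stack."""
    rules = POLICY.get(product)
    if rules is None:
        return Light.AMBER  # unknown product: nobody can vouch for it
    if version in rules["outdated"]:
        return Light.RED
    if version in rules["current"]:
        return Light.GREEN
    return Light.AMBER  # version listed as neither outdated nor current


def rate_site(manifest_json: str) -> Light:
    """Rate a whole site as the worst rating among its components."""
    manifest = json.loads(manifest_json)
    components = manifest.get("components", [])
    if not components:
        return Light.AMBER  # nothing disclosed, so no reassurance either
    return max(rate_component(c["product"], c["version"]) for c in components)


if __name__ == "__main__":
    print(rate_site(EXAMPLE_MANIFEST).name)
```

Taking the worst component's rating as the site's overall rating means a single outdated piece of the stack is enough to turn the light red, which is exactly the kind of warning that visitors to the sites hit by this attack never received.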

Indeed, many people who already know about such things might choose to steer clear of all servers running Windows in favour of those running Linux and MySQL.

It truly amazes me that we don't already have a system such as this, but I'm even more stunned that so many companies and hosting firms run their websites on technologies that are almost a decade old. So come on, people: agree a standard by which, within a small margin of error, we can see a traffic light showing how secure our personal information will be on a website before we hand it over.