Google is testing a Never Slow Mode feature in Chrome

A new commit on the Chromium development site suggests that Google is testing a new feature for Chrome called Never Slow Mode designed to speed up the loading of webpages.

Websites have grown in size significantly over the years. A KeyCDN analysis found that the average webpage size increased from about 700 Kilobytes in 2010 to 2300 Kilobytes in 2016.

Internet speeds, on the other hand, have not increased nearly as much in many regions over that time, and the same is true for computing resources; the result is longer loading and processing times.

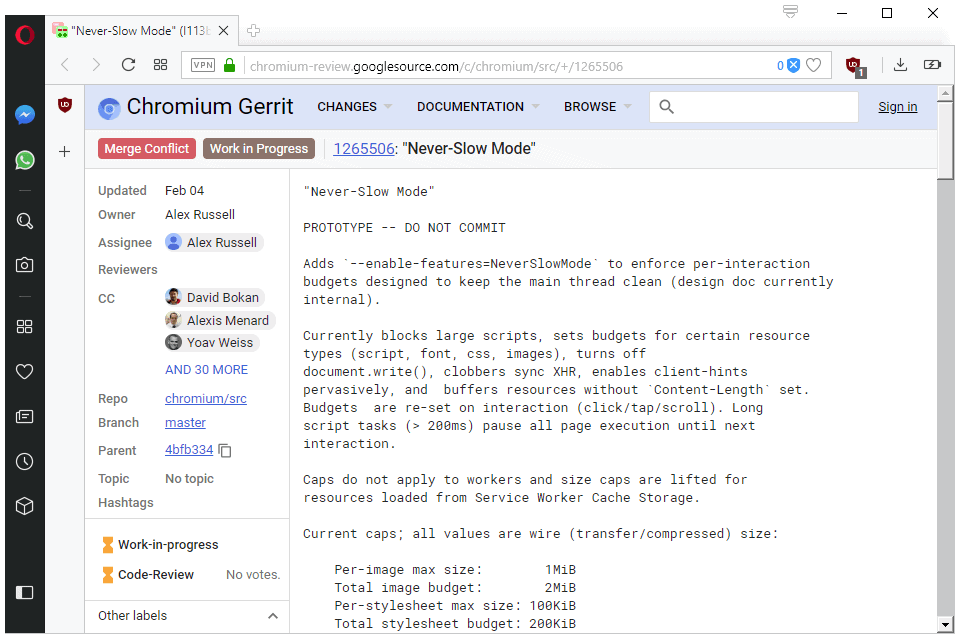

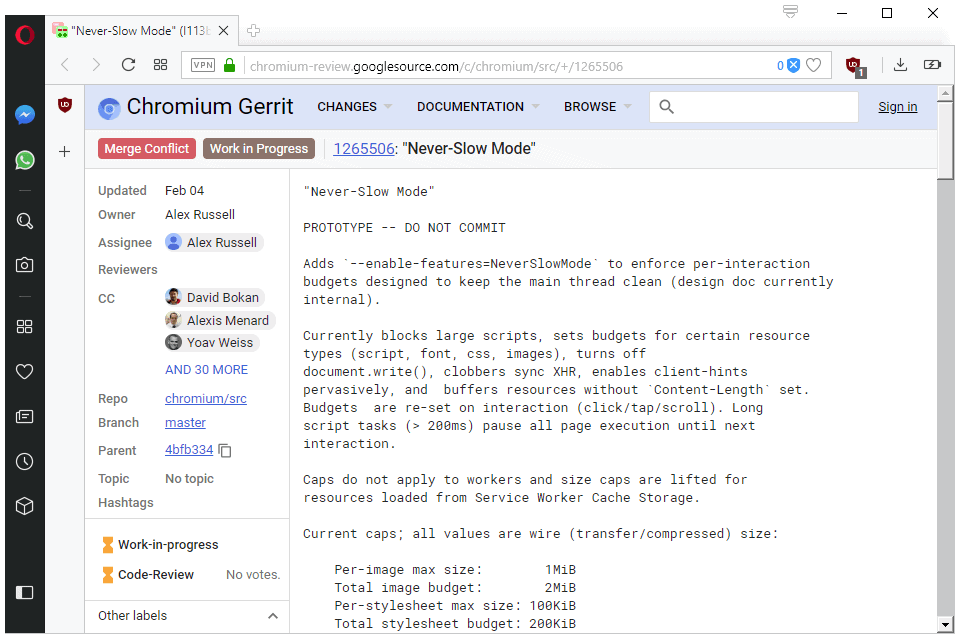

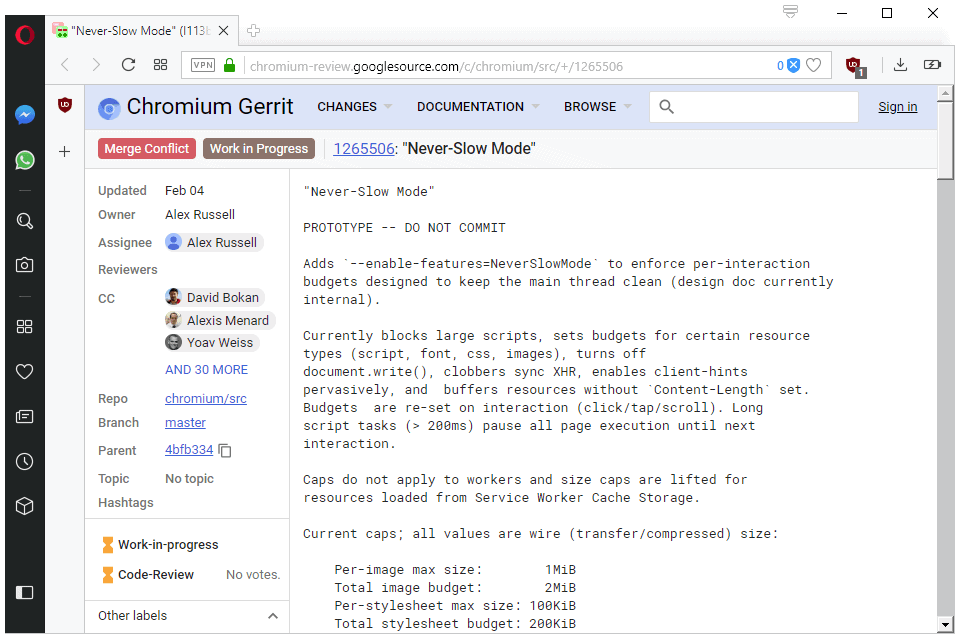

Google recently published prototype code on the Chromium development site that addresses part of that problem. The main idea behind Never Slow Mode is to introduce budgets for certain types of resources.

Currently blocks large scripts, sets budgets for certain resource types (script, font, css, images), turns off document.write(), clobbers sync XHR, enables client-hints pervasively, and buffers resources without `Content-Length` set. Budgets are re-set on interaction (click/tap/scroll). Long script tasks (> 200ms) pause all page execution until next interaction.

Values tested right now include limits for stylesheets, images, scripts, and fonts. Stylesheets for instance are limited to a size of 100 Kilobytes and images to a total image budget of 2 Megabytes.

Resources that exceed the budget are blocked by the browser. Google notes that some resource types, e.g. Service Workers, are not restricted, and that the size limits apply to the compressed state of resources.
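The budget idea described above can be sketched in a few lines of Python. This is only an illustration, not Chromium's implementation: the class and function names are hypothetical, only the 100 Kilobyte stylesheet limit and the 2 Megabyte total image budget come from the values mentioned in this article, and treating a Kilobyte as 1024 bytes is an assumption.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional

KB = 1024          # assumption: the article does not say whether 1000 or 1024 is meant
MB = 1024 * KB


@dataclass
class Budget:
    per_resource_limit: Optional[int] = None  # max compressed size of a single resource
    total_limit: Optional[int] = None         # max compressed bytes until the next reset


# Only these two figures are taken from the article; other types are left without a budget.
BUDGETS: Dict[str, Budget] = {
    "stylesheet": Budget(per_resource_limit=100 * KB),
    "image": Budget(total_limit=2 * MB),
}

# Resource types that are not restricted, e.g. Service Workers.
EXEMPT = {"service_worker"}


@dataclass
class BudgetTracker:
    budgets: Dict[str, Budget]
    used: Dict[str, int] = field(default_factory=dict)

    def allow(self, resource_type: str, compressed_size: int) -> bool:
        """Return True if the resource fits the budget, False if it would be blocked."""
        if resource_type in EXEMPT:
            return True
        budget = self.budgets.get(resource_type)
        if budget is None:
            return True  # no budget defined for this type in this sketch
        if budget.per_resource_limit is not None and compressed_size > budget.per_resource_limit:
            return False
        spent = self.used.get(resource_type, 0)
        if budget.total_limit is not None and spent + compressed_size > budget.total_limit:
            return False
        self.used[resource_type] = spent + compressed_size
        return True

    def reset(self) -> None:
        """Budgets are re-set on interaction (click/tap/scroll)."""
        self.used.clear()


if __name__ == "__main__":
    tracker = BudgetTracker(BUDGETS)
    print(tracker.allow("stylesheet", 150 * KB))  # over the per-stylesheet limit
    print(tracker.allow("image", 1 * MB))         # fits the total image budget
```

Sizes are passed in as compressed sizes because the limits apply to the compressed state of resources. Whether a blocked resource is retried after a reset is left open here, just as it is in the prototype's description.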

Dinsan Francis found the description of the experimental flag in the code. It is called Enable Never-Slow Mode:

Enables an experimental browsing mode that restricts resource loading and runtime processing to deliver a consistently fast experience. WARNING: may silently break content!

Google warns that the feature may break sites as content is blocked. There is also the --enable-features=NeverSlowMode startup parameter to enable the feature in Chrome. Neither the flag nor the startup parameter works at the time of writing.
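For readers who want to try the startup parameter once it becomes functional, the following Python sketch shows how the command line could be assembled. The binary name `google-chrome` and the helper `build_chrome_command` are assumptions made for illustration; only the `--enable-features=NeverSlowMode` switch comes from the article, and it may have no effect in current builds.

```python
import shlex
from typing import List

# Switch quoted in the article; it may not do anything in current builds.
NEVER_SLOW_FLAG = "--enable-features=NeverSlowMode"


def build_chrome_command(binary: str, url: str) -> List[str]:
    """Return an argv list that starts Chrome with the Never Slow Mode switch."""
    return [binary, NEVER_SLOW_FLAG, url]


if __name__ == "__main__":
    # "google-chrome" is a hypothetical binary name; adjust it for your system.
    cmd = build_chrome_command("google-chrome", "https://example.com/")
    # Print the command instead of running it; subprocess.run(cmd) would launch it.
    print(shlex.join(cmd))
```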

It is unclear, at this point, if blocked content will be loaded when resources are available again or blocked for good. Blocking scripts, images, and other content types could certainly break a lot of websites. It will be interesting to see how Google plans to address that.

Now You: Would you like to see something like this implemented?

For “normal” websites that might work. But there are special cases, like dedicated internal services, that have to go over that limit for good reasons (e.g. a web-based newspaper creation helper with a mockup some 40 pages long is bound to exceed those limits). If I read it correctly, it’s 2 MB for ALL of the images together. In those special cases we need something like 5-10x that (e.g. for all the ad previews on the mockup).

So my opinion it should not be ever on by default. Or it should never be implemented at all.

@Newspaper

The really important question is how the function will change during development and how many fringe use cases it will block.

I bet that if it ever becomes the default, you will not notice any negative impact, as Chrome devs are very smart.

@Newspaper IT:

That sounds like an inappropriate use case for a web-based application to me.

“So my opinion it should not be ever on by default.”

Why? Defaults should cover the most likely usage. The usage you’re describing is an edge case, and should not be relevant to what the default setting should be. As long as you can turn the limitation off, then making the default on would be correct for more users than the other way around.

Looks like a great way to break 99% of sites.

Seems like a good idea if it makes videos play at something like the resolution selected. Decades later, vid playback still isn’t predictable. I can play 4k vids online sometimes but they’re clearly not streaming at 4k resolution. Even 720 vids are hit and miss with a 150 Mbps cable connection.

If the past is an indicator, as Internet speeds improve, ads and stupid page animations will devour more bandwidth while the elements that really need speed will continue to suffer. Sometimes I click a link and, through all the clutter, can barely find the subject on the page it opens. Limiting the size of page embellishments is a great idea.

What slows down the internet is the ads coming from 20 different sources that are not on the page you want to load.

Entire websites should be hosted in single locations. Let the hosts do all the background work and leave only the browsing to the users.

This would also increase security as the host would be responsible for every 1 and 0 on their site instead of blaming malvertising on 3rd parties.

Fascinating, as Google’s own websites are among the slowest on the web nowadays.

lol the irony.. I wonder if users can use the slow mode on the Google sites too

Yep, the current incarnations of gmail and youtube are atrociously slow

Whatever the browser and without striving to wonder what a never slow mode may/should include, the simple fact of using a tool which simultaneously blocks advertisements, trackers and unnecessary 3rd-party downloads does the job nicely. Such tools exist and of course ‘uBlock Origin’ is the leader of that band.

If Google’s aim is to put valuable data on a diet in order to make ads feel less constipating then they’re on the right path.

I think it’s a good idea, there’s far too much bloat these days. However, those limits aren’t exactly low, so I’m not sure if it will make much difference. Style sheets are limited to 200 KB in total (not 100); it doesn’t say in the screenshot what the JavaScript limit is, and that’s probably the big thing. The lazy these days always use frameworks for no good reason, which tend to be huge (although not all are).

Is it available anywhere? (Canary, for instance)

I’m not a programmer or developer, but reading the words “new commit” suggests that it just appeared as a concept or something. I bet it will take some months before it appears on Canary or Chromium builds.

@Weilan:

A “new commit” means that new code was added to the codebase.

I don’t think so.

“Blocking scripts, images, and other content types could certainly break a lot of websites. It will be interesting to see how Google plans to address that”

Is this breaking something that isn’t broken? I wonder what the motive for this is…

I like the idea. Maybe this will drive web developers to stop creating 10 MB monstrosities of pages just so they can inundate me in crappy animations heating up my laptop for no reason. In the end everyone will benefit from this.

Your comment reminds me there used to be a superb XUL addon called *Toggle animated GIFs*, which could control the display of GIFs granularly.

Its WebExtension alternative:

https://addons.mozilla.org/en-US/firefox/addon/toggleanigif/

Not as powerful as the old one. Sad.