ChatGPT gets schooled by Princeton University

At this point, we’re all at least a little familiar with ChatGPT, the large language model developed by OpenAI to increase productivity and provide a little laugh every now and then. What you may not know is that people are using this language model to cheat on college assignments and work projects. No judgement: this is an incredible tool, and we may be looking at the future of productivity and information transmission. However, a student at Princeton University has decided to take ChatGPT to task by creating his own tool.

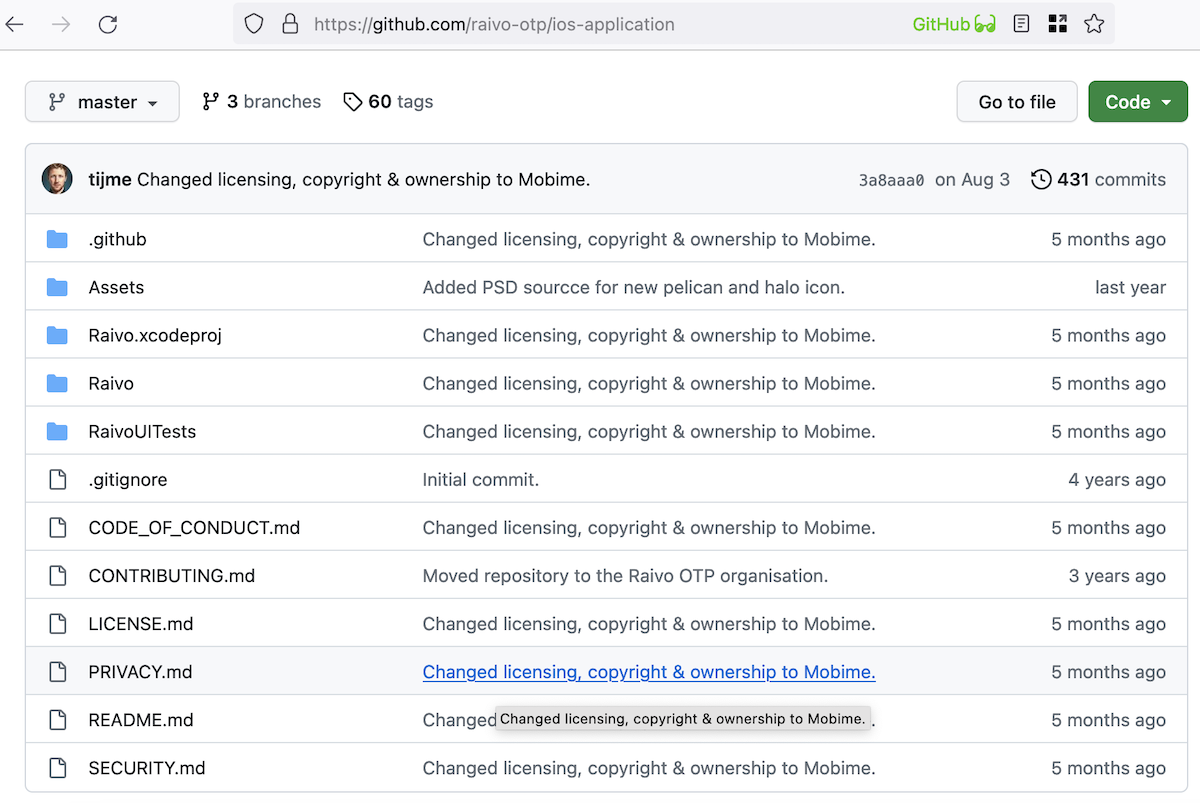

The new utility is called GPTZero, and it was created by Princeton student Edward Tian. Tian has already posted numerous proof-of-concept videos that seek to demonstrate the capabilities of this new tech. In the first demonstration, GPTZero determined that a particular article in The New Yorker was written by a human. It then took its skills to LinkedIn, where it verified that a particular post had been created with ChatGPT. Tian posted his findings regarding the LinkedIn post with a short caption: ‘here's a demo with @nandoodles's Linkedin post that used ChatGPT to successfully respond to Danish programmer David Hansson's opinions.’

According to Tian, he was motivated purely by academia and its usually infallible nature. He didn’t like the idea that students were using ChatGPT to commit what he termed ‘AI plagiarism.’ Tian elaborated on his concerns in a short tweet, saying he thought it was unlikely ‘that high school teachers would want students using ChatGPT to write their history essays.’

These are valid concerns that the creators of ChatGPT share. OpenAI is, at this moment, working on a watermark to instantly show whether or not something was generated using ChatGPT, but it isn’t ready yet.

We won’t know how effective Tian’s utility is until it’s properly tested in the field. The reality is that many of the utilities that claim to detect AI in written works simply do not work. I recently explored a few of these utilities myself, and the results were frankly shocking. I used two pieces of text: one that I had written myself, and one generated by ChatGPT. Running both texts through so-called AI detectors revealed that while these utilities picked out the AI text 100% of the time, they also flagged my own original work as written by AI.
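To make that result concrete, here is a minimal Python sketch that tallies the informal two-text test described above. It assumes a single detector and uses made-up labels (`"human"`, `"ai"`) and a hypothetical helper named `rate`; none of this comes from any real detector's API. It only illustrates why a 100% detection rate says little when the false positive rate is also 100%.

```python
# Hypothetical tally of the informal two-text test described above.
# Each entry is (true_author, detector_verdict); the labels are illustrative.
results = [
    ("human", "ai"),  # the author's own text, flagged as AI
    ("ai", "ai"),     # the ChatGPT text, flagged as AI
]


def rate(results, true_author, verdict):
    """Fraction of texts by `true_author` that received `verdict`."""
    relevant = [v for a, v in results if a == true_author]
    if not relevant:
        return None  # no samples of this kind, so the rate is undefined
    return sum(v == verdict for v in relevant) / len(relevant)


detection_rate = rate(results, "ai", "ai")          # AI text correctly flagged
false_positive_rate = rate(results, "human", "ai")  # human text wrongly flagged

print(f"detection rate: {detection_rate:.0%}")
print(f"false positive rate: {false_positive_rate:.0%}")
```

With only one sample of each kind, both rates come out at 100%, which is what a detector that labels everything as AI would also produce. A meaningful evaluation would need many more texts of both kinds.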

The problem is this: in this day and age, any written content posted to the internet has to conform to SEO standards. These search engine optimization standards are set up by algorithms to make works attractive to other algorithms. While university assignments aren’t in this same class of writing, humans are still taught every single day to write like computers. The more we have to implement SEO in our writing, the less ‘human’ it becomes.

In closing, we’re never going to be able to launch a flawless AI detector because we humans are programming ourselves and each other to write, act, and think like algorithms. The monsters that brilliant minds like Tian want to decimate are, unfortunately, humans.


I think this article was written by a ChatGPT bot.

@John G. you can’t imagine how good reading your comment makes me feel. Roots, nature, life, “Everything that trembles and pulsates” (as translated from the French “Tout ce qui tremble et palpite”) in French singer Jean Ferrat’s “Que c’est beau la vie” (“Life is so beautiful”).

> I spend a lot of hours outside looking for plants, flowers and trees.

I used to wander in the countryside, daydreaming. Reading you invites me to perpetuate those delightful moments.

> I touched months ago a tree of more than 200 years old. Then I asked myself how the world will be in 2223.

As I wandered in the countryside I had similar thoughts. Even if we have no answer the question itself allows one’s thoughts to bounce towards many topics. One of them is that there is no future without love, love of life, its diversity, its magnificence. Brotherhood, friendship.

Poetry on a foggy Sunday afternoon and suddenly colors seem to emerge :=) But I’m digressing!

@Tom Hawack +1000 :]

@Tom hi! Due to my studies I spend a lot of hours outside looking for plants, flowers and trees. I touched months ago a tree of more than 200 years old. Then I asked myself how the world will be in 2223. You are right, life is not only the web. In fact each day I am more sure that here in the web there are only words that people will forget in hours. We are just words and photographs that nobody will really care anymore. I think I will go to see that tree again next week. @Tom, always glad to read your comments!

I don’t quite understand this paragraph of the article :

“The problem is this; in this day and age, any written content posted to the internet has to conform to SEO standards. These search engine optimization standards are set up by algorithms to make works attractive to other algorithms. While university assignments aren’t in this same class of writing, humans are still taught every single day to write like computers. The more we have to implement SEO in our writing, the less ‘human’ it becomes.”

1) “[…] any written content posted to the internet has to conform to SEO standards […]”

Has to conform to achieve what result? I’ve searched with various search engines for extracts of comments I had posted in various blogs over the years and found them, be they written in French or in English, though they never conform to “SEO standards” (my non-style is so personal I tend to write as I speak, lol).

2) “[..] humans are still taught every single day to write like computers […]”

Does this mean that students are taught, systematically, not only to care for concision, clarity, grammar and vocabulary but also to fit all of that within SEO standards? In which universities, all of them? Where: USA, Canada, UK, Commonwealth? Not in France, I can tell you, nor in Switzerland nor in Belgium as far as I know. What academic culture is the article referring to?

Generalizations bother me. I tried to serialize a similar assertion, “We’re well past the time when schools actively taught critical thinking and problem-solving. Education is now focused on critical race theory and teaching students to be good test takers.”, in one of your previous articles, @Shaun [https://www.ghacks.net/ban-instituted-against-chatgpt-by-new-york-schools-amidst-cheating-fears/], but twice in a row starts to trigger a few brain cells :=) I appreciate the traditional “What, how, where, when” and find only the “what” and partially the “how”!

3) What exactly are SEO standards?

Tom, you’ll probably have to read between the lines of the article… Shaun was describing part of his job role as a Copywriter. For example, how he personally approaches the subject, and what values he applies when writing (marketing). Perhaps even his perception of the world; he seemed to complain about, yet also to embrace, the techniques he claims to despise. I basically suggested “Don’t be a Lemming” and don’t blindly follow pseudo-intellectual constructs, e.g. the computer GIGO.

There are no “SEO standards” whatsoever; I already mentioned that prior, it’s mostly mythical marketing and fanciful meaningless ‘spin words’. In contrast, there are actually Web Accessibility Laws: https://www.w3.org/WAI/policies/

If you hear phrases like “SEO standards” or things like “alt tags”, you know someone is probably trying to “pull the wool over someone’s eyes”. The “alt tag” doesn’t exist either; the term “alt tag” is another misuse and display of ignorance… Hyped by legions of so-called self-proclaimed “SEO ~~Experts~~ Charlatans”. An HTML ALT attribute may contain “alternative text” – I’m not going into details about markup. But what SEO suggests regarding markup is typically grand-scale “markup abuse” and poor practice. :-(

I’m respected regarding my knowledge on semantic HTML markup, and have judged several such related competitions on SitePoint.

Incidentally the posts I linked to in my previous post (see: January 8, 2023) when I asked the question “Do I pass as human?” were in fact wholly authored by myself. Fact: I’ve NEVER used AI to generate any post content.

Now, if you ‘blindly believed’ something brain-dead like, GPT-2 Output Detector [https://openai-openai-detector.hf.space/] it’d probably say [91% Fake], on my ‘first post’…

Test example (‘single post’ location): https://www.ghacks.net/2022/12/26/firefoxs-accessibility-performance-is-getting-a-huge-boost/#comment-4555692

The ‘bot’ fails for a simple reason: it cannot cope with the third paragraph, especially the URL I used: “[…] (jantrid.net/2022/12/22/Cache-the-World/ ‘nsCSSFrameConstructor.cpp on Searchfox’ results)? …”. Once the bracketed (jantrid.net/…) URL is removed, the AI swings towards [99% Real].

Reducing articles to algorithms, visit popularity and hits is short-sighted; a good content writer would have the courage to engage directly with their audience one-to-one. Martin does occasionally respond personally via [REPLY] to posts and constructive criticism (displaying a more valuable human touch).

> “3) What exactly are SEO standards?”

In a few words, “we will use your useful life data to sell you in the future useless things that you believed you would never need, and of course meanwhile you will swallow drop by drop our woke way of life to keep your brain thinking that your life was completely wrong, because only we have the truth and only we are your glorious saviors”. More or less. Probably less. :S

@John G., hi there!

SEO and marketing are likely tied. Your definition IMO applies to marketing. What is marketing within SEO leading to SEO (Search Engine Optimization) standards?

I’ve found this to define SEO itself at [https://www.seo-theory.com/seo-standards/what-they-are/] :

“Search Engine Optimization – Noun phrase.

1) The practice of analyzing search engine protocols, actions, resources, and guidelines for the purpose of improving Website compliance and performance in search results.

2) The practice of managing the relationship between a business or entity and the search environments to which it is exposed.”

From there on I’m tempted to consider that the article’s reference to “SEO standards” concerns Web admins and web authors. Don’t tell me that universities consider that all of their students will never be anything but website admins, or authors spending their talents only on websites. I mean: life is not only the web, is it?!

The following statement is obviously some form of joke: “[…] content posted to the internet has to conform to SEO standards. …” Incorrect! (I would use a much stronger word or two about such misleading SEO snake oil, but Martin would censor me LOL.)

Perhaps, if instead you actually followed some basic usability and accessibility principles (and wrote for wetware) you’d get much further… A possible reason your articles are potentially being seen as AI driven is because a lot of (duplicated) link text is meaningless – even for a Search engine robot.

Apparently you don’t like having to write “less than 500 word articles”, want to shun “Black hat SEO”, and would prefer writing for an “intellectual human audience” without “shallow marketing drivel”. Then wouldn’t it make sense to try to steer clear of such shady marketing practices (as you implied) in future?

Do I pass as human?

https://www.ghacks.net/2022/12/26/firefoxs-accessibility-performance-is-getting-a-huge-boost/#comment-4555692

I’m certainly “stupid enough” to be wasting my time writing this reply. ;-)

All ChatGPT is, is MiTM

Most may not know what MiTM even means…?

“humans are still taught every single day to write like computers”

You’re really full of pearls of misinformation, aren’t you? The world must be really scary to you…

The problem is not being like a computer; it is living like sheep in front of the TV, voting for some politicians in the hope that they will fix something. And indeed yes, the world is truly scary, trust me. After the Covid-19 pandemic the world is worse than before. Thanks @Shaun for the article, by the way.