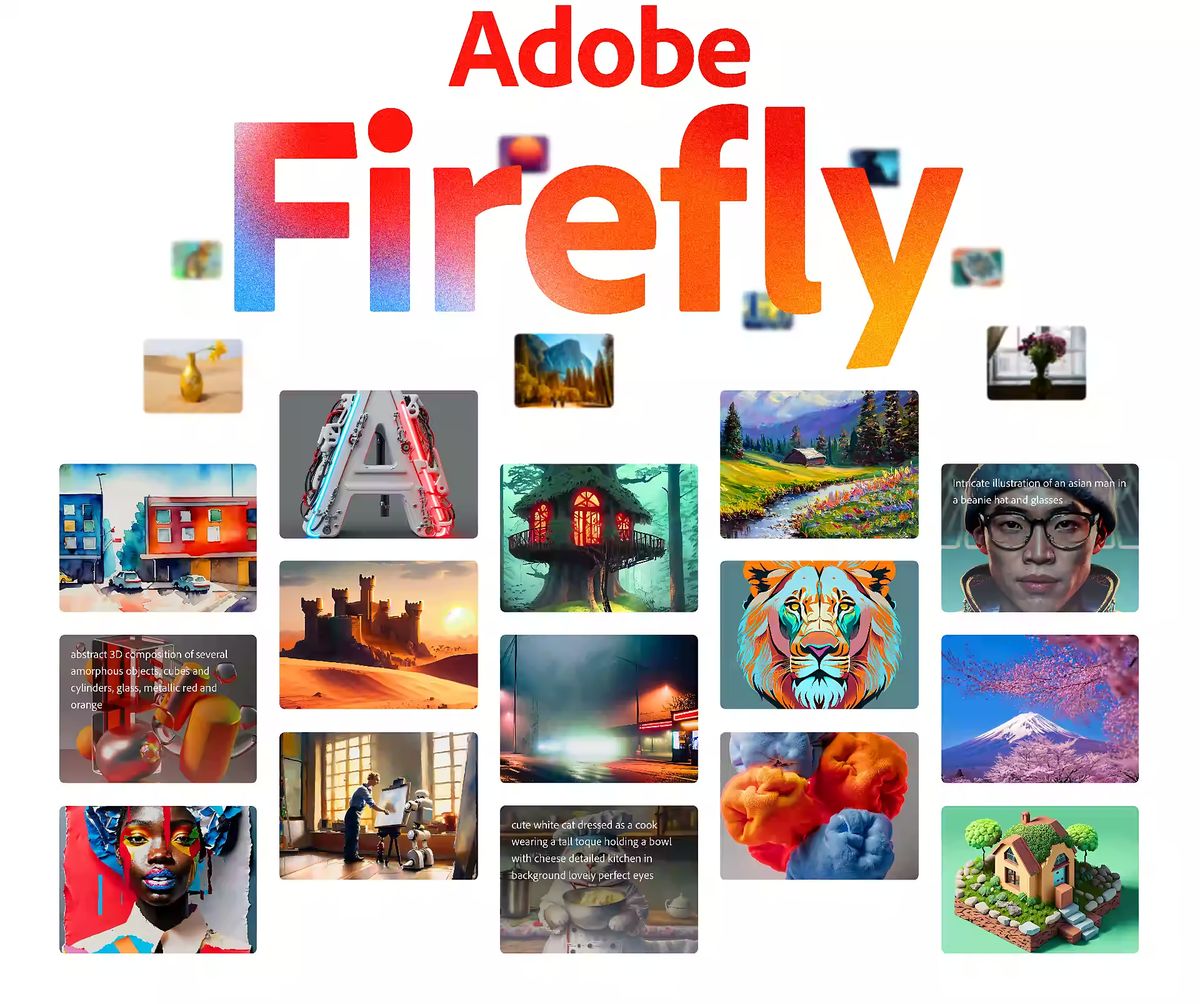

Photoshop powered by Firefly's generative AI

Photoshop is getting generative AI. The latest update introduces several Firefly-based features: users can extend an image beyond its original borders with Firefly-generated backgrounds, add generated objects to an image, and use a new generative fill feature that removes objects more precisely than the existing content-aware fill tool.

For now, these features are available only in the beta version of Photoshop, though Adobe is also bringing some of them to Firefly beta users on the web. Users have created over 100 million images with the Firefly service so far.

With this integration, Photoshop users can describe the image or object they want Firefly to generate in a natural-language text prompt. As with all generative AI tools, the results can be unpredictable. By default, Adobe returns three variations for each prompt. Unlike the Firefly web app, though, Photoshop currently has no option to iterate on a specific result to explore similar variations.

To accomplish this, Photoshop selectively sends portions of a given image to Firefly for processing, rather than the entire image, although the company is also actively exploring the potential of employing the entire image. Subsequently, a new layer is created within Photoshop to accommodate the generated results.
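The flow described above (send only the selected portion, then place the result on a new layer) can be illustrated with a minimal Python sketch. It is not Adobe's implementation or API: the `Layer` type, `crop`, `generative_fill`, and the `fake_generate` stand-in for the remote model are all hypothetical names chosen for illustration, and the image is modeled as a plain list of RGB rows.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]  # rows of RGB pixels
Selection = Tuple[int, int, int, int]  # x, y, width, height


@dataclass
class Layer:
    """A generated patch plus the canvas offset where it sits (hypothetical type)."""
    x: int
    y: int
    pixels: Image


def crop(image: Image, x: int, y: int, width: int, height: int) -> Image:
    """Copy out only the selected region instead of the whole image."""
    return [row[x:x + width] for row in image[y:y + height]]


def generative_fill(
    image: Image,
    selection: Selection,
    prompt: str,
    generate: Callable[[Image, str], Image],
) -> Layer:
    """Send the selected region and the prompt to `generate`, return a new layer."""
    x, y, width, height = selection
    region = crop(image, x, y, width, height)
    patch = generate(region, prompt)  # stands in for the remote service call
    # The result lives on its own layer; the original pixels are left untouched.
    return Layer(x=x, y=y, pixels=patch)


def fake_generate(region: Image, prompt: str) -> Image:
    """Hypothetical stand-in for the model: paints the region solid blue."""
    return [[(0, 0, 255) for _ in row] for row in region]


canvas: Image = [[(255, 255, 255)] * 8 for _ in range(6)]
layer = generative_fill(canvas, (2, 1, 4, 3), "a puddle", fake_generate)
```

Keeping the output on a separate layer means the edit stays non-destructive: the generated patch can be hidden or deleted without altering the original pixels.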

According to TechCrunch, Maria Yap, Adobe's vice president of Digital Imaging, gave the publication a demonstration of the new features ahead of the official announcement. As with any generative AI tool, it is hard to predict exactly what the model will produce, but some of the results were surprisingly impressive.

For example, when asked to generate a puddle beneath a running corgi, Firefly appeared to account for the overall lighting in the image and even produced a realistic reflection. Not every result was flawless (one variation was a vivid purple puddle), but the model is generally good at adding objects to images and especially good at extending images beyond their original frame.

Firefly was trained on the photos available in Adobe Stock, along with other commercially safe images, so it is perhaps unsurprising that it performs well with landscapes. Like many other image generators, however, Firefly struggles with text.

Adobe has taken steps to ensure that the model's results are safe. Part of that comes from the carefully curated training set.

“We married that with a series of prompt engineering things that we know. We exclude certain terms, certain words that we feel aren’t safe. And then we’re even looking into another hierarchy of ‘if Maria selects an area that has a lot of skin in it, maybe right now — and you’ll actually see warning messages at times — we won’t expand a prompt on that one, just because it’s unpredictable. We just don’t want to go into a place that doesn’t feel comfortable for us,” stated Yap.

Adobe is focusing on Firefly nowadays

Last month, Adobe moved to make specialized video editing skills accessible to a wider audience with the introduction of Firefly. The tool uses artificial intelligence to assist with parts of the creative process, particularly color grading and storyboard creation.

With these AI-powered features, Adobe aims to give users a more intuitive and streamlined approach to video editing, so that even users without extensive expertise can work through the creative process more easily and efficiently.
