Unique Content Verifier (Un.Co.Ver)

One of the biggest problems for webmasters on today's Internet is scraped content. What does that mean exactly? It means that other webmasters copy content from a website, usually without authorization. That is content theft and copyright infringement.

The technology in this area has advanced a lot in recent years. Today it is possible to set up a domain, an auto blog and advertising in less than ten minutes. The content is scraped automatically from RSS feeds, and the site then runs on autopilot.

These websites sometimes rank higher than the original website. This is one of the biggest problems Google is currently trying to resolve.

How can webmasters find sites that scrape their content? They can use a search engine like Bing or Google and enter a unique phrase from one of their articles as the search term to find other websites that contain the same phrase.
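For webmasters who run this manual check often, the search itself can be scripted. The sketch below is a minimal illustration, not part of Un.Co.Ver: it wraps a phrase in double quotes, which both engines treat as an exact-phrase search, and builds the standard Google and Bing search URLs. The function name `exact_phrase_search_urls` is hypothetical.

```python
from urllib.parse import quote_plus


def exact_phrase_search_urls(phrase: str) -> dict[str, str]:
    """Build Google and Bing search URLs for an exact-phrase query.

    Hypothetical helper: the phrase is wrapped in double quotes so the
    search engine only returns pages containing those words in that order.
    """
    query = quote_plus(f'"{phrase.strip()}"')  # '"a b"' -> '%22a+b%22'
    return {
        "google": f"https://www.google.com/search?q={query}",
        "bing": f"https://www.bing.com/search?q={query}",
    }


if __name__ == "__main__":
    # Use a distinctive sentence fragment from your own article.
    urls = exact_phrase_search_urls("scraped automatically from RSS feeds")
    for engine, url in urls.items():
        print(engine, url)
```

Opening the printed URLs in a browser gives the same result as typing the quoted phrase into the search box by hand.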

The Unique Content Verifier (Un.Co.Ver) offers another option. The free Java-based software is available for Windows, Linux and Macintosh computers. It can search the web, a specific domain or multiple websites for plagiarized text.

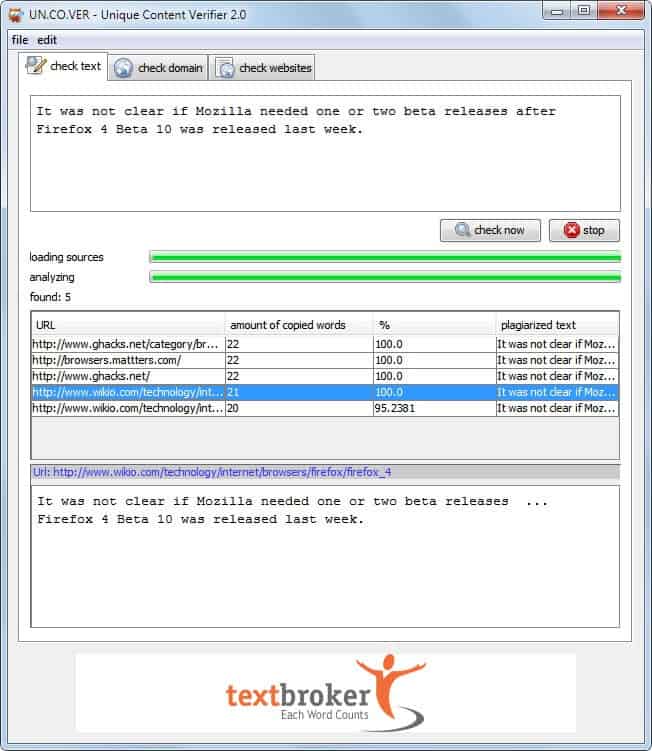

The application displays its three search options as tabs at the top. Check Text is the simplest. A phrase or paragraph needs to be entered into the form at the top before the Check Now button becomes active. Clicking it searches the Internet for matches. It is not clear how and where the search is performed.

All matching domains are shown in a list. The information includes the URL, the number of copied words, the percentage and the plagiarized text. Clicking an item in the table displays the full plagiarized text below it.
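Un.Co.Ver does not document how it calculates the number of copied words or the percentage. One common way to approximate such figures is word shingling: split both texts into overlapping runs of n words and count the words of the original that fall inside a run also present in the suspected copy. The sketch below uses that technique as an assumption; the function names, the shingle length of 5 and the choice to express the percentage relative to the original text are all hypothetical.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def copied_word_stats(original: str, candidate: str, n: int = 5) -> tuple[int, float]:
    """Return (copied word count, percent of original words copied).

    Hypothetical measure: a word of the original counts as copied if it lies
    inside any n-word sequence that also appears verbatim in the candidate.
    """
    orig = tokenize(original)
    cand = tokenize(candidate)
    if len(orig) < n or len(cand) < n:
        return 0, 0.0

    # All n-word sequences (shingles) that occur in the suspected copy.
    cand_shingles = {tuple(cand[i:i + n]) for i in range(len(cand) - n + 1)}

    # Mark every original word covered by a shared shingle.
    covered = [False] * len(orig)
    for i in range(len(orig) - n + 1):
        if tuple(orig[i:i + n]) in cand_shingles:
            for j in range(i, i + n):
                covered[j] = True

    copied = sum(covered)
    return copied, 100.0 * copied / len(orig)


if __name__ == "__main__":
    words, percent = copied_word_stats(
        "the quick brown fox jumps over the lazy dog",
        "a quick brown fox jumps over a fence",
    )
    print(words, round(percent, 1))
```

A longer shingle length reduces false matches on common phrases but misses copies that were lightly reworded.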

Check Domain replaces the phrase form with a URL field. Un.Co.Ver scans the URL for content and tries to find websites that copied that content. A filter is available to limit the search to specific content. The rest of the process stays the same.

Check Websites is a more advanced version of Check Domain. It can be used to find copied content across multiple pages of a website. The Unique Content Verifier crawls one or more websites, and the crawled pages are then used as the source for the plagiarism check.

It is theoretically possible to check all pages of a website at once, but this may take a very long time depending on the number of pages on that website.

Unique Content Verifier is an easy-to-use program. The option to verify multiple pages is especially handy, since that is not possible with a manual search on Google or Bing. However, the program usually does not find as many scraper sites as a manual search on a search engine would.

Un.Co.Ver is available for download at the project website over at Textbroker.

Update: The Unique Content Verifier is no longer available at the Textbroker website.


Unfortunately UN.CO.VER is no longer available …

That’s too bad. I have removed the link and posted an update.

Thanks for the article. It finally explained to me how Un.Co.Ver works.

Martin, thanks for this post and for directing me to Uncover. I have an article submission site, and this is exactly what I need to eliminate scraped content and only add unique and relevant articles.

Cheers,

Ben