View websites offline with WebHTTrack

There are plenty of reasons to view a web site offline. Say, for example, you know you will be on the go without reliable access to a network connection, yet you want to stay up to date on the latest news. Or you are a developer working on a site and need to make changes, or check the site for bugs or broken links. Or maybe you are developing a new site and want to loosely base it on an existing one (all the while crediting the original site, of course).

You can come up with plenty of reasons for this action, and fortunately there are plenty of tools to enable it. One of those tools is WebHTTrack. WebHTTrack is the Linux version and WinHTTrack is the Windows version, so not only can you read your sites offline, you can read them on either platform. In this article I will show you how to do just that, on the Linux platform only.

Installation

Installation is quite simple. Let's take a look at how to do this from the command line for both Ubuntu and Fedora. The Ubuntu steps look like this:

- Open up a terminal window.

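- Issue the command sudo apt-get install httrack (if the Web HTTrack menu entry does not appear afterwards, the browser front end may be packaged separately as webhttrack).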

- Type your sudo password and hit Enter.

- Accept any dependencies that might be necessary.

- When the installation is complete, close the terminal window.
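The Ubuntu steps above can be sketched as a small shell function. This is a minimal sketch, not an official installer: the names run, install_httrack_ubuntu, and DRY_RUN are made up for illustration, and the apt-get update line is an addition that is not part of the listed steps.

```shell
# Run a command, or only print it when DRY_RUN=1 (useful for a first look).
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Install httrack as in the steps above; apt-get still asks for confirmation.
# "apt-get update" is an addition of this sketch, not one of the listed steps.
install_httrack_ubuntu() {
  run sudo apt-get update
  run sudo apt-get install httrack
}
```

Setting DRY_RUN=1 before calling install_httrack_ubuntu prints the commands instead of running them, which is a safe way to check what would happen first.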

The Fedora installation is very similar:

- Open up a terminal window.

- Type su to switch to the root user.

- Type the root password and hit Enter.

- Issue the command yum install httrack.

- Accept any dependencies that might be necessary.

- When the installation is complete, close the terminal window.
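The Fedora steps can be collapsed into a single line with su -c. The sketch below only prints that line rather than running it. It assumes that newer Fedora releases ship dnf in place of yum, so it prefers dnf when that command is present; pick_pkg_tool and fedora_install_cmd are hypothetical helper names.

```shell
# Prefer dnf when it is installed, otherwise fall back to yum as in the steps.
pick_pkg_tool() {
  if command -v dnf >/dev/null 2>&1; then
    echo dnf
  else
    echo yum
  fi
}

# Print (not run) a one-line equivalent of "su, password, install httrack".
fedora_install_cmd() {
  echo "su -c '$(pick_pkg_tool) install httrack'"
}

fedora_install_cmd
```

Copy the printed line into a terminal to run it; su will prompt for the root password as in the manual steps.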

You're ready to start downloading sites. When WebHTTrack is installed, you can start it by clicking Applications > Internet > Web HTTrack Website Copier.

Usage

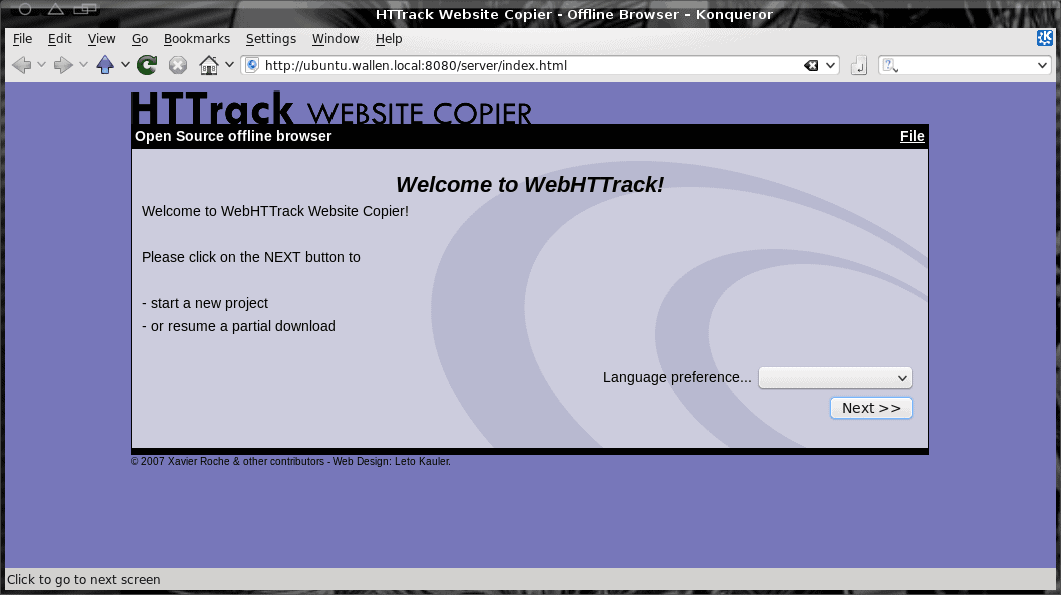

Screen 2

Name: Give the project a name (or select from pre-existing projects).

Category: Give the project a category (or select from pre-existing categories).

Base path: Select where you want the project saved (by default it is in ~/websites).

Screen 3

Action: Select what WebHTTrack should do: Download web site(s), Download web site(s) + questions, Get individual files, Download all sites in pages, or Test links in pages. You can also choose Continue interrupted download or Update existing download.

Web Addresses: Enter the URL you want to download.

In this same screen you can also set Preferences and Mirror options. There are plenty of options to select from (such as Build, Scan Rules, Spider, Log/Index/Cache, Flow Control, and more).
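The same choices can also be made from a terminal with the httrack command-line program. The sketch below maps three of the actions (download, update, continue) to command lines and prints them rather than running them. It assumes the project lives under ~/websites and that --update and --continue are run from inside the project directory; mirror_cmd, the project name, and the example.com address are placeholders for illustration.

```shell
# Print an httrack command line for a project under ~/websites.
# Usage: mirror_cmd URL PROJECT_NAME [mirror|update|continue]
mirror_cmd() {
  url=$1
  name=$2
  action=${3:-mirror}
  dest="$HOME/websites/$name"
  case "$action" in
    mirror)
      # -O sets the output path, like the Base path and Name fields.
      printf 'httrack "%s" -O "%s"\n' "$url" "$dest"
      ;;
    update)
      # Refresh an existing download (Update existing download).
      printf 'cd "%s" && httrack --update\n' "$dest"
      ;;
    continue)
      # Resume a stopped download (Continue interrupted download).
      printf 'cd "%s" && httrack --continue\n' "$dest"
      ;;
    *)
      echo "unknown action: $action" >&2
      return 1
      ;;
  esac
}

mirror_cmd "https://example.com/" example
```

Printing the command first makes it easy to check the destination before anything is downloaded; paste the line into a terminal to run it.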

Screen 4

This final screen gives you a last chance to make any adjustments and allows you to save your settings only (for later download). Or you can simply click Start to begin the download process.

Once you start downloading, you will see a progress screen that shows what has been downloaded. Depending on the size and depth of the site, this process can take quite some time. Once the download is finished, you can browse your downloaded site by opening your browser and navigating to the download directory of that site (it will be a sub-directory within ~/websites).
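To find the pages to open, a short shell function can list each downloaded project as a file:// address. This is a sketch under two assumptions: the base path is ~/websites, and each project directory has an index.html at its top level. The function name list_mirrors is made up for illustration.

```shell
# List locally mirrored projects as file:// addresses a browser can open.
# The base path defaults to ~/websites; pass another directory to override it.
list_mirrors() {
  base=${1:-"$HOME/websites"}
  for page in "$base"/*/index.html; do
    # Skip the unexpanded pattern when no project has an index page.
    [ -f "$page" ] || continue
    echo "file://$page"
  done
}
```

Running list_mirrors prints one address per project; paste one into the browser's address bar. Paths containing spaces are printed as-is and may need escaping.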

Final thoughts

No matter the reason for needing a downloaded web site, it's good to know there are tools that can handle this task. WebHTTrack is one of the easiest and most reliable of these tools I have found. And since it's cross-platform, you won't miss a beat switching back and forth between Linux and Windows.

I ripped the website, but the site's built-in search engine does not work in the ripped copy, so I cannot make use of the material I downloaded. Please suggest a solution.

Have been using this for ages, and it works very well