Google Adds Site Speed To Web Search Ranking Algorithm

Google announced on its Webmaster Central Blog today that a site speed factor has been added to the company's web search ranking algorithm. This means that site speed is one of the many factors that may impact a site's performance on the company's search engine.

Site speed is yet another factor that webmasters have to take into consideration to make sure their websites rank well.

Details are, as always, scarce at this point. According to the blog post, the feature was enabled a few weeks ago. It is currently active only on google.com and only for English queries.

According to Google, first results show that fewer than 1% of all search queries are affected by the site speed signal that its engineers have added to the algorithm.

Less than 1% might not sound like much, but it still means that up to 1 out of every 100 queries is affected by the algorithmic change.

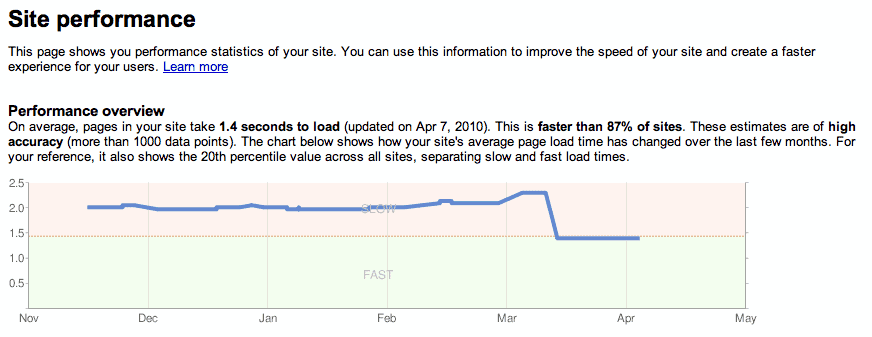

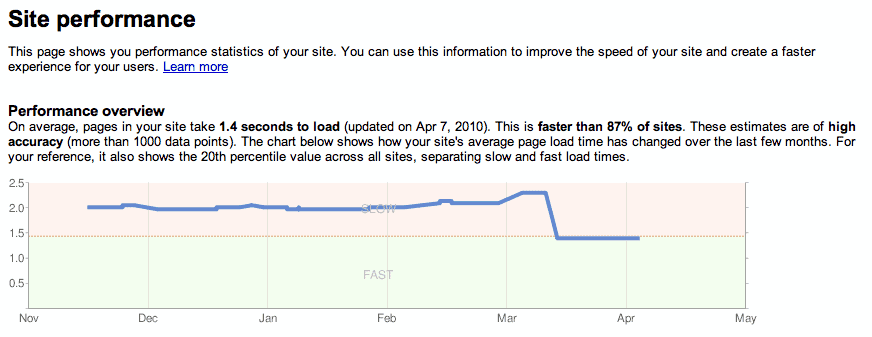

The blog post offers links to the usual tools that can help webmasters evaluate their site's performance.

They include Page Speed, YSlow and WebPagetest, among others. These tools analyze the loading time of a selected page of a website in detail and point out areas for improvement.

Basic examples are running fewer scripts, compressing certain types of data, and making sure that images are optimized and saved in proper web formats.
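To illustrate why compression is on that list, here is a minimal sketch that estimates how many bytes gzip would save on a text payload. The function name `compression_savings` and the sample markup are made up for this example; real pages will compress less dramatically than this deliberately repetitive snippet.

```python
import gzip


def compression_savings(payload: bytes) -> float:
    """Return the fraction of bytes saved by gzip-compressing the payload."""
    if not payload:
        return 0.0
    compressed = gzip.compress(payload)
    return 1 - len(compressed) / len(payload)


# Hypothetical, highly repetitive HTML standing in for a real page.
sample_html = ("<div class='post'><p>Hello, world!</p></div>\n" * 200).encode("utf-8")

savings = compression_savings(sample_html)
print(f"gzip saved {savings:.0%} of {len(sample_html)} bytes")
```

Text formats such as HTML, CSS and JavaScript usually benefit the most. Images in formats like JPEG or PNG are already compressed, so gzip gains little there.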

The change leaves many questions unanswered.

- How does this affect sites with lots of content or large files, like photo hosting sites, gaming sites or sites with external scripts (advertisements, for instance)?

- How do webmasters know if their site's ranking has dropped because of site speed?

- What are acceptable values for site performance? Is it the sub-5-second load time suggested in the Webmaster Tools Site Performance graph?
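Until Google publishes thresholds, webmasters can at least measure their own pages against a budget. The following is a rough sketch only: `timed_load`, `is_within_budget` and `fetch_url` are hypothetical helper names, the 5 second budget is taken from the Site Performance graph mentioned above and is not a confirmed ranking threshold, and timing a single HTML download is much cruder than what Page Speed or YSlow report.

```python
import time
import urllib.request
from typing import Callable, Tuple


def fetch_url(url: str, timeout: float = 30.0) -> bytes:
    """Download the raw HTML document only (no images, scripts or rendering)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return response.read()


def timed_load(fetch: Callable[[], bytes]) -> Tuple[float, int]:
    """Run fetch() and return (elapsed seconds, bytes received)."""
    start = time.perf_counter()
    body = fetch()
    elapsed = time.perf_counter() - start
    return elapsed, len(body)


def is_within_budget(seconds: float, budget: float = 5.0) -> bool:
    """True if the load time is strictly below the assumed budget."""
    return seconds < budget


# Simulated fetch so the example runs offline; for a real check use
# timed_load(lambda: fetch_url("https://example.com/")) instead.
def fake_fetch() -> bytes:
    time.sleep(0.05)
    return b"<html><body>placeholder page</body></html>"


elapsed, size = timed_load(fake_fetch)
verdict = "within" if is_within_budget(elapsed) else "over"
print(f"{size} bytes in {elapsed:.2f}s, {verdict} the assumed 5s budget")
```

Running the measurement several times, at different hours, gives a fairer picture than a single sample, since server load and network conditions vary.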

What's your opinion on the matter? Do you think this is a good change? I for one do not like it one bit as a webmaster, mostly because Google failed to reveal essential information.

What is certain however is that the move will speed up the web as a whole, and that is a good thing.

I think this is a positive change because it encourages optimization of the website’s code, and discourages unnecessarily heavy websites. Let us remember that bandwidth is a finite resource and this should have a positive effect in the long run.

With a lot of webmasters heavily into search engine optimization, I think this should have a more positive effect than the possible negative effect on the less than 1% of search queries affected.

I am curious about how Google does it. This means that web developers have another job to do (optimizing their website's performance… fuh)

Site speed is only part of the problem. Another annoyance for users is plugin heaviness. Surely having a site that slows down or even crashes browsers is a bad thing.

Also, a 5 second load time isn’t very long; I get it a lot from sites that have heavily loaded servers.

The problem here is that we have (at least) two types of search engine users: those who want to find general information, and those who are looking for a specific page. Adding a site speed factor will benefit the first group but may cause trouble to the second group. Also, as mentioned in the article, even those in the first group may get affected if the types of sites are all content heavy.

Sites shouldn’t post a lot of screenshots on pages that they hope to get high in Google rankings. Post thumbnails or structure the page in some way so that you are not penalized.

I’m sure that Google has not chosen to do this arbitrarily, that they have studied and back tested the effects extensively. Let’s see how it works out.

I think adding a speed factor in is positive. But maybe Google should only apply that algorithm to say the top 100 results?

Remember, the overall goal is to serve results pages to the user.

I regularly run into pages from a Google search that take too long to open. Tired of waiting, I abandon them and move on to the next result in my search. Why should a page that delays a user excessively receive a top ranking?

Jojo, there are many factors that can lead to a slow page loading time; think of a DDoS attack. I would think it is fine if they factor the signal in, but only for really slow sites that have a record of loading slowly. Say everything above 10 or 15 seconds or so. Think of a site that reviews software and posts lots of screenshots versus a site that posts no screenshots. If everything else is similar, the former will load slower than the latter. I do not like it, especially since Google is not giving webmasters any indication whether their site’s rankings are affected by this or not.